Navigating Ethical AI for Your Marketing Team

As small to mid-sized businesses increasingly adopt AI in marketing, the conversation quickly shifts from ‘can we?’ to ‘should we?’ and ‘how do we do it right?’. This article cuts through the hype to offer a pragmatic guide to integrating ethical AI practices into your marketing operations. You’ll learn what to prioritize, which tools offer real value, and, crucially, what to deprioritize so you can protect your brand, maintain customer trust, and stay compliant without overstretching your limited resources.

Our focus is on actionable steps that allow you to leverage AI’s power responsibly, turning potential risks into opportunities for stronger customer relationships and sustainable growth.

Understanding Ethical AI in Practice for SMBs

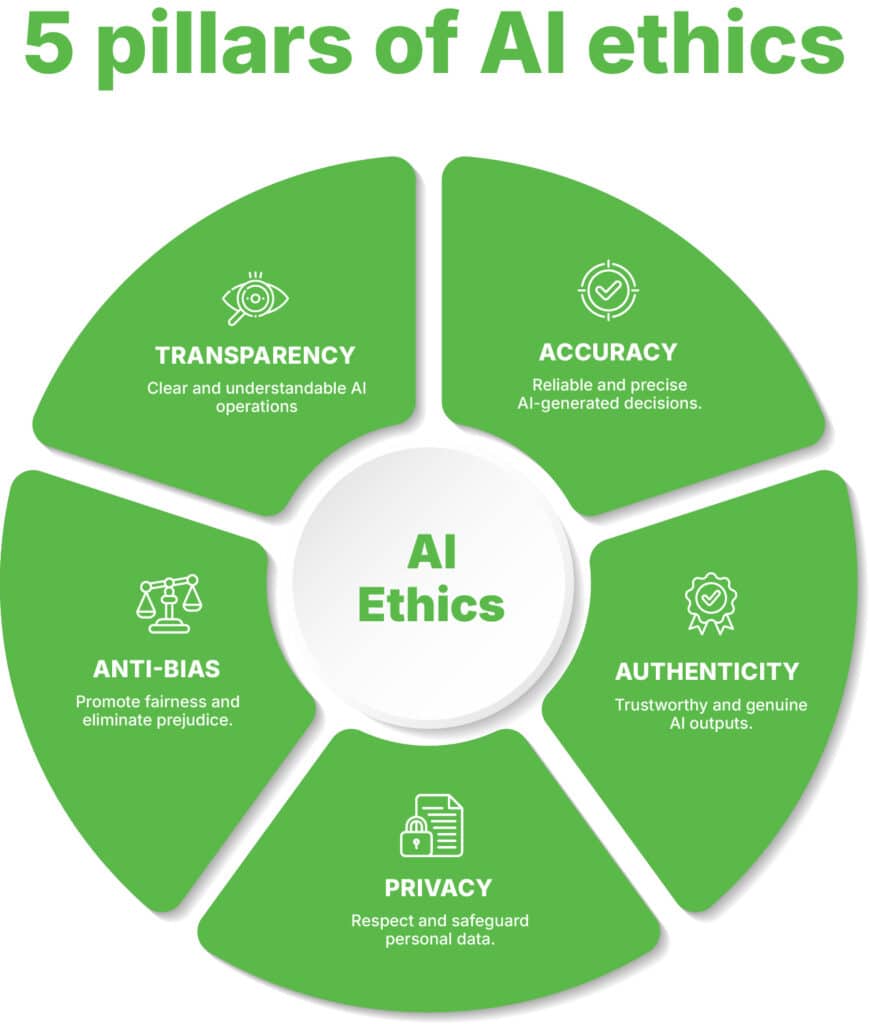

For a marketing team operating with real-world constraints, ‘ethical AI’ isn’t an abstract concept; it’s about mitigating tangible risks. It means ensuring your AI-driven campaigns don’t inadvertently alienate customers, violate privacy laws, or damage your brand’s reputation. The core pillars here are data privacy, bias detection, and transparency.

- Data Privacy & Security: This is foundational. AI systems often require vast amounts of customer data. Responsible use means adhering to regulations like GDPR and CCPA, securing data, and respecting user consent. Missteps here lead to significant legal and reputational damage.

- Bias Detection & Mitigation: AI learns from the data it’s fed. If that data reflects societal biases, your AI will perpetuate them. This can manifest in discriminatory ad targeting, skewed content recommendations, or even unfair pricing. Proactive identification and correction are essential.

- Transparency & Explainability: Can you explain how your AI made a specific decision? For instance, why was a particular ad shown to one segment but not another? While full explainability is complex, a basic understanding of your AI’s decision-making process builds trust with both customers and regulators.

What often gets overlooked in the rush to deploy AI is the hidden cost of data debt. Feeding an AI system with uncleaned, inconsistent, or poorly sourced data doesn’t just risk privacy violations; it guarantees ‘garbage in, garbage out.’ The immediate consequence is often wasted ad spend on ineffective campaigns or skewed insights that lead to poor strategic decisions. Beyond explicit demographic biases, teams can inadvertently train AI on historical data that reflects past business priorities, not future growth. For instance, an AI might optimize for short-term conversions, subtly deprioritizing segments that offer higher lifetime value but convert slower, simply because the historical data rewarded quick wins.
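The short-term conversion example above can be made concrete with a small sketch. The segment names and figures below are invented purely for illustration, and the `Segment` class and both ranking functions are hypothetical helpers, not part of any ad platform. The sketch only shows how the same segments can rank in opposite order depending on whether you score them by conversion rate alone or by conversion rate multiplied by average lifetime value.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    name: str
    conversion_rate: float     # share of contacts who convert (illustrative)
    avg_lifetime_value: float  # average revenue per converted customer (illustrative)


def rank_by_conversion(segments):
    """Order segments the way a purely short-term objective would."""
    ordered = sorted(segments, key=lambda s: s.conversion_rate, reverse=True)
    return [s.name for s in ordered]


def rank_by_expected_value(segments):
    """Order segments by expected lifetime value per contact."""
    ordered = sorted(
        segments,
        key=lambda s: s.conversion_rate * s.avg_lifetime_value,
        reverse=True,
    )
    return [s.name for s in ordered]


# Made-up numbers, chosen only to show the ranking flip.
segments = [
    Segment("bargain_hunters", 0.08, 40.0),
    Segment("slow_evaluators", 0.03, 400.0),
    Segment("repeat_buyers", 0.05, 150.0),
]

conversion_order = rank_by_conversion(segments)
value_order = rank_by_expected_value(segments)
print(conversion_order)
print(value_order)
```

If the two orderings diverge sharply on your own data, that is a signal to check which objective your AI tooling is actually optimizing for.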

The push for transparency, while critical, also introduces a practical dilemma. For many SMBs, achieving true explainability for complex AI models is a significant technical hurdle. The temptation is to settle for superficial explanations or ‘narratives’ about how the AI works, rather than a deep, actionable understanding. This isn’t just about satisfying regulators; it’s about the team’s ability to diagnose problems. Without genuine insight into the AI’s decision logic, troubleshooting becomes guesswork, leading to prolonged periods of underperformance and immense frustration for the marketing team trying to course-correct.

Given these practical constraints, a common misstep is to chase full, real-time explainability for every AI decision from day one. For most SMBs, this is an overreach. Instead, prioritize understanding the aggregate behavior of your AI and establishing clear guardrails. Focus on identifying and mitigating the most egregious biases and ensuring data privacy compliance first. Attempting to build a complete explanation layer on top of a ‘black box’ model before you’ve even validated the core AI’s performance and ethical baseline is a resource drain that yields little practical benefit in the early stages. Get the fundamentals right, then iterate on deeper explainability as your capabilities and needs evolve.

Prioritizing Ethical AI Considerations for SMBs

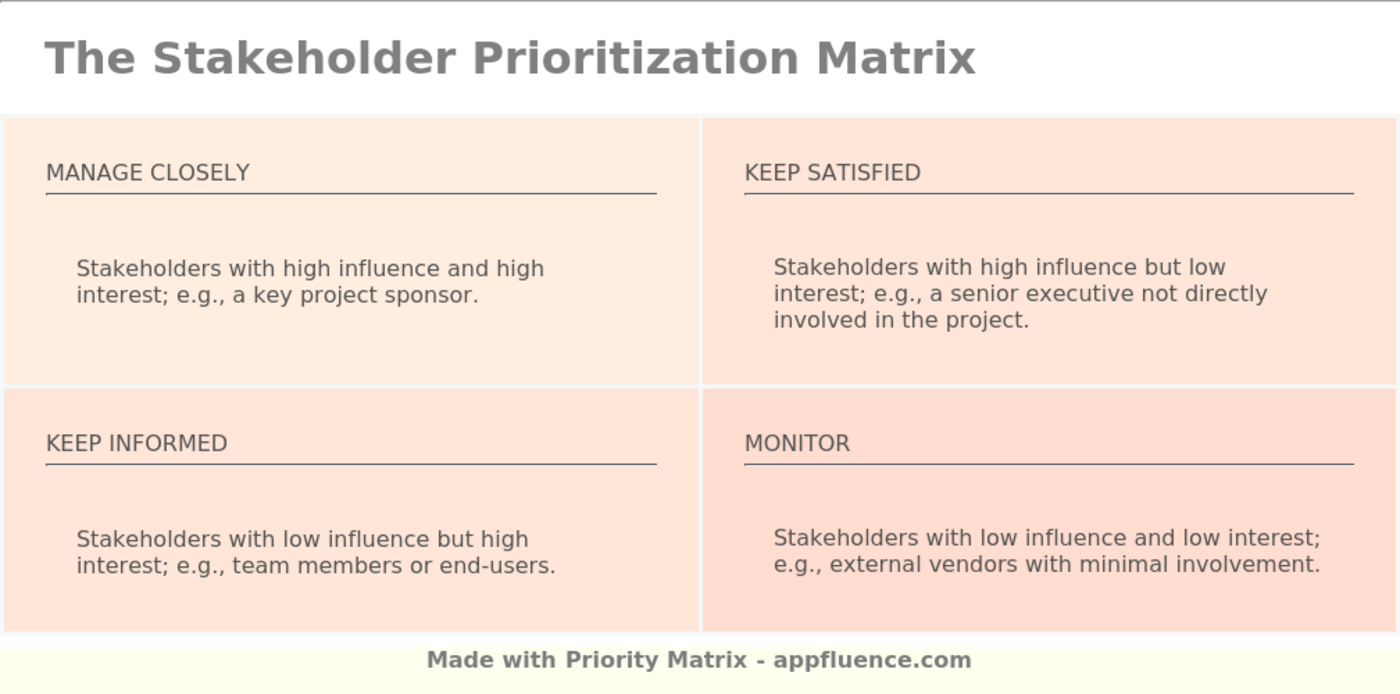

Given limited bandwidth, you can’t tackle every ethical AI challenge at once. Here’s where to focus your initial efforts:

- Non-Negotiable: Data Privacy Compliance. Start here. Ensure your data collection, storage, and processing practices align with relevant privacy laws. This isn’t just ethical; it’s a legal imperative. Implement robust consent mechanisms and data security protocols.

- High Impact: Bias in Audience Segmentation and Content Generation. Your AI-powered ad platforms and content tools can easily perpetuate biases. Regularly audit your audience segments for unintended exclusions or over-representation. Review AI-generated copy and visuals for stereotypes or inappropriate messaging before publication. This directly impacts brand perception and reach.

- Building Trust: Transparency in AI-Powered Interactions. When AI is used in customer service chatbots, personalized recommendations, or content curation, be upfront about it. A simple disclosure like ‘This chat is assisted by AI’ or ‘AI-powered recommendations’ can significantly enhance trust.
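One way to approach the audience-segment audit described above is to compare each group's share of a targeted segment with its share of your overall customer base. The sketch below is a minimal illustration under stated assumptions: the `age_band` attribute, the sample records, and the 0.8 and 1.25 thresholds are all hypothetical choices, not regulatory standards, and a real audit would use whichever attributes are relevant and lawful to process in your context.

```python
from collections import Counter


def representation_ratios(customer_base, targeted, attribute):
    """Return targeted share divided by base share for each group in the base.

    A ratio near 1.0 means the group is targeted in proportion to its size.
    Groups that appear only in the targeted list are ignored here.
    """
    base_counts = Counter(c[attribute] for c in customer_base)
    target_counts = Counter(c[attribute] for c in targeted)
    base_total = len(customer_base)
    target_total = len(targeted)

    ratios = {}
    for group, count in base_counts.items():
        base_share = count / base_total
        target_share = target_counts.get(group, 0) / target_total if target_total else 0.0
        ratios[group] = target_share / base_share
    return ratios


def flag_skewed_groups(ratios, low=0.8, high=1.25):
    """List groups whose ratio falls outside the (illustrative) thresholds."""
    return sorted(g for g, r in ratios.items() if r < low or r > high)


# Hypothetical data: 10 customers overall, 5 selected by an AI-built segment.
customer_base = (
    [{"age_band": "18-34"}] * 4
    + [{"age_band": "35-54"}] * 4
    + [{"age_band": "55+"}] * 2
)
targeted = [{"age_band": "18-34"}] * 3 + [{"age_band": "35-54"}] * 2

ratios = representation_ratios(customer_base, targeted, "age_band")
flagged = flag_skewed_groups(ratios)
print(flagged)
```

A flagged group is a prompt for human review, not proof of bias; some skews are intentional and defensible, while others indicate an unintended exclusion.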

What’s often underestimated in these initial steps is the long-term operational burden and the potential for second-order effects. For data privacy, the initial compliance setup feels like a project with a clear end. However, the hidden cost emerges in the ongoing vigilance required. Regulations evolve, and relying solely on a platform’s built-in features without understanding the underlying legal nuances can create a false sense of security. A minor data handling oversight, when eventually discovered, can trigger disproportionate reputational damage and legal fees for an SMB, far outweighing the initial investment in robust, proactive measures and continuous monitoring.

Similarly, addressing bias in AI isn’t just about auditing your own data; it’s easy to overlook the inherent biases embedded within the foundational models themselves, which are often trained on vast, unfiltered internet data. Small teams, lacking dedicated AI ethics specialists, might not have the tools or expertise to detect these subtle, systemic biases. This creates a practical dilemma: AI promises efficiency, but the pressure to quickly deploy content or segmentation can lead human teams to skip the critical, time-consuming review needed to catch these deeper issues, inadvertently amplifying existing societal biases and alienating segments of your audience over time.

Finally, while transparency is crucial, simply adding a disclaimer isn’t a magic bullet. The non-obvious failure mode is when teams equate “disclosure” with “setting realistic expectations.” If an AI-powered tool consistently underperforms or provides unhelpful responses despite the disclaimer, user frustration will still mount. The internal pressure to present AI as a seamless, intelligent solution can lead teams to downplay its limitations, creating a gap between user expectations and reality that erodes trust faster than if the AI’s boundaries were clearly communicated from the outset. This can lead to a slow, frustrating decline in customer satisfaction, despite best intentions.

Practical Tools for Responsible AI Implementation

You don’t need bespoke, enterprise-level solutions. Focus on tools that integrate into your existing workflows and address the core priorities:

- Consent Management Platforms (CMPs): Essential for managing user consent for cookies and data processing. Many marketing automation platforms now offer integrated or easily connectable CMP features. This is your first line of defense for data privacy.

- AI Content Reviewers: While not perfect, tools that analyze AI-generated text for tone, sentiment, and potential bias can be invaluable. These add an automated check alongside human review, flagging content that might be off-brand or insensitive before it goes live.

- Data Governance Features within Existing Platforms: Leverage the data privacy and access controls built into your CRM, marketing automation, and analytics platforms. Ensure only necessary personnel have access to sensitive customer data and that data retention policies are enforced.
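If you export customer data from your CRM before handing it to an AI tool, the consent and retention ideas above can be applied as a simple pre-filter. The sketch below is an assumption-heavy illustration: the field names `consent_personalization` and `last_activity`, the 730-day retention window, and the sample records are all hypothetical, and the appropriate retention period depends on your own policy and legal advice.

```python
from datetime import date, timedelta

# Hypothetical retention window; set this from your own policy.
RETENTION_DAYS = 730


def eligible_for_ai_processing(record, today):
    """True only if consent was explicitly given and the record is within retention."""
    # Missing or non-True consent values are treated as "no consent".
    has_consent = record.get("consent_personalization") is True
    cutoff = today - timedelta(days=RETENTION_DAYS)
    within_retention = record["last_activity"] >= cutoff
    return has_consent and within_retention


def filter_records(records, today):
    """Keep only records that may be passed to an AI-driven workflow."""
    return [r for r in records if eligible_for_ai_processing(r, today)]


# Made-up records for illustration.
records = [
    {"id": "a", "consent_personalization": True, "last_activity": date(2024, 6, 1)},
    {"id": "b", "consent_personalization": False, "last_activity": date(2024, 11, 15)},
    {"id": "c", "consent_personalization": True, "last_activity": date(2021, 1, 1)},
    {"id": "d", "last_activity": date(2024, 12, 1)},  # consent never recorded
]

eligible_ids = [r["id"] for r in filter_records(records, today=date(2025, 1, 1))]
print(eligible_ids)
```

Treating a missing consent value as a refusal is a deliberately conservative design choice: it keeps records with incomplete consent history out of AI processing by default.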

What to Deprioritize or Avoid Today

For small to mid-sized teams, attempting to build or implement highly complex, academic-level AI ethics frameworks or custom bias detection algorithms is a misallocation of resources. These often require dedicated AI ethics researchers and significant engineering investment, which is simply not feasible for most SMBs. Instead of chasing theoretical perfection, focus on practical, observable outcomes. Avoid over-automating critical customer interactions without a human-in-the-loop fallback, as this is a common pathway to PR disasters and customer frustration. Your resources are better spent on robust data privacy, diligent content review, and clear communication.
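A human-in-the-loop fallback can be as simple as a routing rule that sits in front of your chatbot. The sketch below assumes your AI tool exposes some confidence score for its draft reply, which not every product does; the keyword list, the 0.75 threshold, and the `route_reply` function are hypothetical and should be tuned to your own customer interactions.

```python
# Hypothetical triggers; adjust to the topics that matter for your business.
SENSITIVE_KEYWORDS = ("refund", "complaint", "cancel", "legal")
CONFIDENCE_THRESHOLD = 0.75


def route_reply(customer_message, draft_reply, confidence):
    """Decide whether an AI draft is sent automatically or handed to a person."""
    text = customer_message.lower()

    # Sensitive topics always go to a human, regardless of confidence.
    if any(keyword in text for keyword in SENSITIVE_KEYWORDS):
        return {"handler": "human", "reason": "sensitive_topic", "reply": None}

    # Low-confidence drafts are escalated rather than sent.
    if confidence < CONFIDENCE_THRESHOLD:
        return {"handler": "human", "reason": "low_confidence", "reply": None}

    # Otherwise send the draft, with a clear AI disclosure appended.
    disclosed = f"{draft_reply}\n\n(This chat is assisted by AI.)"
    return {"handler": "ai", "reason": "auto", "reply": disclosed}


routine = route_reply("What are your opening hours?", "We are open 9 to 5.", 0.92)
sensitive = route_reply("I want a refund for my order.", "Sure, here is how.", 0.95)
unsure = route_reply("Can this part fit my model?", "Probably yes.", 0.40)
print(routine["handler"], sensitive["handler"], unsure["handler"])
```

Checking the sensitive-topic rule before the confidence rule means a confident but risky reply still reaches a person first.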

Building Trust Through Transparent AI Use

Transparency isn’t just about compliance; it’s a powerful trust-builder. When customers understand how and why AI is being used, they are more likely to engage positively. Consider:

- Clear Disclosures: A simple banner or footnote indicating AI assistance in chatbots or personalized content.

- Opt-Out Options: Where feasible, allow users to opt out of certain AI-driven personalization features.

- Explaining the ‘Why’: Briefly explain the benefit of AI use, e.g., ‘AI helps us tailor product recommendations to your preferences for a better shopping experience.’

This approach transforms AI from a mysterious black box into a helpful assistant, fostering goodwill and loyalty.

Moving Forward with Responsible AI

Implementing ethical AI is an ongoing process, not a one-time fix. Start by auditing your current AI tools and data practices against the privacy and bias considerations discussed. Educate your team on the importance of ethical AI and establish clear guidelines for content review and customer communication. Prioritize human oversight in critical AI-driven processes. By taking these deliberate, practical steps, you can harness the power of AI to grow your business responsibly and build lasting trust with your audience.
