Why AI Governance Isn’t Just for Enterprises

As AI tools become integral to daily operations, small to mid-sized businesses face a critical challenge: how to harness their power without introducing unforeseen risks. This guide cuts through the complexity, offering a pragmatic playbook to establish control and build trust in your AI systems. You’ll gain clear direction on prioritizing efforts, making smart trade-offs, and ensuring your AI investments genuinely drive growth, not headaches.

We’ll focus on actionable steps that fit limited budgets and headcount, helping you navigate the real-world constraints of an SMB. The goal isn’t perfect compliance, but effective management that protects your business and maximizes AI’s strategic value.

Many small to mid-sized businesses (SMBs) mistakenly believe AI governance is a luxury reserved for large corporations with dedicated legal and compliance teams. That belief is a mistake. AI tools are now readily accessible and deeply integrated into marketing, sales, and customer service, so the risks of unmanaged AI are immediate and tangible for businesses of any size. From data privacy breaches to biased outputs that damage customer relations, the operational and reputational costs can be substantial.

For an SMB, a single misstep with an AI tool can have disproportionate consequences. It’s not about adhering to every obscure regulation; it’s about smart risk management and ensuring your AI tools are assets, not liabilities. Ignoring governance means operating blind, leaving your business vulnerable to issues that could erode customer trust or trigger unexpected operational disruptions.

Prioritizing Your AI Governance Efforts: What to Do First

With limited resources, prioritization is paramount. Don’t aim for a comprehensive, enterprise-grade framework from day one. Instead, focus on foundational elements that deliver the most immediate value and mitigate the highest risks.

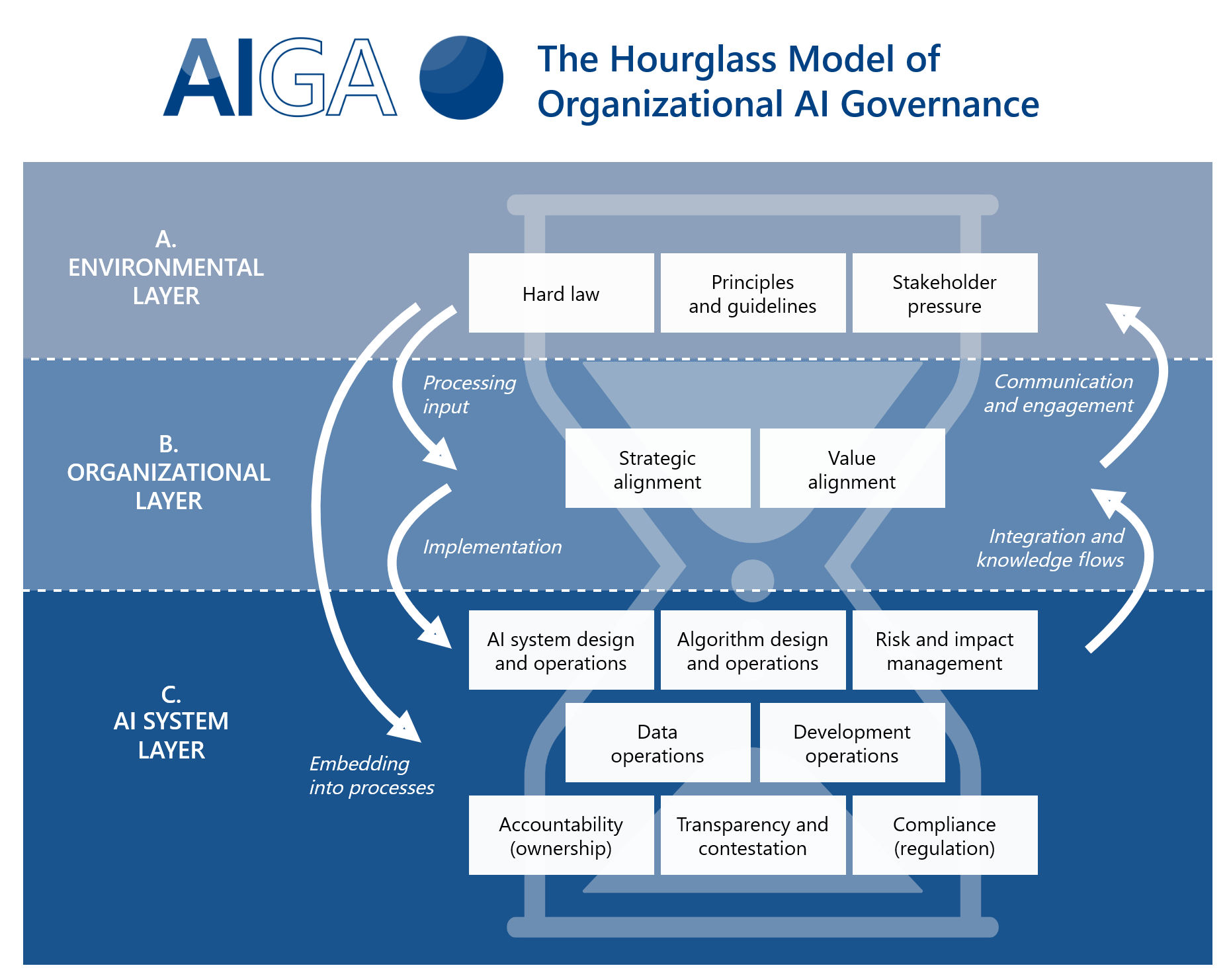

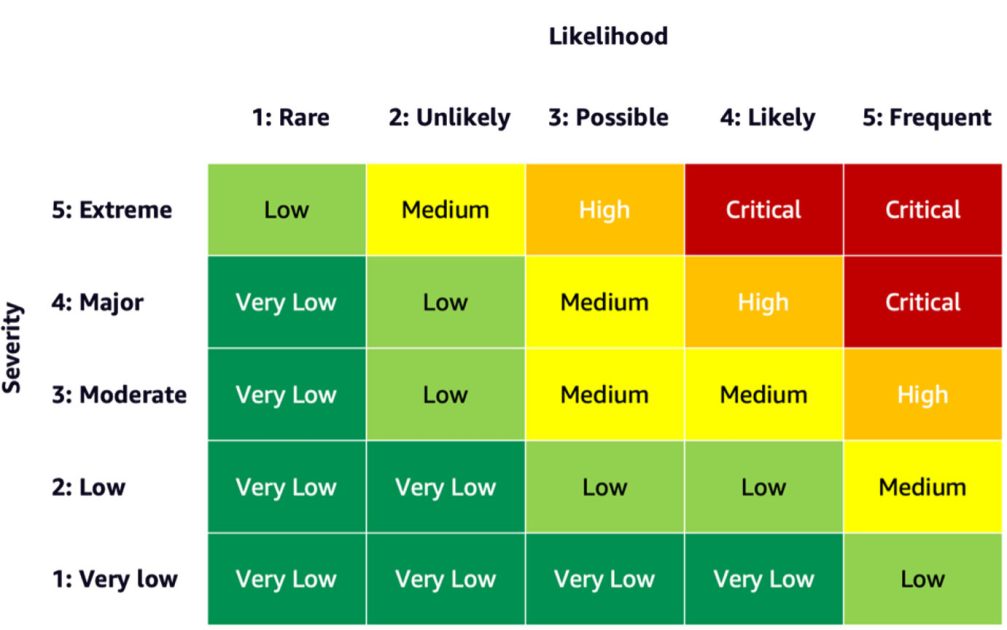

Inventory and Risk Assessment: Before you can govern, you must know what you’re governing. Start by identifying every AI tool currently in use across your organization, even those adopted informally by individual teams (shadow IT). For each tool, assess its purpose, the data it processes, and its potential impact on customers, operations, and reputation. This isn’t a deep dive; it’s a practical mapping exercise to highlight immediate vulnerabilities.
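As a rough illustration of this mapping exercise, the sketch below records each tool with the attributes named above and ranks the list by a simple score. The 1-3 scales, the multiplication rule, the extra weight for informally adopted tools, and all tool names are assumptions made for the example, not a standard scoring method.

```python
from dataclasses import dataclass

# Hypothetical 1-3 scale; pick whatever levels your team can apply consistently.
LEVELS = {"low": 1, "medium": 2, "high": 3}


@dataclass
class AITool:
    name: str
    purpose: str
    data_processed: str
    data_sensitivity: str  # "low", "medium", or "high"
    business_impact: str   # "low", "medium", or "high"
    approved: bool = True  # False for informally adopted (shadow IT) tools

    def risk_score(self) -> int:
        # Assumed rule: sensitivity times impact, plus 2 for unapproved tools.
        score = LEVELS[self.data_sensitivity] * LEVELS[self.business_impact]
        return score + (0 if self.approved else 2)


def rank_by_risk(tools):
    """Return the tools sorted from highest to lowest risk score."""
    return sorted(tools, key=lambda tool: tool.risk_score(), reverse=True)


# Illustrative entries only.
inventory = [
    AITool("Chat assistant", "Draft customer replies", "Support tickets",
           "high", "high", approved=False),
    AITool("Copy generator", "Write blog drafts", "Public product info",
           "low", "medium"),
    AITool("Lead scorer", "Rank inbound leads", "CRM contact records",
           "medium", "medium"),
]

for tool in rank_by_risk(inventory):
    print(f"{tool.risk_score():>2}  {tool.name}: {tool.purpose}")
```

The exact numbers matter less than the ordering: the tools at the top of the list are the ones to look at first.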

Figure: AI tool inventory and risk matrix

Define Clear Use Cases and Ethical Boundaries: For any new AI adoption, clearly articulate its intended purpose and the specific problem it solves. More importantly, establish basic ethical guidelines. What data is off-limits? What constitutes fair or unfair use? These aren’t abstract philosophical debates; they’re practical guardrails to prevent AI from generating biased content, making discriminatory decisions, or misrepresenting your brand.

Establish Data Quality and Privacy Protocols: AI’s effectiveness is directly tied to the quality and ethical handling of its input data. Prioritize ensuring that data fed into AI systems is accurate, relevant, and collected and stored in compliance with privacy regulations (e.g., GDPR, CCPA). Implement basic data anonymization or pseudonymization where appropriate. A data breach stemming from an AI tool is a direct and costly liability.
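One common way to pseudonymize a field before it reaches an AI tool is a keyed hash, sketched below with Python's standard `hmac` module. The field names, the sample record, and the placeholder key are assumptions for illustration; in practice the key would live in a secrets store, and free-text fields may still contain personal data that this approach does not touch.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    normalized = value.strip().lower()
    return hmac.new(secret_key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


def scrub_record(record: dict, pii_fields: set, secret_key: bytes) -> dict:
    """Return a copy of the record with the listed fields pseudonymized."""
    return {
        field: pseudonymize(str(value), secret_key) if field in pii_fields else value
        for field, value in record.items()
    }


# Placeholder key for the example only; never hard-code a real key.
key = b"replace-with-a-secret-from-your-vault"
customer = {"email": "Jane@Example.com", "plan": "pro", "notes": "asked about invoices"}

safe = scrub_record(customer, {"email"}, key)
print(safe)
```

Because the same input and key always give the same digest, records can still be joined or counted per customer without exposing the address itself. Pseudonymized data can be re-linked by anyone holding the key, so it should generally still be treated as personal data.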

Identifying shadow AI isn’t a one-time fix; it’s the start of an ongoing conversation that often reveals hidden organizational friction. Teams adopt these tools out of genuine need or perceived efficiency, and simply shutting them down without understanding the underlying problem can breed resentment and drive the behavior further underground. The real challenge lies in integrating these tools into a controlled environment without stifling the agility that led to their adoption. Overlooking this human element can lead to a continuous cat-and-mouse game, where governance is seen as a bottleneck rather than a safeguard, ultimately slowing legitimate innovation and increasing the hidden cost of non-compliance.

A critical, yet frequently overlooked, aspect is the ongoing maintenance and monitoring of AI systems. Models don’t remain static; they experience ‘drift’ as real-world data changes, potentially leading to degraded performance, biased outputs, or even non-compliance over time. What was ethical and accurate on day one might subtly shift over months, creating silent failures that accumulate. This erosion of quality and adherence can be far more damaging than an initial misstep, as it undermines trust in the AI’s reliability. When data quality issues persist, the cumulative effect isn’t just poor output; it’s a systemic loss of confidence in AI initiatives across the entire organization, making future adoption an uphill battle and wasting prior investment.
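A lightweight way to catch this kind of silent degradation is to track one quality metric over time and compare recent values against a baseline. The sketch below is a deliberately simple check, not a full drift-detection method: the metric (share of AI-drafted replies approved without edits), the sample numbers, and the two-standard-deviation threshold are all assumptions.

```python
from statistics import mean, stdev


def drift_alert(baseline, recent, threshold=2.0):
    """Return True when the recent average has moved more than `threshold`
    baseline standard deviations away from the baseline average."""
    base_mean = mean(baseline)
    base_sd = stdev(baseline)
    if base_sd == 0:
        # A perfectly flat baseline: any change at all counts as drift.
        return mean(recent) != base_mean
    distance = abs(mean(recent) - base_mean) / base_sd
    return distance > threshold


# Hypothetical weekly share of AI-drafted replies approved without edits.
baseline_weeks = [0.91, 0.93, 0.90, 0.92, 0.94, 0.92]
recent_weeks = [0.84, 0.82, 0.85]

if drift_alert(baseline_weeks, recent_weeks):
    print("Quality metric has shifted; schedule a review of this tool.")
```

A spreadsheet formula can do the same job. What matters is that someone looks at the trend on a schedule rather than waiting for a visible failure.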

What to Delay or Deprioritize Today

For small to mid-sized teams, the biggest trap in AI governance is trying to implement everything at once. Many advanced governance concepts, while valuable for large enterprises, are resource-intensive and offer diminishing returns for SMBs in the initial stages.

Deprioritize building complex, custom AI audit frameworks or sophisticated explainability models. While understanding why an AI made a decision is crucial in some high-stakes scenarios, for most marketing and operational AI tools used by SMBs, focusing on the outcome and performance is a more pragmatic starting point. Developing intricate audit trails or investing heavily in AI explainability tools requires specialized expertise and significant time, which most SMBs simply don’t have. Instead, focus on clear documentation of AI’s intended function and regular monitoring of its output for unexpected behavior. If an AI-powered content generator starts producing off-brand messaging, the immediate priority is to correct the output and adjust the input, not to dissect the neural network’s internal logic.
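Output monitoring of this kind does not need specialized tooling. As a minimal sketch, the function below screens generated copy against a short list of brand rules before a person reviews it; the banned phrases and the word limit are invented for the example and would come from your own brand guidelines.

```python
import re

# Hypothetical brand rules for illustration.
BANNED_PHRASES = ["guaranteed results", "risk-free", "best in the world"]
MAX_WORDS = 120


def review_output(text: str) -> list[str]:
    """Return a list of rule violations found in AI-generated copy."""
    issues = []
    lowered = text.lower()
    for phrase in BANNED_PHRASES:
        if phrase in lowered:
            issues.append(f"banned phrase: {phrase}")
    word_count = len(re.findall(r"\b\w+\b", text))
    if word_count > MAX_WORDS:
        issues.append(f"too long: {word_count} words (limit {MAX_WORDS})")
    return issues


draft = "Our tool offers Guaranteed Results, risk-free!"
for issue in review_output(draft):
    print(issue)
```

A check like this catches only the obvious cases, so it complements human review rather than replacing it.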

Similarly, avoid getting bogged down in over-engineering for hypothetical future regulations. While staying informed about evolving AI legislation is wise, dedicating substantial resources to anticipate and pre-emptively comply with every potential future rule can paralyze current efforts. Focus on robust general data privacy practices and ethical AI use, which will likely cover the spirit of most future regulations. Your immediate concern should be managing the AI risks present today with the tools you’re currently using.

This tendency to chase comprehensive frameworks often carries a significant hidden cost: lost opportunity. Every hour spent on a theoretical audit framework or a deep dive into explainability is an hour not spent on refining prompts, integrating AI into a workflow, or analyzing the actual business impact of an AI tool. For lean teams, this isn’t just a delay; it’s a diversion of finite resources from activities that would yield immediate, tangible value. The result is often ‘analysis paralysis,’ where the pursuit of perfect governance prevents any practical implementation, leaving teams with sophisticated plans but no real-world experience.

Furthermore, an excessive focus on pre-emptive, theoretical governance can inadvertently blind teams to the real, emergent risks that only become apparent once an AI system is live. No framework, however detailed, can fully anticipate every edge case or user interaction. The most critical governance insights often come from observing AI in action, identifying unexpected behaviors, and adapting quickly. When teams feel pressured to meet an enterprise-level ideal, they can become overly cautious, delaying valuable learning cycles and fostering a culture where the fear of making a mistake outweighs the imperative to innovate and learn from practical application. This creates a frustrating gap between the perceived ‘right way’ and the pragmatic ‘effective way’ for SMBs.

Practical Steps for Implementing Governance

Once you’ve prioritized the foundational elements, integrate these practical steps into your existing workflows. This isn’t about creating new departments, but embedding responsible AI practices into daily operations.

Assign Clear Ownership: Even if it’s one person, designate who is ultimately responsible for overseeing AI tool usage, data inputs, and outputs. This ensures accountability and a single point of contact for issues.

Document AI Usage: Maintain a simple, accessible record. For each AI tool, note its purpose, the data it uses, who operates it, and any known limitations or biases. This doesn’t need to be a complex database; a shared spreadsheet or internal wiki page can suffice.
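If a spreadsheet is the chosen format, the record can be as plain as a CSV file with one row per tool. The sketch below assumes five column names based on the items listed above and adds a small completeness check; the entries are made up for illustration.

```python
import csv
import io

# Assumed columns, taken from the items named in the text.
FIELDS = ["tool", "purpose", "data_used", "operator", "known_limitations"]


def write_log(entries, stream):
    """Write the documentation log as CSV to any file-like object."""
    writer = csv.DictWriter(stream, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(entries)


def missing_fields(entry):
    """List the columns that are absent or left blank for one entry."""
    return [field for field in FIELDS if not str(entry.get(field, "")).strip()]


# Illustrative entries only.
entries = [
    {"tool": "Copy generator", "purpose": "Blog drafts",
     "data_used": "Public product info", "operator": "Marketing lead",
     "known_limitations": "Can invent product features"},
    {"tool": "Lead scorer", "purpose": "Rank inbound leads",
     "data_used": "CRM contact records", "operator": "Sales ops",
     "known_limitations": ""},
]

buffer = io.StringIO()
write_log(entries, buffer)
print(buffer.getvalue())

for entry in entries:
    gaps = missing_fields(entry)
    if gaps:
        print(f"{entry['tool']}: please fill in {', '.join(gaps)}")
```

To save the log to disk instead of printing it, pass a file opened with `open("ai_tools.csv", "w", newline="")` in place of the `StringIO` buffer.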

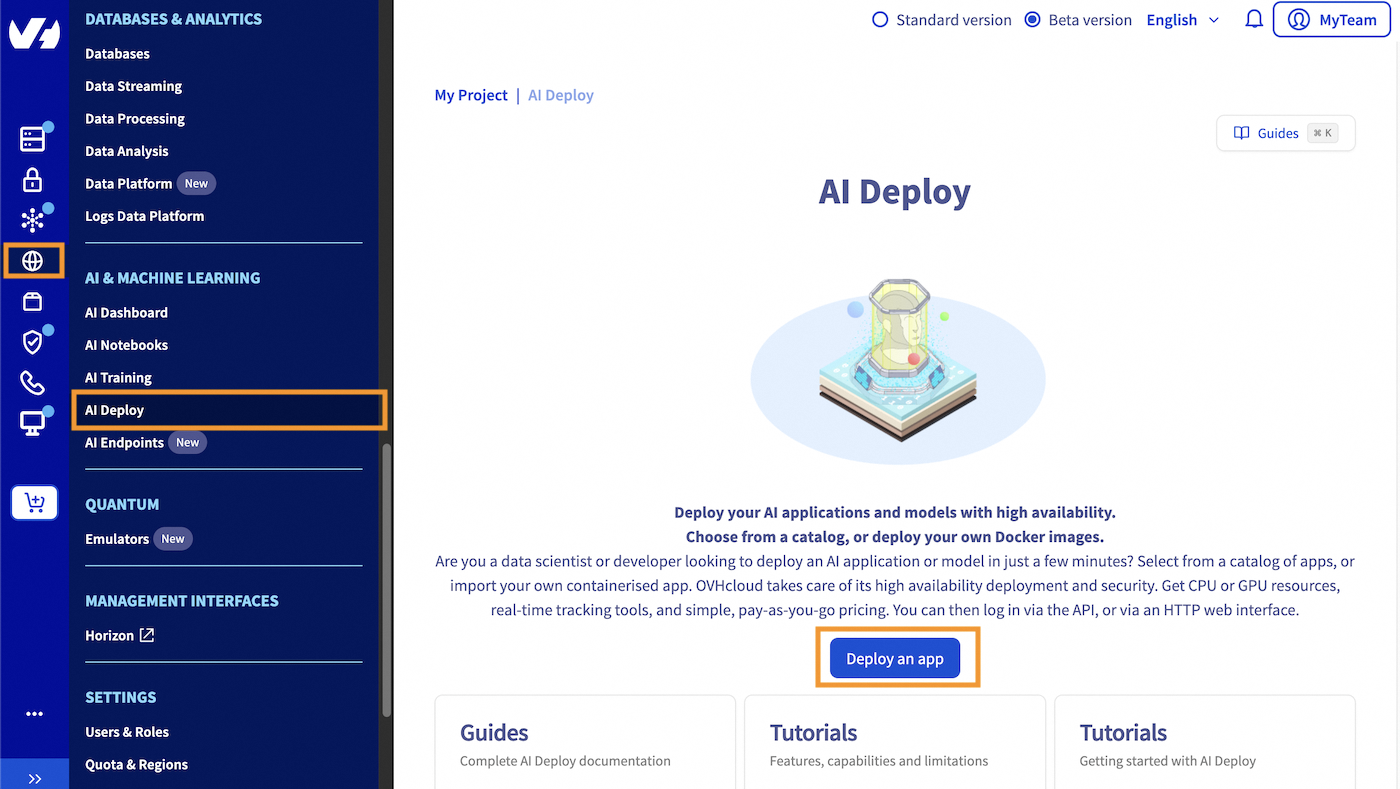

Figure: Simple AI tool documentation log

Regular Review and Feedback Loops: Schedule periodic checks (e.g., monthly or quarterly) to review AI tool performance. Are they still meeting their intended purpose? Are there any unintended side effects? Encourage users to report issues or unexpected behaviors. This feedback is crucial for iterative improvement.
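Periodic checks are easy to let slip, so it can help to record when each tool was last reviewed and flag the overdue ones. The sketch below assumes a monthly cadence of 30 days and a quarterly cadence of 90 days; the tool names and dates are invented for the example.

```python
from datetime import date, timedelta

# Assumed interval lengths for the two cadences mentioned in the text.
REVIEW_INTERVAL_DAYS = {"monthly": 30, "quarterly": 90}


def overdue_reviews(tools, today):
    """Return (tool name, days overdue) pairs, most overdue first."""
    overdue = []
    for tool in tools:
        interval = timedelta(days=REVIEW_INTERVAL_DAYS[tool["cadence"]])
        due = tool["last_reviewed"] + interval
        if due < today:
            overdue.append((tool["name"], (today - due).days))
    return sorted(overdue, key=lambda item: item[1], reverse=True)


# Illustrative entries only.
review_log = [
    {"name": "Chat assistant", "cadence": "monthly", "last_reviewed": date(2024, 4, 1)},
    {"name": "Copy generator", "cadence": "quarterly", "last_reviewed": date(2024, 4, 15)},
    {"name": "Lead scorer", "cadence": "quarterly", "last_reviewed": date(2024, 1, 15)},
]

for name, days in overdue_reviews(review_log, today=date(2024, 6, 1)):
    print(f"{name}: review overdue by {days} days")
```

Higher-risk tools can be given the shorter cadence, so review effort follows the risk ranking rather than being spread evenly.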

Training and Awareness: Educate your team on responsible AI use. This includes understanding the limitations of AI, recognizing potential biases, and knowing when human oversight is critical. Simple internal guidelines can go a long way in fostering a culture of responsible AI.

Building Trust Through Transparent AI Practices

Effective AI governance isn’t just about avoiding penalties; it’s about building trust. Internally, clear guidelines empower employees to use AI confidently and responsibly. Externally, transparent practices reassure customers and partners that their data is handled ethically and that your AI-driven interactions are fair and reliable. This trust is a significant competitive advantage, especially as AI becomes more pervasive. By demonstrating a commitment to responsible AI, even with limited resources, SMBs can differentiate themselves and strengthen their brand reputation.

Focus on communicating your commitment to ethical AI use in your privacy policies or terms of service where relevant. While you don’t need to publish detailed technical specifications, a clear statement on how you approach AI responsibility can significantly enhance customer confidence. Google’s published AI principles are one public example of such a statement.
