The Non-Negotiable Reality of AI Data Security

In 2026, AI tools are deeply integrated into marketing workflows, from content generation to campaign optimization. However, every piece of data you feed into an AI model, especially one run by a third-party vendor, carries inherent risk. For SMBs, a data breach or compliance failure isn’t just a PR headache; it can be an existential threat. What’s at stake is customer trust, exposure to regulatory fines (such as those under GDPR or CCPA), and operational disruption. Your primary concern isn’t just what an AI tool can do, but what it does with your data.

The reality is that most SMBs lack dedicated cybersecurity teams. This means marketing practitioners must become the first line of defense. Understanding the basics of data handling, privacy policies, and vendor security postures isn’t a nice-to-have; it’s a core competency for anyone leveraging AI in marketing today.

Prioritizing Secure AI Tool Selection

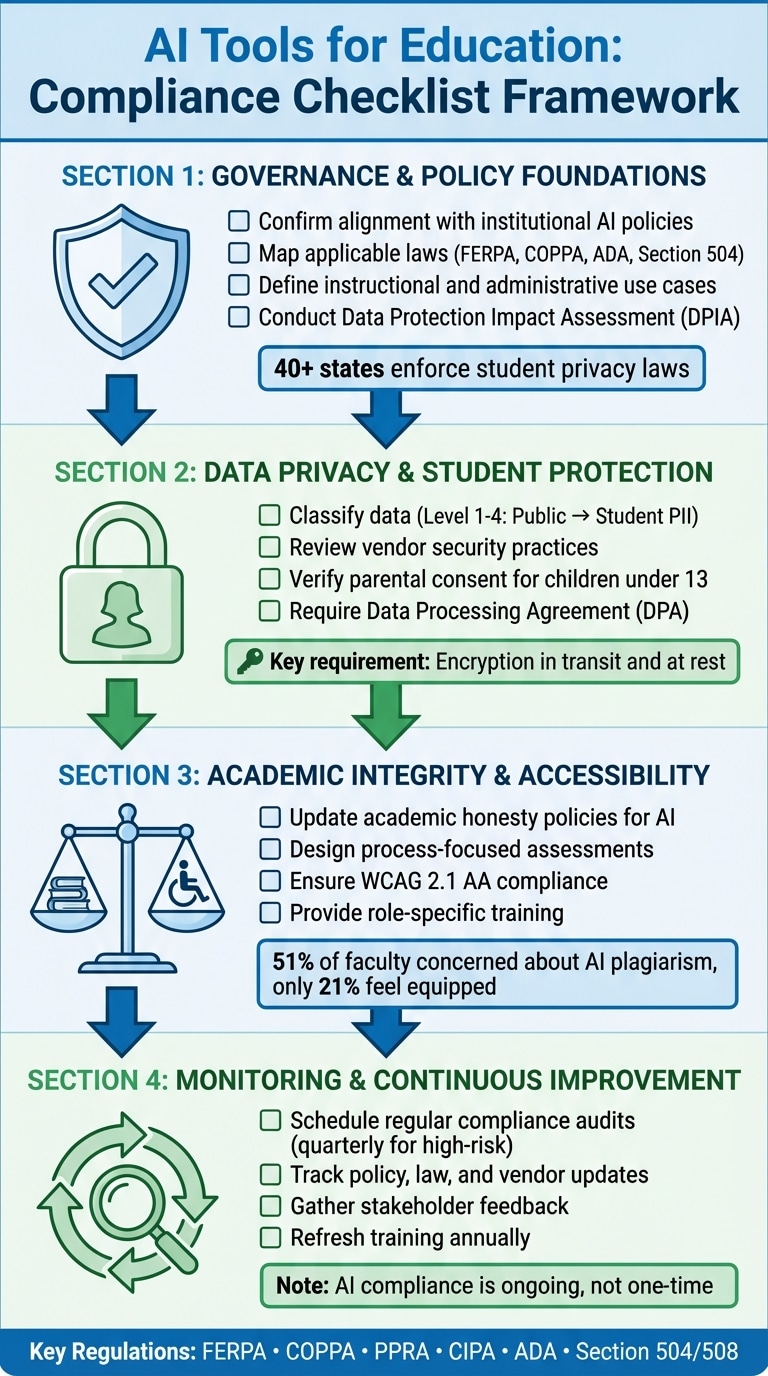

When evaluating new AI tools, your first filter should be security, not just features. This is where many teams make critical mistakes, seduced by shiny new capabilities without scrutinizing the underlying data practices. For SMBs, the focus must be on tools designed with security and privacy in mind from the ground up.

- Vendor Vetting is Paramount: Don’t just read the marketing copy. Dig into their security certifications (e.g., SOC 2 Type 2, ISO 27001), data encryption standards (in transit and at rest), and incident response plans. If a vendor is vague or unwilling to provide details, that’s a red flag.

- Understand Data Residency and Processing: Where will your data be stored and processed? For businesses operating in regions with strict data sovereignty laws, this is non-negotiable. Ensure the vendor’s infrastructure aligns with your compliance requirements.

- Review Data Usage Policies: Crucially, understand how the AI vendor uses your data. Do they use it to train their models? Is it anonymized? Can you opt out? Many free or low-cost tools might leverage your data in ways that compromise your competitive edge or customer privacy.

- Focus on Established Providers: While new startups offer innovative solutions, established players often have more mature security infrastructures and compliance teams. For core marketing functions involving sensitive data, prioritize stability and proven security over bleeding-edge features from unproven entities.
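To make vendor vetting repeatable, the questions above can be recorded per vendor and turned into a list of red flags. This is a minimal Python sketch, not a standard questionnaire: the `VendorProfile` fields, the `RECOGNIZED_CERTS` set, the `red_flags` function, and the vendor "ExampleAI" are all hypothetical, and unknown answers deliberately default to the cautious value (for example, assuming the vendor trains on your data until told otherwise).

```python
from dataclasses import dataclass, field

# Hypothetical vendor profile; field names are illustrative, not from a standard questionnaire.
# Unknown answers default to the cautious value (e.g. assume the vendor trains on your data).
@dataclass
class VendorProfile:
    name: str
    certifications: set[str] = field(default_factory=set)
    encrypts_in_transit: bool = False
    encrypts_at_rest: bool = False
    has_incident_response_plan: bool = False
    processing_regions: set[str] = field(default_factory=set)
    trains_on_customer_data: bool = True
    training_opt_out: bool = False

# Certifications this sketch treats as evidence of a mature security program (an assumption).
RECOGNIZED_CERTS = {"SOC 2 Type 2", "ISO 27001"}

def red_flags(vendor: VendorProfile, allowed_regions: set[str]) -> list[str]:
    """List vetting red flags for one vendor; an empty list is not proof of security."""
    flags = []
    if not vendor.certifications & RECOGNIZED_CERTS:
        flags.append("no recognized security certification")
    if not (vendor.encrypts_in_transit and vendor.encrypts_at_rest):
        flags.append("encryption in transit and at rest not both confirmed")
    if not vendor.has_incident_response_plan:
        flags.append("no documented incident response plan")
    if not vendor.processing_regions:
        flags.append("data processing regions not disclosed")
    elif vendor.processing_regions - allowed_regions:
        outside = sorted(vendor.processing_regions - allowed_regions)
        flags.append(f"processes data outside allowed regions: {outside}")
    if vendor.trains_on_customer_data and not vendor.training_opt_out:
        flags.append("trains models on customer data with no opt-out")
    return flags

# Usage with a hypothetical vendor that processes data in the EU and the US.
vendor = VendorProfile(
    name="ExampleAI",
    certifications={"SOC 2 Type 2"},
    encrypts_in_transit=True,
    encrypts_at_rest=True,
    has_incident_response_plan=True,
    processing_regions={"EU", "US"},
)
print(red_flags(vendor, allowed_regions={"EU"}))
```

An empty result only means none of these particular checks failed; it does not certify the vendor as secure.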

Even after a rigorous selection process, the work isn’t truly done. Security isn’t a static state; it’s an ongoing operational burden that many small teams underestimate. The initial vetting gives you a baseline, but vendor policies can change, new vulnerabilities emerge, and your team’s usage patterns evolve. Overlooking the need for continuous monitoring, periodic re-evaluation of data flows, and regular access audits can quickly erode any initial security gains, turning a seemingly secure choice into a liability over time.
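Periodic re-evaluation is easier to sustain when overdue reviews surface on their own. A minimal Python sketch, assuming a hypothetical register of AI tools and their last security review dates; the 90-day interval is an assumed default, not a recommendation, and should match your own risk appetite.

```python
from datetime import date, timedelta

def overdue_reviews(last_reviewed: dict[str, date], today: date, interval_days: int = 90) -> list[str]:
    """Return tools not re-evaluated within interval_days, oldest review first."""
    cutoff = today - timedelta(days=interval_days)
    overdue = sorted((reviewed, tool) for tool, reviewed in last_reviewed.items() if reviewed < cutoff)
    return [tool for _, tool in overdue]

# Hypothetical register: tool name -> date of its last security re-evaluation.
register = {
    "copy-assistant": date(2026, 1, 5),
    "ad-optimizer": date(2025, 9, 1),
    "crm-scorer": date(2025, 11, 20),
}
print(overdue_reviews(register, today=date(2026, 3, 1)))
```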

Another common pitfall is the organic adoption of unvetted tools by individual team members. Under pressure to innovate or find quick solutions, practitioners often experiment with new AI services that haven’t passed through the official security gauntlet. This “shadow IT” creates significant, unmanaged risk. It usually stems from overly restrictive internal processes, a lack of readily available approved alternatives, or both. When internal approvals are slow, frustrated teams bypass security protocols, and sensitive business data ends up in environments with unknown security postures and data usage policies.

For teams with limited bandwidth, it’s easy to get bogged down trying to apply enterprise-grade security scrutiny to every single AI tool, regardless of its function or the data it handles. A pragmatic approach means deprioritizing exhaustive security reviews for tools that process only publicly available, non-sensitive data, or those used for purely internal, non-critical tasks. Instead, allocate your finite resources to the tools that touch customer PII, proprietary business strategies, or financial data. Trying to secure everything equally often results in securing nothing effectively, as critical risks get diluted among less impactful ones.
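A simple rule can decide how much review effort each tool deserves. This Python sketch assumes hypothetical data-category labels: any tool that touches customer PII, proprietary strategy, or financial data, or that supports a business-critical task, gets the full review, and everything else gets a lighter check.

```python
from enum import Enum

class ReviewTier(Enum):
    FULL = "full security review"
    LIGHT = "light check: vendor reputation, public security statements, data minimization"

# Data categories that warrant full scrutiny; the labels are illustrative.
SENSITIVE_CATEGORIES = {"customer_pii", "proprietary_strategy", "financial"}

def review_tier(data_categories: set[str], business_critical: bool = False) -> ReviewTier:
    """Spend full review effort only where sensitive data or critical workflows are involved."""
    if business_critical or data_categories & SENSITIVE_CATEGORIES:
        return ReviewTier.FULL
    return ReviewTier.LIGHT

print(review_tier({"public_content"}).value)
print(review_tier({"public_content", "customer_pii"}).value)
```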

Immediate Actions: What to Implement Today

You don’t need an enterprise-level budget to significantly improve your AI data security posture. Here are practical steps you can take right now:

- Data Minimization: Only feed AI tools the absolute minimum data required for the task. If an AI content generator needs a topic and a few keywords, don’t give it your entire customer database. This reduces the attack surface.

- Access Control & Permissions: Implement strict role-based access. Not everyone on your marketing team needs full access to every AI tool or the data within it. Limit permissions to what each role actually needs, review them regularly, and revoke access as soon as an employee leaves.

- Anonymization and Pseudonymization: Where possible, anonymize or pseudonymize sensitive customer data before feeding it into AI tools. For example, replace plain-text email addresses with keyed hashes, or remove personally identifiable information (PII) from datasets used for trend analysis. Keep in mind that pseudonymized data can be linked back to individuals by whoever holds the key, so treat it as sensitive.

- Employee Training: Your team is your strongest or weakest link. Run regular, practical training sessions on data security best practices for AI tools. Cover which kinds of data must never be pasted into an AI tool, along with phishing awareness, strong password policies, and the importance of questioning suspicious data requests.

- Secure API Integrations: If you’re integrating AI tools via APIs, keep API keys out of source code and shared documents (store them in environment variables or a secrets manager), rotate them regularly, and monitor API usage for anomalies.
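The data minimization and pseudonymization items above can be combined into one preparation step that runs before any record leaves your systems. This minimal Python sketch assumes a hypothetical field allowlist (`ALLOWED_FIELDS`) and hypothetical helper names; it uses HMAC-SHA256 from the standard library as the keyed hash, because a plain, unkeyed hash of an email address can often be reversed simply by hashing candidate addresses and comparing.

```python
import hashlib
import hmac

# Hypothetical allowlist: the only fields a trend-analysis prompt needs.
ALLOWED_FIELDS = {"email", "segment", "last_purchase_category"}

def pseudonymize_email(email: str, secret_key: bytes) -> str:
    """Keyed hash (HMAC-SHA256) of a normalized email; the key never goes to the AI tool."""
    normalized = email.strip().lower().encode("utf-8")
    return hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()

def prepare_record(record: dict, secret_key: bytes) -> dict:
    """Drop every field not on the allowlist, then swap the email for a pseudonym."""
    minimized = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    if "email" in minimized:
        minimized["customer_id"] = pseudonymize_email(minimized.pop("email"), secret_key)
    return minimized
```

Because the same key always yields the same pseudonym, records can still be grouped per customer in the AI tool’s output and re-linked internally, while names, phone numbers, and raw emails never leave your systems.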
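A minimal Python sketch of the access-control and API-key items in the list above. The role names, tool names, and environment variable name are hypothetical, and the 90-day rotation window is an assumed policy rather than a standard; the point is that access depends on both role and current employment, and that keys are read from the environment rather than hard-coded.

```python
import os
from datetime import date

# Hypothetical role-to-tool map: each role gets only the AI tools it needs.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "content_writer": {"copy-assistant"},
    "analyst": {"copy-assistant", "crm-scorer"},
}

def can_use(user: str, role: str, tool: str, active_users: set[str]) -> bool:
    """Allow access only for current staff whose role includes the tool."""
    return user in active_users and tool in ROLE_PERMISSIONS.get(role, set())

def load_api_key(env_var: str) -> str:
    """Read an API key from the environment instead of hard-coding it in source."""
    key = os.environ.get(env_var)
    if not key:
        raise RuntimeError(f"{env_var} is not set")
    return key

def needs_rotation(issued_on: date, today: date, max_age_days: int = 90) -> bool:
    """True once a key reaches the rotation window (90 days is an assumed policy)."""
    return (today - issued_on).days >= max_age_days
```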

Beyond these direct controls, the shadow IT problem deserves a second look, because with AI it is both more common and harder to see. Under pressure to deliver, team members may paste proprietary or sensitive data into readily available public AI models for quick tasks, sending it to systems outside your control. This “shadow AI” bypasses every security protocol you’ve implemented and creates an unmonitored data leak risk that is easy to overlook until it’s too late.

Implementing strict security measures inevitably introduces friction into workflows. While necessary, don’t get bogged down trying to achieve theoretical “perfect” data isolation or implementing enterprise-grade data governance frameworks that your team can’t realistically maintain. For most small to mid-sized businesses, the immediate priority should be practical risk reduction and visibility, not exhaustive, resource-intensive compliance. Over-engineering security at this stage can lead to team frustration and workarounds, ironically increasing your risk.

This brings us to a critical, often neglected area: audit trails and monitoring. Securing the data going into AI tools is not enough; you also need to know how that data is used, who accesses it, and what outputs are generated. Without logging and some form of anomaly detection, you’re flying blind. If a security incident does occur, missing audit logs make it far harder to investigate, contain, and recover, and can turn a manageable breach into a much more damaging one.
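Logging does not have to start with enterprise tooling. A minimal Python sketch, assuming a hypothetical JSON-lines log that your team or integration writes whenever data is sent to an AI tool: each entry records who used which tool, for what, and how many records were involved, and a crude volume threshold (1,000 records is an arbitrary assumption) stands in for real anomaly detection.

```python
import json
from collections import Counter
from datetime import datetime, timezone
from typing import Optional

def audit_entry(user: str, tool: str, action: str, records: int,
                when: Optional[datetime] = None) -> str:
    """Serialize one AI tool interaction as a JSON line: who, which tool, what, how much data."""
    if when is None:
        when = datetime.now(timezone.utc)
    return json.dumps({"ts": when.isoformat(), "user": user, "tool": tool,
                       "action": action, "records": records})

def users_over_threshold(log_lines: list[str], threshold: int = 1000) -> list[str]:
    """Crude volume check: users whose total records sent across the log exceed threshold."""
    totals = Counter()
    for line in log_lines:
        entry = json.loads(line)
        totals[entry["user"]] += entry["records"]
    return sorted(user for user, total in totals.items() if total > threshold)
```

Append-only JSON lines are easy to search during an investigation, and the threshold check can later be replaced with whatever monitoring your tools or vendors provide.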

What to Deprioritize (or Skip Entirely) Right Now

For small to mid-sized teams, resources are finite. Trying to implement every theoretical security measure will lead to burnout and ineffective execution. Focus your efforts where they have the most impact. Today, you should largely deprioritize:

- Building Custom AI Models from Scratch for Non-Core Tasks: Unless your core business relies on proprietary AI models, investing significant time and budget into building and securing custom AI for tasks like basic content generation or social media scheduling is a misallocation of resources. The security overhead, maintenance, and expertise required are substantial. Instead, leverage secure, off-the-shelf solutions from reputable vendors.

- Extensive In-House Security Audits for Every Free Tool: While vigilance is good, attempting to perform deep security audits on every free or low-cost AI tool you trial is impractical. Focus your audit efforts on tools that handle your most sensitive data or are deeply integrated into your core systems. For less critical tools, rely on vendor reputation, public security statements, and your data minimization strategy.

- Over-engineering Data Governance for Non-Sensitive Data: While all data deserves respect, don’t apply the same stringent governance protocols to public domain data or non-sensitive marketing insights as you would to customer PII or financial data. Tailor your security efforts to the sensitivity level of the data involved.

The goal is pragmatic security: sufficient protection without crippling your ability to innovate and execute. Focus on the big wins first.

Building a Secure AI Workflow

Security isn’t a one-time setup; it’s an ongoing process integrated into your daily operations. Think of it as a continuous loop:

- Assess: Before adopting a new AI tool, assess its data security implications.

- Implement: Configure the tool with security best practices (data minimization, access controls).

- Monitor: Regularly review access logs, data usage, and vendor security updates.

- Adapt: Adjust your practices as new threats emerge or as your data needs change.

Consider creating a simple internal policy document for AI tool usage. This doesn’t need to be a legal tome; a one-page guide outlining approved tools, data handling rules, and reporting procedures for suspicious activity can significantly reduce risk. This document serves as a quick reference and ensures consistency across your team.
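The one-page policy can also be kept in a machine-checkable form, so a planned use of a tool can be checked against it before any data is shared. In this minimal Python sketch, `AI_POLICY`, the tool names, and the data-category labels are all hypothetical; any tool missing from the approved list is flagged, which also helps surface shadow AI use.

```python
# Hypothetical policy as data: approved tools and the data categories each may receive.
AI_POLICY: dict[str, set[str]] = {
    "copy-assistant": {"public_content", "product_info"},
    "crm-scorer": {"public_content", "pseudonymized_customer_data"},
}

def check_usage(tool: str, data_categories: set[str]) -> list[str]:
    """Return policy violations for a planned use; an empty list means it is allowed."""
    if tool not in AI_POLICY:
        return [f"{tool} is not an approved tool"]
    disallowed = data_categories - AI_POLICY[tool]
    return [f"{tool} may not receive {category}" for category in sorted(disallowed)]

print(check_usage("copy-assistant", {"public_content", "customer_pii"}))
```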

Navigating the Evolving AI Security Landscape

The AI landscape is dynamic. New tools, capabilities, and threats emerge constantly. For SMB marketers, staying agile means:

- Subscribing to Security Newsletters: Follow reputable cybersecurity news sources and AI ethics organizations. This keeps you informed about new vulnerabilities or best practices without requiring constant, deep research.

- Leveraging Vendor Updates: Most reputable AI tool providers regularly release security updates and feature enhancements. Make sure your team knows about them and promptly acts on any that require changes on your side, such as new admin or privacy settings.

- Peer Learning: Engage with other marketing practitioners, perhaps in online communities or local meetups. Sharing experiences and solutions for AI security challenges can provide invaluable, real-world insights.

- Regular Policy Review: At least annually, review your internal AI usage policy and security protocols. What was sufficient last year might not be enough today. This doesn’t require a complete overhaul, but rather a check-in to ensure relevance.

Responsible innovation with AI means accepting that security is an ongoing journey, not a destination. By embedding pragmatic security practices into your marketing operations, you can harness the power of AI while protecting your business and your customers.
