Navigating AI Responsibly in Your Marketing Operations

Adopting AI in marketing today isn’t just about leveraging new features; it’s about doing so with a clear understanding of your ethical obligations and practical risks. For small to mid-sized teams, often stretched thin with limited budgets and headcount, the real challenge lies in integrating AI ethically and effectively without overcomplicating existing workflows. This article provides a pragmatic roadmap, focusing on what truly matters: building customer trust, mitigating reputational risks, and ensuring your AI initiatives contribute to sustainable growth. We’ll cut through the theoretical noise to offer actionable insights on prioritizing responsible AI practices, identifying the most impactful tools, and understanding where to focus your limited resources.

Why Responsible AI Isn’t Optional for SMBs Anymore

Some might view responsible AI as a concern primarily for large enterprises, but that’s a miscalculation. For small to mid-sized businesses, reputation is everything. A single misstep in how AI handles customer data or targets audiences can erode trust, leading to customer churn and significant brand damage that’s hard to recover from. Beyond reputation, data privacy regulation such as GDPR and CCPA continues to evolve. GDPR has no general exemption for small businesses, and even where a law applies only above revenue or data-volume thresholds, as CCPA does, its principles are a sensible baseline for any team. Ignoring these aspects isn’t just unethical; it’s a direct business risk. Our experience shows that proactive attention to responsible AI builds stronger customer relationships and provides a competitive edge in a crowded market.

What’s often overlooked is the compounding operational cost of fixing these issues after they arise. It’s not just about potential fines or lost customers; it’s the internal drain of resources spent on incident response, re-engineering systems, and rebuilding data pipelines to address biases that could have been mitigated upfront. This creates significant technical debt, making future AI initiatives harder, slower, and riskier, effectively stifling innovation rather than accelerating it.

Another subtle but critical failure mode is model decay. An AI system that is responsible and fair at launch can slowly drift into problematic territory as real-world data evolves. Customer demographics shift, market trends change, and even the language used in interactions can subtly alter the model’s behavior over time. Without continuous monitoring and a robust process for retraining or recalibrating, a seemingly benign AI can quietly start making biased decisions or violating privacy principles, often going unnoticed until a significant negative event forces an investigation.
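A lightweight way to catch this drift without specialized tooling is to compare how one of the model’s inputs is distributed today against how it was distributed at launch. The sketch below uses the Population Stability Index (PSI), a common drift statistic, on a single categorical field; the age bands, counts, and 0.1 alert threshold are illustrative assumptions rather than settings from any particular platform.

```python
import math
from collections import Counter

def population_stability_index(baseline, current, eps=1e-6):
    """Population Stability Index (PSI) between two categorical samples.

    PSI = sum over categories of (cur_share - base_share) * ln(cur_share / base_share).
    eps floors each share so a category missing from one sample does not
    cause a division by zero or log(0).
    """
    base_counts, cur_counts = Counter(baseline), Counter(current)
    psi = 0.0
    for category in set(base_counts) | set(cur_counts):
        b = max(base_counts[category] / len(baseline), eps)
        c = max(cur_counts[category] / len(current), eps)
        psi += (c - b) * math.log(c / b)
    return psi

# Hypothetical example: age bands of contacts scored at launch vs. this month.
baseline = ["18-24"] * 20 + ["25-34"] * 50 + ["35+"] * 30
current = ["18-24"] * 40 + ["25-34"] * 40 + ["35+"] * 20

DRIFT_THRESHOLD = 0.1  # a commonly cited rule of thumb; tune for your own data
psi = population_stability_index(baseline, current)
if psi > DRIFT_THRESHOLD:
    print(f"PSI={psi:.3f}: the input mix has shifted; review the model's outputs")
```

A rising PSI does not prove the model has become unfair, but it is a cheap, early signal that the data has moved away from what the model was built on and that its outputs deserve a closer look.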

For small teams, the theoretical ideal of “human in the loop” often clashes with practical realities. The sheer volume of AI-generated content, recommendations, or decisions can quickly overwhelm limited human resources. What starts as a diligent review process can devolve into oversight fatigue, where critical errors are missed due to the pressure to process outputs quickly. This isn’t a failure of intent, but a consequence of underestimating the ongoing human effort required to truly ensure responsible AI, leading to a false sense of security.
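A quick back-of-the-envelope calculation makes that ongoing effort visible before it turns into fatigue. The sketch assumes a fixed average review time per output; the figures (400 outputs a week, 3 minutes each, 5 reviewer hours available) are illustrative, not benchmarks.

```python
def review_hours_needed(outputs_per_week, minutes_per_review):
    """Hours per week needed to give every AI output a human review."""
    return outputs_per_week * minutes_per_review / 60

def review_capacity_gap(outputs_per_week, minutes_per_review, reviewer_hours_per_week):
    """Hours of review work per week that nobody has time for (0.0 if covered)."""
    needed = review_hours_needed(outputs_per_week, minutes_per_review)
    return max(0.0, needed - reviewer_hours_per_week)

# Illustrative numbers only.
needed = review_hours_needed(400, 3)  # 20.0 hours
gap = review_capacity_gap(400, 3, 5)
print(f"Review needs {needed:.1f} h/week; {gap:.1f} h/week is uncovered")
```

Whenever the gap is above zero, something gives: reviews get rushed or outputs ship unchecked, which is where the false sense of security creeps in.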

Prioritizing Core Pillars for Practical Implementation

For teams with limited resources, a comprehensive AI ethics framework is often overkill. Instead, focus on these foundational pillars that offer the highest return on investment for risk mitigation and trust-building:

- Data Privacy and Security: This is non-negotiable. Ensure explicit consent for data collection, transparent data usage policies, and robust security measures for stored customer information. Your CRM or marketing automation platform should be your first line of defense here.

- Human Oversight and Accountability: AI should be an assistant, not an autonomous decision-maker. Implement clear checkpoints where human judgment reviews AI outputs, especially for critical customer-facing content or targeting decisions.

- Bias Awareness and Mitigation: While complex bias detection is resource-intensive, simple awareness is crucial. Regularly review AI-generated audience segments or content for unintended biases that could exclude or misrepresent certain customer groups. Ask: “Does this feel fair and inclusive?”
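The oversight and accountability pillar can be enforced as a simple rule in whatever workflow publishes AI output. The sketch below is tool-agnostic and hypothetical: `AIOutput` and `can_publish` are illustrative names, not features of any marketing platform. It blocks customer-facing items until a named human approves them, which also records who is accountable for each decision.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class AIOutput:
    """An AI-generated asset or targeting decision awaiting review (hypothetical)."""
    content: str
    customer_facing: bool
    approved_by: Optional[str] = None  # accountable human reviewer, once approved

    def approve(self, reviewer: str) -> None:
        # Refuse anonymous approvals so every decision has a named owner.
        if not reviewer.strip():
            raise ValueError("approval must name an accountable reviewer")
        self.approved_by = reviewer

def can_publish(item: AIOutput) -> bool:
    # Customer-facing items need a named approval; internal drafts pass through.
    return item.approved_by is not None or not item.customer_facing

email = AIOutput("Spring sale email draft", customer_facing=True)
print(can_publish(email))  # False until someone approves it
email.approve("Dana (Marketing Lead)")
print(can_publish(email))  # True
```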

What often gets overlooked, however, are the practical friction points and downstream consequences of these pillars. For instance, while platforms offer robust data security features, relying solely on them without internal process rigor can create a false sense of security. The true vulnerability often lies in human error or inconsistent internal data handling practices, which can lead to compliance gaps or even breaches long after initial implementation. This isn’t about platform failure; it’s about the operational disconnect between tool capabilities and team execution.
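One way to narrow the gap between tool capabilities and team execution is to route every export to an AI tool through a single shared consent filter, so the check happens the same way no matter who runs it. The sketch assumes your CRM export includes a boolean consent field; the `Contact` class and the `ai_processing_consent` field name are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Contact:
    email: str
    # Hypothetical field mirroring the explicit-consent flag stored in your CRM.
    ai_processing_consent: bool

def split_by_consent(contacts):
    """Return (allowed, excluded) so exclusions are counted, not silently lost.

    Anything other than an explicit True (None, a missing value mapped to
    None, a "yes" string) counts as no consent, so the filter fails closed.
    """
    allowed = [c for c in contacts if c.ai_processing_consent is True]
    excluded = [c for c in contacts if c.ai_processing_consent is not True]
    return allowed, excluded

contacts = [
    Contact("ana@example.com", True),
    Contact("ben@example.com", False),
    Contact("cy@example.com", True),
]
allowed, excluded = split_by_consent(contacts)
print(f"Sending {len(allowed)} contacts to the AI tool; {len(excluded)} excluded")
```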

Similarly, “human oversight” can quickly become a bottleneck or a superficial rubber stamp under real-world deadlines. Teams, already stretched thin, may feel immense pressure to approve AI outputs quickly, especially when the AI consistently performs “well enough.” This decision pressure can lead to a desensitization to subtle errors or biases, effectively nullifying the intent of human review. The consequence is often a slow erosion of critical thinking, where the AI’s output is accepted as fact rather than a suggestion.
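Rubber-stamping tends to leave a trace in review logs: near-total approval combined with very little time per item. The sketch below assumes your review tool can export, per reviewer, whether each item was approved and how many seconds were spent on it; the 98% approval and 20-second thresholds are illustrative assumptions, not industry standards.

```python
from statistics import median

def looks_like_rubber_stamping(decisions, max_approval_rate=0.98, min_median_seconds=20):
    """Flag one reviewer's log if nearly everything is approved, very quickly.

    decisions: list of (approved, seconds_spent) tuples.
    Both thresholds are illustrative defaults; calibrate them for your team.
    """
    if not decisions:
        return False
    approval_rate = sum(1 for approved, _ in decisions if approved) / len(decisions)
    median_seconds = median(seconds for _, seconds in decisions)
    return approval_rate > max_approval_rate and median_seconds < min_median_seconds

fast_reviewer = [(True, 6)] * 120
careful_reviewer = [(True, 45)] * 110 + [(False, 90)] * 10
print(looks_like_rubber_stamping(fast_reviewer))     # True
print(looks_like_rubber_stamping(careful_reviewer))  # False
```

A flag is a prompt to revisit workload and deadlines, since decision pressure rather than intent is the usual cause.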

The impact of unaddressed bias also extends beyond immediate ethical concerns. Even subtle biases, if left unchecked, can lead to significant market inefficiencies. For example, consistently mis-segmenting or alienating a valuable customer demographic due to biased AI outputs doesn’t just feel unfair; it directly translates to missed revenue opportunities and a gradual erosion of brand relevance within those segments. These are not abstract risks; they are tangible business costs that accumulate over time, often unnoticed until they become systemic.
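To catch mis-segmentation before it becomes systemic, compare how often each group in your eligible audience ends up in an AI-suggested segment. The sketch below borrows the “four-fifths” heuristic from US employment-selection guidelines, flagging any group selected at less than 80% of the rate of the most-selected group. Here that threshold is only a screening rule of thumb, not a legal standard for marketing, and the group labels and counts are made up.

```python
from collections import Counter

def selection_rate_ratios(audience_groups, segment_groups):
    """Each group's selection rate divided by the highest group's rate.

    audience_groups: one group label per contact in the full eligible audience.
    segment_groups: one group label per contact the AI placed in the segment
    (assumed to be a subset of the audience).
    """
    audience_counts = Counter(audience_groups)
    segment_counts = Counter(segment_groups)
    rates = {g: segment_counts[g] / n for g, n in audience_counts.items()}
    highest = max(rates.values())
    if highest == 0:
        return {g: 0.0 for g in rates}  # empty segment: nothing to compare
    return {g: rate / highest for g, rate in rates.items()}

# Illustrative data: two equally sized groups, one selected half as often.
ratios = selection_rate_ratios(["A"] * 500 + ["B"] * 500, ["A"] * 200 + ["B"] * 100)
flagged = [g for g, r in ratios.items() if r < 0.8]  # four-fifths heuristic
print(ratios, flagged)
```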

Practical Tools and Workflows to Implement Today

You don’t need specialized AI ethics software to start. Many of the tools you already use can support responsible AI practices:

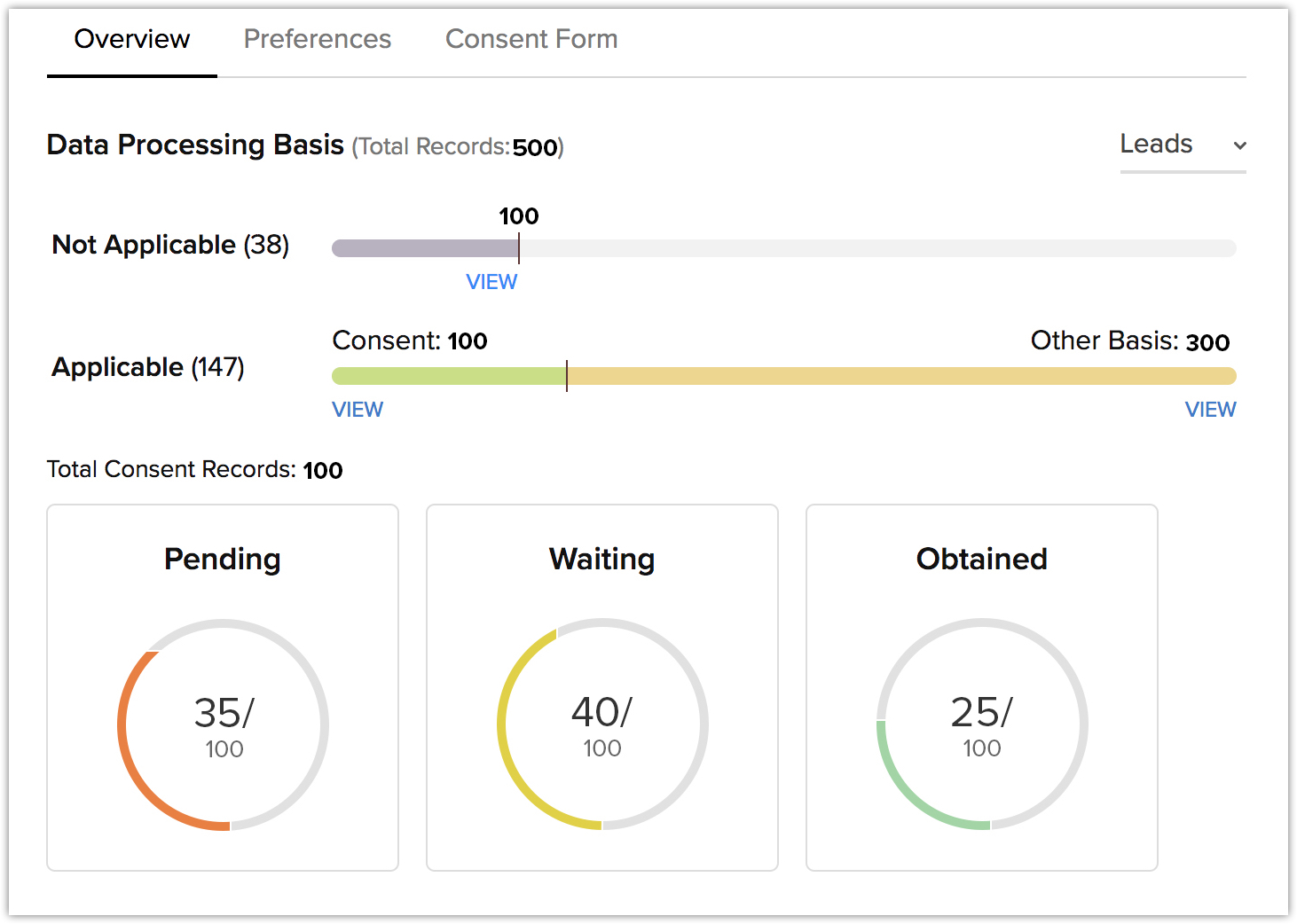

- Leverage CRM Consent Management: Most modern CRM and marketing automation platforms (like HubSpot or Salesforce Marketing Cloud) offer robust features for managing customer consent, preferences, and data access. Use them to their full potential to ensure compliance and transparency.

CRM Consent Management Dashboard - Establish AI-Generated Content Review Loops: If you’re using AI for content creation (emails, social posts, ad copy), implement a mandatory human review step. This isn’t just for quality; it’s to catch factual inaccuracies, inappropriate tone, or subtle biases that AI might introduce.

- Conduct Audience Segmentation Sanity Checks: When AI suggests new audience segments for campaigns, don’t just accept them. Manually review the criteria and the resulting audience demographics. Look for patterns that might inadvertently exclude or unfairly target specific groups.

- Monitor Performance for Unintended Outcomes: Beyond conversion rates, track customer feedback, sentiment, and engagement patterns. A sudden drop in engagement from a specific demographic, or an increase in negative comments, could signal an issue with AI-driven targeting or messaging.
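The monitoring step can start as a simple period-over-period comparison of engagement by demographic or segment. The sketch assumes you can export an engagement rate (for example, clicks divided by delivered emails) per segment for two comparable periods; the segment names, rates, and 25% relative-drop threshold are illustrative.

```python
def engagement_drops(previous, current, max_relative_drop=0.25):
    """Return {segment: relative_drop} for segments whose engagement fell too far.

    previous / current: dicts mapping segment name -> engagement rate for two
    comparable periods (e.g. last month vs. this month).
    """
    flagged = {}
    for segment, before in previous.items():
        after = current.get(segment)
        if after is None or before == 0:
            continue  # no comparable figure for this segment
        drop = (before - after) / before
        if drop > max_relative_drop:
            flagged[segment] = round(drop, 3)
    return flagged

last_month = {"18-24": 0.040, "25-34": 0.050, "35+": 0.045}
this_month = {"18-24": 0.025, "25-34": 0.049, "35+": 0.044}
print(engagement_drops(last_month, this_month))  # {'18-24': 0.375}
```

A flagged segment is a reason to examine what the AI changed in targeting or messaging for that audience, alongside customer feedback and sentiment, rather than an automatic verdict.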

What to Deprioritize and Why for SMBs

For small to mid-sized teams, it’s critical to understand where *not* to spend your limited resources. Today, we strongly advise against investing heavily in complex Explainable AI (XAI) solutions or bespoke bias detection algorithms. While valuable in highly regulated industries or for very large-scale AI deployments, these tools are often overkill for typical marketing applications and demand significant technical expertise and budget. They can quickly become a resource drain without providing proportional practical benefits for your scale. Instead, focus on the simpler, process-based controls and human oversight mechanisms outlined above. These provide immediate, tangible risk mitigation without the steep learning curve or financial outlay.

Building Trust, One AI Decision at a Time

Implementing responsible AI isn’t a one-time project; it’s an ongoing commitment. It requires continuous vigilance, iterative adjustments, and a culture that values transparency and accountability. By focusing on practical, actionable steps within your existing marketing stack, you can build a foundation of trust with your customers. This approach ensures your AI initiatives not only drive growth but also reinforce your brand’s integrity, proving that smart marketing and ethical automation can, and should, go hand-in-hand.
