Why Vetting Matters More Than Ever

Navigating the explosion of AI tools can feel overwhelming, especially for small to mid-sized marketing teams. This article cuts through the noise, providing a practical framework to evaluate AI solutions based on real-world impact, seamless integration, and clear ROI. You’ll learn how to prioritize tools that genuinely solve your team’s immediate challenges, avoid costly distractions, and make informed decisions that drive tangible business growth without overstretching your limited resources.

As of early 2026, the market is saturated with AI solutions promising everything from automated content creation to predictive analytics. For marketing teams with limited budgets and headcount, simply adopting the latest trend is a recipe for wasted resources. The critical skill now isn’t just identifying AI tools, but rigorously vetting them against your operational realities and strategic goals.

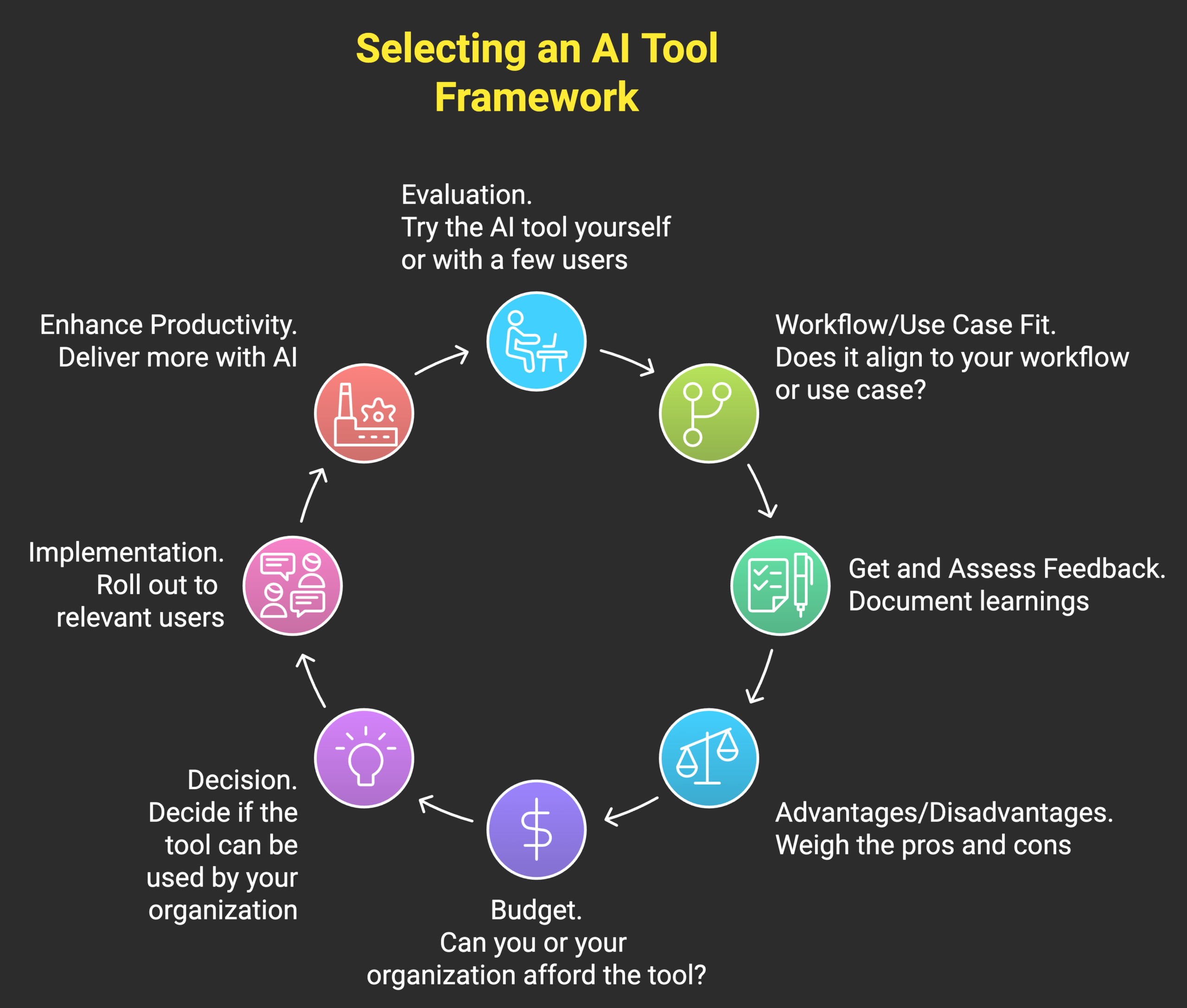

The Core Vetting Framework: Impact, Integration, and ROI

When evaluating any AI tool, we filter through three essential lenses. These aren’t theoretical concepts; they’re pragmatic checkpoints designed to ensure a tool delivers real value under real-world constraints.

- Impact: Does it solve a real problem? This is paramount. The tool must address a specific, measurable pain point or unlock a clear opportunity for your team. Avoid tools that are merely ‘nice-to-have’ or solve problems you don’t actually have. Focus on areas where AI can significantly reduce manual effort, improve accuracy, or provide insights that were previously unattainable due to resource limitations.

- Integration: How well does it fit your existing workflow? A powerful AI tool is useless if it disrupts your entire operation or requires a complete overhaul of your tech stack. Look for solutions that integrate smoothly with your current platforms (CRM, email marketing, analytics, project management). Minimal friction in adoption means faster time to value and less strain on your team.

- ROI: Is there a clear path to measurable returns? Before committing, you must be able to articulate how the tool will generate a return on investment. This could be through cost savings (e.g., reduced agency spend, fewer hours on repetitive tasks), revenue generation (e.g., improved conversion rates, better lead quality), or efficiency gains that free up your team for higher-value work. If you can’t define the ROI, it’s a red flag.
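To make the ROI lens concrete, here is a minimal sketch of a back-of-the-envelope calculation. The field names and the figures in the example are hypothetical placeholders, not benchmarks; substitute your own estimates for subscription cost, hours saved, and the time your team spends on setup and oversight.

```python
from dataclasses import dataclass


@dataclass
class ToolEstimate:
    """Illustrative monthly estimates for one tool (all fields are hypothetical)."""
    subscription_cost: float     # monthly subscription fee
    hours_saved: float           # staff hours saved per month
    hourly_rate: float           # loaded cost of one staff hour
    added_revenue: float = 0.0   # monthly revenue you attribute to the tool
    overhead_hours: float = 0.0  # monthly hours spent on setup, training, oversight


def monthly_roi(e: ToolEstimate) -> float:
    """Return ROI as a fraction: (benefit - cost) / cost."""
    benefit = e.hours_saved * e.hourly_rate + e.added_revenue
    cost = e.subscription_cost + e.overhead_hours * e.hourly_rate
    if cost <= 0:
        raise ValueError("cost must be positive to compute ROI")
    return (benefit - cost) / cost


# Example with made-up numbers: 20 hours saved, 4 hours of overhead per month.
estimate = ToolEstimate(subscription_cost=300, hours_saved=20,
                        hourly_rate=50, overhead_hours=4)
print(f"Estimated monthly ROI: {monthly_roi(estimate):.0%}")
```

Counting overhead hours as a cost is a deliberate choice here: it keeps the estimate honest about the time a tool consumes as well as the time it saves.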

What often gets overlooked in the pursuit of ‘impact’ is the subtle drain of a tool that only solves a peripheral problem. It might offer a marginal improvement, but the real cost isn’t just the subscription fee; it’s the opportunity cost of your team’s attention and the cognitive load of managing another system. These ‘nice-to-haves’ can quickly become ‘nice-to-maintain,’ consuming time in setup, training, and ongoing oversight that could have gone to initiatives with demonstrably higher leverage.

Beyond technical compatibility, the true integration challenge often lies with your team and your data. A tool might plug into your CRM perfectly, but if your existing data is inconsistent or incomplete, the AI’s output will be flawed, leading to frustration and a rapid erosion of trust among users. Furthermore, even a seamless technical integration can fail if the new workflow isn’t intuitively adopted by the people who need to use it daily. The friction isn’t always in the API; it’s in the human habit and the quality of the inputs you feed it.

The pressure to demonstrate ROI can also lead to a common pitfall: overstating early wins or attributing general business improvements solely to the new AI tool. Without a rigorously defined baseline and clear, isolated metrics, it’s easy to fall into confirmation bias. When the initial hype fades and the promised returns don’t materialize consistently, it doesn’t just mean a wasted investment; it can breed cynicism within the team, making it harder to gain buy-in for future, genuinely impactful technology initiatives. This erosion of internal credibility is a significant, often unquantified, downstream cost.
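One way to guard against crediting the tool with general business improvements is to compare the pilot group against a comparison group measured over the same period. The sketch below is a simplified, difference-in-differences style check on relative changes; the function names and lead counts are illustrative assumptions, and a real analysis would also need to account for sample size and noise.

```python
def relative_change(before: float, after: float) -> float:
    """Relative change from a baseline value, e.g. 0.10 for a 10% increase."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


def isolated_lift(pilot_before: float, pilot_after: float,
                  control_before: float, control_after: float) -> float:
    """Change in the pilot group minus the change seen without the tool."""
    return (relative_change(pilot_before, pilot_after)
            - relative_change(control_before, control_after))


# Hypothetical monthly qualified leads: the pilot campaign used the AI tool,
# the comparison campaign did not.
lift = isolated_lift(pilot_before=200, pilot_after=260,
                     control_before=200, control_after=220)
print(f"Lift attributable to the tool (rough estimate): {lift:.0%}")
```

In this made-up case the pilot grew 30 percent, but the comparison campaign grew 10 percent on its own, so only the difference is a candidate for attribution to the tool.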

Given these practical realities, it’s prudent to deprioritize any AI tool that doesn’t clearly meet all three criteria from the outset. Specifically, resist the urge to adopt solutions that require significant data cleanup before they can even function, or those that promise vague ‘efficiency gains’ without a concrete, measurable link to your bottom line. The operational overhead and potential for team disillusionment from a poorly vetted tool far outweigh the theoretical benefits of being ‘early adopters’ of every new AI trend.

Prioritizing for Small Teams: What to Do First

For small to mid-sized teams, prioritization isn’t just about what’s important; it’s about what’s *most* important given your constraints. Start with AI tools that address immediate, high-leverage problems with a clear, measurable impact and minimal implementation overhead.

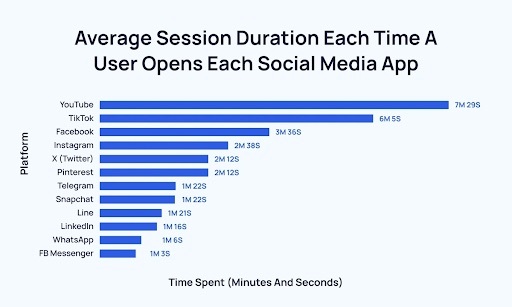

- Content Generation for Specific Tasks: Focus on AI for first drafts of blog posts, social media captions, ad copy variations, or email subject lines. These tools can significantly speed up the initial ideation and drafting process, freeing up writers for editing and strategic refinement.

- Basic Data Analysis & Reporting Automation: Look for AI that can automate the aggregation and initial interpretation of marketing data, generating routine reports or identifying basic trends. This saves hours typically spent on manual data compilation.

- Customer Service Automation for FAQs: Implementing AI chatbots for common customer inquiries can reduce the load on your support team, improve response times, and allow human agents to focus on more complex issues.

The key is to start small, prove value with a contained project, and then scale. Don’t try to boil the ocean. Incremental gains build momentum and demonstrate the tangible benefits of AI to your stakeholders.

What often gets overlooked in the initial excitement is the downstream impact of AI on team workflows and skill sets. For instance, while AI can generate content quickly, the quality of that “first draft” is rarely publication-ready. Teams often find themselves spending significant time editing, fact-checking, and refining AI output to match their brand voice and accuracy standards. This isn’t always a time-saver; it’s a shift in labor from creation to intensive curation, which can be frustrating when expectations are set for full automation. Therefore, deprioritize any AI content tool that promises “set it and forget it” content generation without a robust human review process built in.
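The shift from creation to curation is easy to check with simple arithmetic. This sketch compares total minutes per piece with and without an AI first draft; the function name and all timings are hypothetical and should be replaced with figures your team actually records.

```python
def net_minutes_saved(manual_draft: float, manual_edit: float,
                      ai_draft: float, ai_edit: float) -> float:
    """Minutes saved per piece; a negative result means the AI workflow is slower."""
    return (manual_draft + manual_edit) - (ai_draft + ai_edit)


# Hypothetical timings in minutes for one blog post.
light_editing = net_minutes_saved(manual_draft=120, manual_edit=30,
                                  ai_draft=10, ai_edit=90)
heavy_editing = net_minutes_saved(manual_draft=120, manual_edit=30,
                                  ai_draft=10, ai_edit=150)
print(light_editing, heavy_editing)
```

With these made-up numbers, the AI draft saves time when editing takes 90 minutes and costs time when editing takes 150, which is why editing and fact-checking effort belongs in the estimate from the start.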

Similarly, automating basic data analysis can free up time, but it also carries the risk of superficial understanding. If teams rely solely on AI to highlight trends, they might lose the critical thinking muscle needed to dig deeper into *why* those trends exist or to identify non-obvious connections. This can lead to acting on correlation rather than causation, or missing the strategic nuances that only a human with broader business context can grasp. The consequence is often a series of tactical decisions that don’t align with larger strategic goals. Deprioritize any approach that treats AI as a substitute for human analytical judgment rather than an augmentation of it.

The real challenge isn’t just implementing the tool, but integrating it effectively into human processes. This requires new skills, like prompt engineering and critical evaluation of AI outputs, which small teams often don’t budget for in terms of training or time. Without this adaptation, AI tools can become another source of operational friction rather than a seamless accelerator, leading to a cycle of adoption and abandonment as teams struggle with the practical realities. Don’t underestimate the hidden cost of upskilling your team; it’s a necessary investment, not an optional extra.

What to Deprioritize (or Skip Entirely) Today

For teams with limited budgets and headcount, the biggest trap isn’t picking one wrong AI tool; it’s trying to implement too many tools at once, or tools of a kind your team can’t realistically support. Today, you should actively deprioritize complex, all-in-one ‘AI suites’ that promise to revolutionize every aspect of your marketing. These often come with steep learning curves, require significant data migration, and demand dedicated resources for setup and maintenance, which most small teams simply don’t have. Similarly, be wary of ‘bleeding edge’ AI solutions without a proven track record or clear, immediate use cases for your specific business. The risk of investing time and money in unstable, unproven technology is too high when you need reliable, incremental gains.

Instead of chasing every new AI trend, focus on tools that solve a specific, measurable problem with minimal disruption. Avoid AI for tasks that are already handled efficiently by simpler, existing tools, or those that require a complete overhaul of your current workflows. Your priority should be practical application and measurable return, not being first to adopt every new piece of tech. Delaying adoption of complex, high-risk AI allows you to observe how the market matures and how other, larger organizations navigate these challenges, saving your team from becoming an expensive beta tester.

Practical Evaluation Steps: Beyond the Demo

Once you’ve identified a potential AI tool, move beyond the vendor’s marketing claims and conduct a rigorous practical evaluation.

- Define Clear Success Metrics: Before starting a trial, establish exactly what success looks like. Is it a ten percent reduction in content creation time? A five percent increase in lead quality? Without clear metrics, you can’t objectively assess performance.

- Pilot with a Small, Contained Project: Don’t roll out a new AI tool across your entire operation immediately. Select a specific campaign, content type, or customer segment for a pilot project. This minimizes risk and allows for focused learning.

- Assess Data Privacy and Security: This is non-negotiable. Understand how the tool handles your data, where it’s stored, and what security protocols are in place. For SMBs, a data breach can be catastrophic.

- Evaluate Vendor Support and Roadmap: A tool is only as good as the support behind it. Assess the responsiveness and quality of customer service. Also, understand the vendor’s product roadmap – is it aligned with your future needs?

- Consider the ‘Human in the Loop’ Aspect: AI should augment, not replace, human intelligence. Ensure the tool allows for human oversight, editing, and strategic input. Fully autonomous AI in critical marketing functions is often premature and risky.
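The success-metric step above can be sketched as a small check that is written down before the pilot starts and run once it ends. The class, metric names, baselines, and observed values below are hypothetical; only the ten percent and five percent targets echo the examples in the list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A success metric agreed before the pilot (illustrative structure)."""
    name: str
    baseline: float        # value measured before the pilot
    target_change: float   # required relative change, e.g. -0.10 = 10% reduction


def met_target(metric: Metric, observed: float) -> bool:
    """True if the observed value moved at least as far as the target requires."""
    change = (observed - metric.baseline) / metric.baseline
    if metric.target_change < 0:
        return change <= metric.target_change
    return change >= metric.target_change


# Hypothetical pilot results.
creation_time = Metric("content creation hours per post", baseline=10.0, target_change=-0.10)
lead_quality = Metric("share of leads marked qualified", baseline=0.40, target_change=0.05)

results = {
    creation_time.name: met_target(creation_time, observed=8.5),
    lead_quality.name: met_target(lead_quality, observed=0.41),
}
pilot_passes = all(results.values())
print(results, pilot_passes)
```

Requiring every metric to pass is one possible rule, chosen here for simplicity; a team could just as reasonably weight metrics or require only the primary one.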

Making the Decision: When to Commit

The decision to commit to an AI tool should be driven by demonstrated value, not just potential. If your pilot project shows clear, measurable impact against your defined success metrics, and the tool integrates well without undue strain on your team, then you have a strong case for adoption. Remember, the goal is to enhance your marketing capabilities and efficiency within your team’s real-world constraints, not to chase every shiny new object. Prioritize tools that deliver tangible results and empower your team to do more with less.
