Implementing AI responsibly isn’t just a compliance checkbox; it’s a strategic imperative for small to mid-sized businesses looking to build trust, protect their brand, and ensure sustainable growth. This guide cuts through the noise, offering a pragmatic roadmap to integrate ethical considerations into your AI initiatives without requiring a dedicated ethics department or an unlimited budget.

You’ll learn how to prioritize actions, identify immediate risks, and establish practical safeguards that work within your operational realities. Our focus is on actionable steps that deliver real value and mitigate potential pitfalls, allowing you to leverage AI’s power responsibly from day one.

Why Ethical AI Matters for SMBs (Beyond Compliance)

If you run an SMB, trust is your most valuable asset. Unethical AI practices, even unintentional ones, can erode customer loyalty, damage your reputation, and invite regulatory scrutiny. This isn’t just about avoiding fines; it’s about maintaining the goodwill that fuels your business. Every AI tool, from a simple chatbot to a sophisticated recommendation engine, carries ethical implications that need to be managed.

- Reputation and Trust: Customers increasingly care about how businesses use AI and handle customer data. Demonstrating ethical practices builds long-term loyalty.

- Risk Mitigation: Proactive ethical considerations help you avoid costly data breaches, biased outcomes, and potential legal challenges.

- Competitive Edge: Businesses known for responsible AI use can differentiate themselves in a crowded market, attracting conscious consumers and talent.

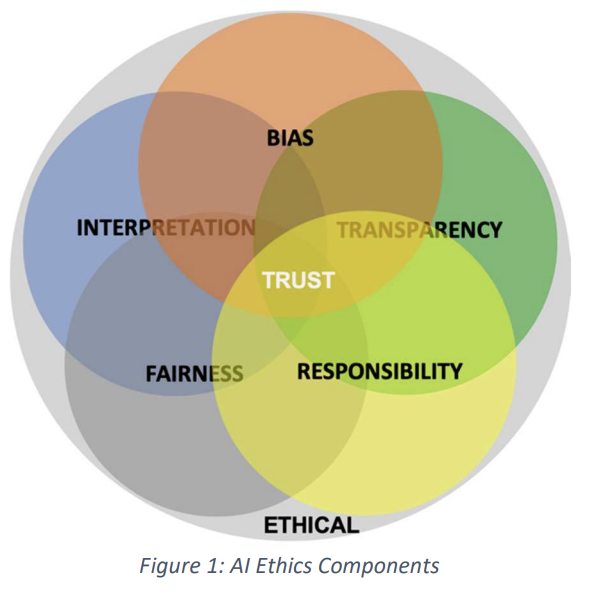

Prioritizing Your Ethical AI Framework

Given limited resources, you can’t tackle everything at once. Here’s how to focus your efforts for maximum impact.

What to Do First:

- Define Core Values & Principles: Start by articulating what “ethical” means for your business. How do your existing company values translate to AI use? This provides a compass for all future decisions.

- Identify High-Risk AI Use Cases: Pinpoint the AI applications that directly interact with customers or handle sensitive data. Examples include AI-powered customer service, personalized marketing, or any tool influencing hiring decisions. These are your immediate priorities for ethical review.

- Basic Data Governance: Understand your data. Where does it come from? How is it collected, stored, and used? What’s its quality? Poor data hygiene is often the root of ethical AI problems. Implement basic data mapping and access controls.
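
A basic data map does not need special tooling. The sketch below is one hypothetical way to record each dataset and flag obvious governance gaps; the `DataAsset` fields, the example datasets, and the two checks are illustrative assumptions, not a standard schema.

```python
from dataclasses import dataclass, field


@dataclass
class DataAsset:
    """One row in a lightweight data map (all field names are illustrative)."""
    name: str
    source: str                      # where the data comes from
    contains_personal_data: bool
    owner: str = ""                  # person accountable for this data
    allowed_roles: set[str] = field(default_factory=set)


def find_governance_gaps(assets: list[DataAsset]) -> dict[str, list[str]]:
    """Return the gaps found for each asset; assets with no gaps are omitted."""
    gaps: dict[str, list[str]] = {}
    for asset in assets:
        problems = []
        if not asset.owner:
            problems.append("no owner assigned")
        if asset.contains_personal_data and not asset.allowed_roles:
            problems.append("personal data without access controls")
        if problems:
            gaps[asset.name] = problems
    return gaps


# Hypothetical inventory for a small online retailer.
inventory = [
    DataAsset("crm_contacts", "web signup form", True,
              owner="marketing lead", allowed_roles={"marketing", "support"}),
    DataAsset("chat_transcripts", "support chatbot", True),
    DataAsset("product_catalog", "internal database", False,
              owner="product manager"),
]

gaps = find_governance_gaps(inventory)
for name, problems in gaps.items():
    print(f"{name}: {', '.join(problems)}")
```

Even a spreadsheet with the same columns would serve the purpose; the point is that every dataset feeding an AI tool has a known source, an owner, and a defined set of people who can access it.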

What to Delay:

- Complex AI Audits: Don’t get bogged down in deep, technical AI model audits initially. Focus on high-level checks and observable outcomes. You need to understand what the AI does, not necessarily how every line of code works.

- Developing Proprietary Ethical AI Tools: Leverage existing frameworks, guidelines, and third-party tools that incorporate ethical features. Building custom solutions is resource-intensive and rarely necessary for SMBs.

What often gets overlooked in the rush to adopt AI is the compounding effect of skipping foundational steps. For instance, failing to clearly articulate your core values and principles early on doesn’t just mean a missing document; it creates a vacuum where inconsistent decisions will inevitably arise. Different teams will interpret “ethical” through their own lens, leading to internal friction, rework, and a lack of unified direction when new, complex AI use cases emerge. This isn’t a theoretical problem; it’s a real source of decision paralysis and frustration for teams trying to move quickly.

Similarly, neglecting basic data governance isn’t merely a technical oversight; it’s building ethical debt. Cleaning up messy, biased, or non-compliant data after an AI system has been deployed and is already causing issues is far more costly and disruptive than establishing sound practices upfront. The downstream effect is not just flawed AI output but a potential erosion of customer trust and brand reputation that can take years to rebuild, far outweighing any initial time savings.

Another common pitfall is treating an ethical AI framework as a static compliance checklist rather than an active, living guide for decision-making. Under real-world pressure to deliver features or improve efficiency, teams can easily fall into a “check-the-box” mentality. The framework might exist on paper, but if it doesn’t genuinely influence daily product development choices and operational processes, it becomes performative. This gap between the written policy and practical application leaves the organization vulnerable to the very ethical risks it sought to mitigate, creating a false sense of security.

Practical Steps for Responsible AI Deployment

Once you have your priorities, these are the actionable steps to integrate ethics into your AI operations.

Transparency & Explainability:

Be clear with your users when AI is involved. If a chatbot is answering questions, state it. If a recommendation engine is suggesting products, explain the basis (e.g., “based on your past purchases”). This builds trust and manages expectations. Avoid opaque “black box” scenarios where users don’t understand why an AI made a particular decision.

Bias Mitigation:

AI models are only as good as the data they’re trained on. Biased data leads to biased outcomes. For SMBs, this means:

- Data Source Scrutiny: Question the origin of your training data. Is it representative? Does it inadvertently exclude or misrepresent certain groups?

- Regular Output Review: Periodically check AI outputs for unintended biases. For example, if your AI-powered hiring tool consistently favors one demographic, investigate why.

- Feedback Loops: Encourage users to report unfair or inaccurate AI behavior.
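
A regular output review can start as a simple comparison of outcome rates across groups. The sketch below is a minimal example of that idea, assuming you can export each AI decision as a (group, selected) pair. The 0.8 review threshold borrows the four-fifths rule of thumb from US employment-selection guidance; it is used here as an assumed trigger for a closer look, not as a legal test or a complete fairness measure.

```python
from collections import defaultdict


def selection_rates(records):
    """Share of favorable outcomes per group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the AI tool produced the favorable outcome.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def impact_ratio(rates):
    """Lowest group rate divided by the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so there is no disparity to measure
    return min(rates.values()) / highest


REVIEW_THRESHOLD = 0.8  # assumption: a rule-of-thumb trigger, not a legal test

# Made-up sample: 10 decisions per group.
sample = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

rates = selection_rates(sample)
ratio = impact_ratio(rates)
needs_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 2), needs_review)
```

A low ratio does not prove the tool is biased, and a high one does not prove it is fair. It only tells you where to investigate first, which is usually enough for a small team working from observable outcomes.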

Data Privacy & Security:

This is non-negotiable. Ensure your AI systems comply with relevant data protection regulations like GDPR or CCPA. Minimize the data you collect, only gathering what’s strictly necessary. Implement robust security measures to protect data from breaches.
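
One practical way to apply data minimization is to strip a record down to an explicit allowlist before it reaches any AI tool. The following is a minimal sketch under that assumption; the field names and the `minimize` helper are hypothetical, and the right allowlist depends on what your specific AI feature actually needs.

```python
# Hypothetical allowlist for a product-recommendation feature.
ALLOWED_FIELDS = {"order_id", "product_category", "purchase_date"}


def minimize(record: dict, allowed: set[str] = ALLOWED_FIELDS) -> dict:
    """Keep only the fields the AI feature needs; drop everything else."""
    return {key: value for key, value in record.items() if key in allowed}


customer_record = {
    "order_id": "A-1001",
    "product_category": "garden tools",
    "purchase_date": "2024-03-02",
    "email": "jane@example.com",
    "home_address": "12 Example Street",
}

# Only the allowlisted fields are passed on to the AI tool or vendor.
payload = minimize(customer_record)
print(payload)
```

An allowlist is used rather than a blocklist so that any new field added to your records later is excluded by default until someone decides it is needed.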

Human Oversight & Feedback Loops:

AI should augment human capabilities, not replace human judgment entirely. Establish clear points where humans can review, override, or refine AI decisions. Implement mechanisms for users to provide feedback on AI performance, which can then be used to improve the system iteratively.
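
A review point can be as simple as a routing rule that decides which AI decisions a person must see. The sketch below assumes your AI tool exposes some confidence score and that you can label certain decision types as high stakes; the `Decision` fields, the 0.9 cutoff, and the example cases are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    case_id: str
    outcome: str
    confidence: float   # model's self-reported score between 0 and 1
    high_stakes: bool   # e.g. affects hiring or account access


def route(decision: Decision, min_confidence: float = 0.9) -> str:
    """Return "human_review" or "auto_apply" for a single AI decision."""
    if decision.high_stakes:
        return "human_review"   # a person always signs off on these
    if decision.confidence < min_confidence:
        return "human_review"   # the model is unsure, so escalate
    return "auto_apply"


# Made-up decisions from a support chatbot and a hiring screen.
decisions = [
    Decision("ticket-1", "send FAQ link", confidence=0.97, high_stakes=False),
    Decision("ticket-2", "issue refund", confidence=0.62, high_stakes=False),
    Decision("applicant-7", "reject", confidence=0.99, high_stakes=True),
]

queue = {d.case_id: route(d) for d in decisions}
print(queue)
```

Sending only uncertain or high-stakes cases to a person keeps the review queue small enough for reviewers to give each case real attention. The threshold itself should be revisited as you learn how reliable the tool's confidence scores are.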

Vendor Due Diligence:

If you’re using third-party AI tools (as most SMBs are), thoroughly vet your vendors. Ask about their data privacy policies, bias mitigation strategies, and how they ensure ethical AI development. Don’t assume their ethics align with yours.

What’s often missed in the push for “ethical AI” is the sustained operational burden. Bias mitigation, for instance, isn’t a checkbox; it’s a continuous, resource-intensive process. Addressing one type of bias can inadvertently surface another, or even degrade performance for a different user segment. Teams often face the unenviable task of making trade-offs between different definitions of “fairness,” a problem that has no single technical solution and can lead to significant internal friction and decision fatigue.

Similarly, “human oversight” often sounds like a simple solution, but in practice, it introduces its own set of challenges. When AI systems generate high volumes of decisions or recommendations, human reviewers can quickly become fatigued, leading to inconsistent judgments or, worse, simply rubber-stamping AI outputs without true scrutiny. This creates a false sense of security, where the system appears to be “human-verified” but is, in reality, operating with uncorrected flaws that compound over time. The pressure on human teams to keep up with AI output can lead to burnout and a degradation of the very judgment AI was meant to augment.

Another overlooked aspect is the practical application of transparency, especially with third-party AI solutions. While vendors might claim their models are “explainable,” the level of detail or the format of that explanation is often geared towards technical users, not your end customers. Your team is then left to translate complex algorithmic reasoning into digestible, trust-building language, which is a significant, often unbudgeted, product and content challenge. This gap between theoretical explainability and practical user transparency can erode trust just as quickly as a completely opaque system.

What to Deprioritize or Skip Today

For small to mid-sized businesses operating with lean teams and tight budgets, it’s crucial to distinguish between essential ethical practices and aspirational initiatives that can drain resources without immediate, tangible benefits. Today, you should deprioritize or skip:

- Building a dedicated “AI Ethics Committee” from scratch: While large enterprises might benefit from this, for SMBs it’s unnecessary overhead. Instead, integrate ethical considerations into existing roles and team meetings. Empower your product manager, marketing lead, or IT specialist to champion ethical AI within their scope. This ensures accountability without creating a new, potentially isolated, bureaucratic layer.

- Over-engineering custom AI ethics frameworks: Don’t spend months developing a bespoke framework. Start with widely accepted principles like fairness, accountability, and transparency, and adapt them to your specific context. There are plenty of excellent, publicly available guidelines that can serve as a strong foundation.

- Chasing every new AI ethics certification: The landscape of AI ethics certifications is still evolving. Focus on demonstrating practical, verifiable commitment to responsible AI through your actions and policies, rather than investing heavily in certifications that may not yet have widespread recognition or direct relevance to your customer base.

- Extensive academic research into AI ethics theory: While intellectual curiosity is valuable, your immediate need is actionable steps. Rely on practical guides, industry best practices, and expert summaries rather than diving deep into theoretical debates. Your time is better spent implementing safeguards than debating philosophical nuances.

Building a Culture of Responsible AI

Ethical AI isn’t a project with a start and end date; it’s an ongoing commitment that requires a cultural shift.

- Training & Awareness: Provide basic training to your team on what ethical AI means for your business and their roles. This doesn’t need to be extensive; a concise internal guide or workshop can suffice.

- Open Dialogue: Foster an environment where team members feel comfortable raising ethical concerns about AI tools or data practices. Encourage critical thinking about potential impacts.

- Iterative Approach: Treat ethical AI as a continuous improvement process. Regularly review your AI systems, gather feedback, and be prepared to adapt your practices as technology evolves and new ethical challenges emerge.
