Why Transparency Matters for Your AI Marketing Today

As AI tools become integral to marketing, small and mid-sized businesses face a critical challenge: ensuring these systems are transparent and trustworthy. This isn’t about understanding complex algorithms; it’s about practical risk management and maintaining brand integrity. This article will guide you through actionable steps to evaluate, implement, and manage AI marketing tools with transparency in mind, helping you make informed decisions under real-world constraints.

You’ll learn what to prioritize today to safeguard your brand and campaign effectiveness, what can be delayed, and what common pitfalls to avoid. Our focus is on pragmatic approaches that work even with limited budgets and headcount, ensuring your AI investments build, rather than erode, customer and internal trust.

Identifying “Black Box” Risks in Your AI Tools

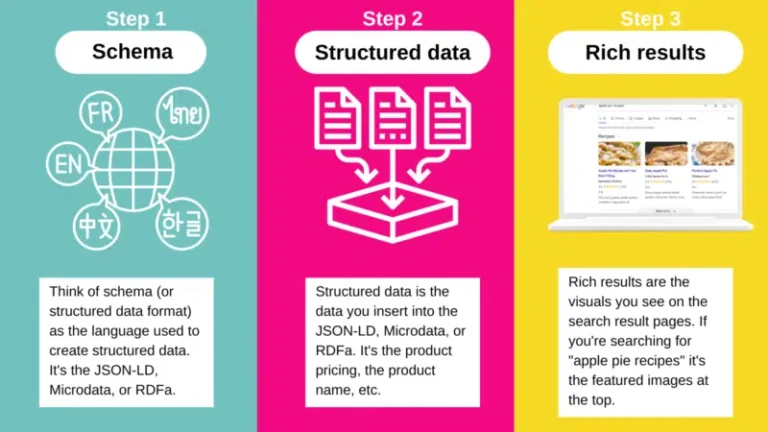

Many off-the-shelf AI marketing tools, while powerful, operate with varying degrees of transparency. The “black box” isn’t always malicious; it’s often a byproduct of proprietary algorithms or complexity. For SMBs, the risk isn’t in the code itself, but in the lack of insight into how decisions are made, data is used, and outputs are generated. This opacity can hide biases, inefficiencies, or even misalignments with your marketing goals.

- Ad Targeting Algorithms: How are audiences segmented? What data points are prioritized?

- Content Generation Tools: What sources inform the AI? How are biases mitigated?

- Personalization Engines: What criteria drive specific recommendations or experiences?

- Predictive Analytics: On what basis are future outcomes forecast? What are the confidence levels?

- Data Usage and Privacy: How is customer data processed and protected within the AI system?

The immediate consequence of this opacity is often a delayed reaction to performance shifts. When an AI tool’s output begins to degrade, or its targeting becomes less effective, the “black box” nature makes root cause analysis incredibly difficult. Teams often waste valuable time and budget tweaking campaign elements, creative assets, or audience definitions, when the underlying issue might be a subtle drift in the AI model itself, or a change in how it interprets input data. This isn’t just an efficiency problem; it’s a source of significant frustration and misdirected effort, as teams chase symptoms without understanding the core malfunction.

This lack of transparency also creates a critical trust deficit within marketing teams. When an AI generates a recommendation or content piece and the team can’t articulate the “why” behind it, confidence erodes. Practitioners are still accountable for results, and being unable to explain or defend an AI’s output to internal stakeholders or clients puts them in a vulnerable position. This pressure often leads to over-verification, manual overrides, or a reluctance to fully embrace the AI’s capabilities, effectively negating the very efficiency gains the tool was purchased to provide. This is a second-order effect: the black box doesn’t just obscure data, it can undermine team autonomy and operational agility.

Furthermore, the “garbage in, garbage out” principle is amplified within an opaque system. If the data feeding the AI is biased, incomplete, or outdated, the resulting outputs will reflect those flaws. However, without visibility into the AI’s processing logic, it becomes nearly impossible to identify where the breakdown occurred. Was it the initial data input? The AI’s interpretation? Or the algorithm’s inherent biases? This makes continuous learning and iterative improvement a guessing game, hindering the team’s ability to refine their strategy based on reliable insights.

For SMBs, the practical implication is not to demand full algorithmic transparency – that’s often an unrealistic expectation for proprietary tools. Instead, the focus must shift to rigorous monitoring of inputs and outputs. Establish clear performance benchmarks and actively track deviations. Prioritize tools that offer at least some level of explainability for their key decisions, even if it’s not full code access. What should be deprioritized is spending excessive time trying to reverse-engineer the “how” of a black box; instead, invest that energy in understanding the “what” and “whether it works for us,” and be prepared to pivot if performance drifts without clear explanation.
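One lightweight way to track deviations from a benchmark is to compare recent values of a metric against a baseline period. The sketch below is only an illustration, not a feature of any particular tool: the function name `flag_deviations`, the click-through figures, and the threshold of two standard deviations are all assumptions you would replace with your own metric and tolerance.

```python
from statistics import mean, stdev


def flag_deviations(baseline, recent, z_threshold=2.0):
    """Return (index, value, z_score) for recent values far from the baseline."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline observations")
    mu = mean(baseline)
    sigma = stdev(baseline)
    flagged = []
    for i, value in enumerate(recent):
        if sigma == 0:
            # With a perfectly flat baseline, any change counts as a deviation.
            z = 0.0 if value == mu else float("inf")
        else:
            z = (value - mu) / sigma
        if abs(z) >= z_threshold:
            flagged.append((i, value, round(z, 2)))
    return flagged


# Hypothetical daily click-through rates (percent) for one campaign.
baseline_ctr = [2.1, 2.3, 2.0, 2.2, 2.4, 2.1, 2.2]
recent_ctr = [2.2, 2.0, 1.4]

for day, value, z in flag_deviations(baseline_ctr, recent_ctr):
    print(f"day {day}: CTR {value}% is {z} standard deviations from baseline")
```

A flag here does not explain why performance moved. It only tells the team to look at the tool before spending time on creative or audience changes.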

Practical Steps to Evaluate AI Transparency

Before adopting any new AI marketing tool, a critical evaluation of its transparency is necessary. This isn’t about becoming an AI expert, but about asking the right questions and demanding clear answers from vendors. Focus on practical implications for your campaigns and customer relationships.

- Vendor Documentation: Look for clear explanations of how the AI works, its data sources, and its limitations. If it’s vague, push for specifics.

- Data Input/Output Clarity: Understand what data the AI requires and what kind of output it generates. Can you trace an output back to specific inputs?

- Bias Mitigation Claims: Ask how the vendor addresses potential biases in their models, especially for tools impacting audience segmentation or content.

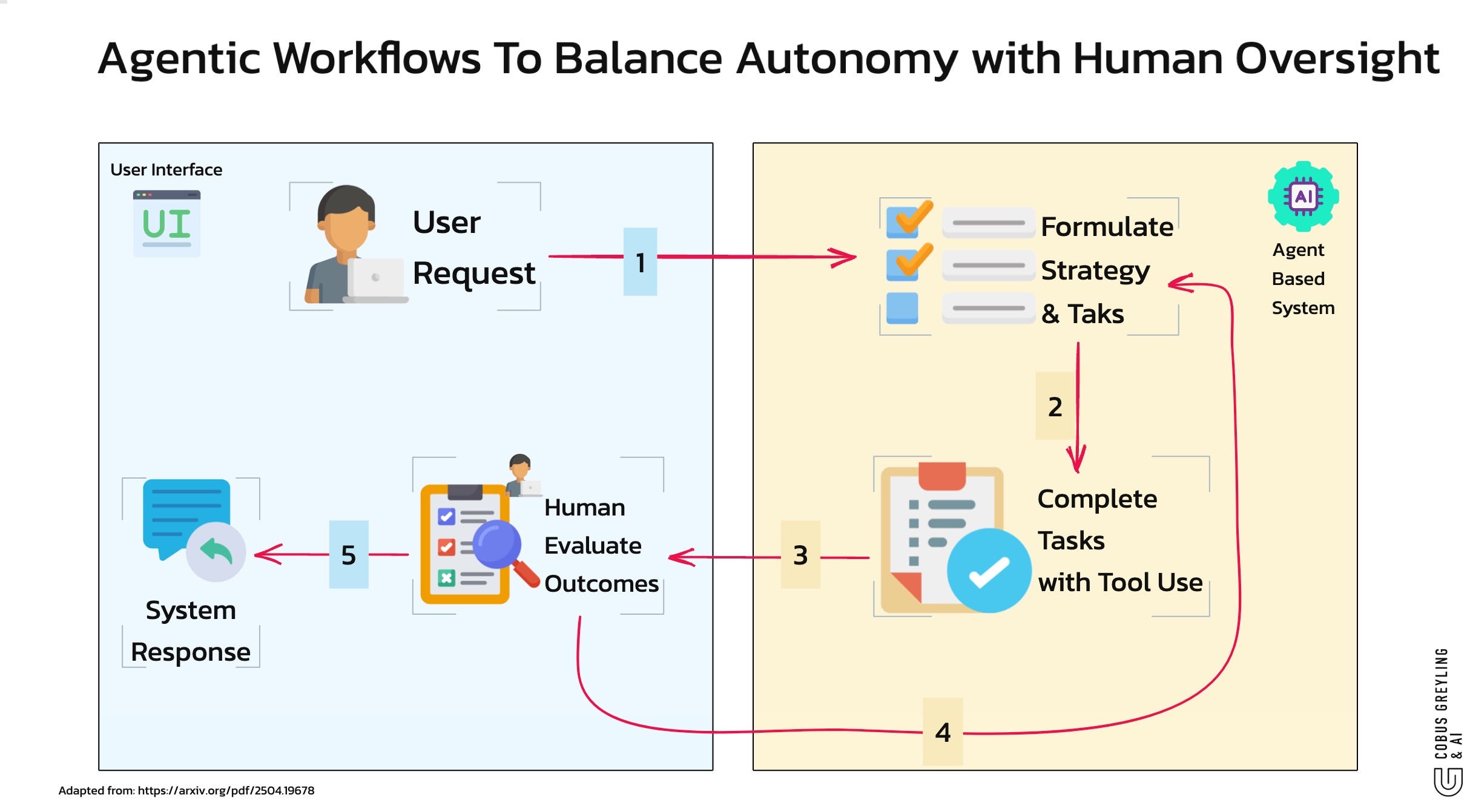

- Human-in-the-Loop Capabilities: Does the tool allow for human review and override of AI-generated decisions or content? This is a non-negotiable for many SMBs.

- Privacy and Security: Ensure the tool complies with your data privacy standards and clearly outlines its data handling practices.

- Trial and Error: Start with smaller, less critical applications to observe the AI’s behavior and output in a controlled environment.
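If a vendor offers no way to trace an output back to its inputs, a team can keep its own record during the trial. The following is a minimal sketch under stated assumptions: the helper names (`fingerprint`, `make_audit_record`, `find_by_inputs`), the tool name, and the field names are hypothetical, and the inputs are assumed to be JSON-serializable.

```python
import hashlib
import json
from datetime import datetime, timezone


def fingerprint(inputs):
    """Stable hash of the inputs, independent of dictionary key order."""
    canonical = json.dumps(inputs, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def make_audit_record(tool_name, inputs, output):
    """Bundle what was sent to the tool with what came back."""
    return {
        "tool": tool_name,
        "logged_at": datetime.now(timezone.utc).isoformat(),
        "input_fingerprint": fingerprint(inputs),
        "inputs": inputs,
        "output": output,
    }


def find_by_inputs(records, inputs):
    """Return every logged record that was produced from these exact inputs."""
    target = fingerprint(inputs)
    return [r for r in records if r["input_fingerprint"] == target]


# Hypothetical example: a subject line suggested by a content tool.
log = [
    make_audit_record(
        "subject-line-tool",
        {"audience": "returning", "product": "spring sale"},
        "Your spring favorites are back",
    )
]

matches = find_by_inputs(log, {"product": "spring sale", "audience": "returning"})
print(len(matches), "matching record(s)")
```

Even a simple log like this lets a reviewer answer "what did we give the tool when it produced this?" without relying on the vendor.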

What often gets overlooked is the true cost of ‘human-in-the-loop’ capabilities. While essential for safety, this isn’t a set-it-and-forget-it feature. It translates directly into unbudgeted human hours. Teams frequently underestimate the volume and complexity of AI outputs requiring review, turning a supposed efficiency gain into a new, often frustrating, manual bottleneck. This can lead to rushed approvals, ‘rubber-stamping’ decisions, or even outright abandonment of the review process when deadlines loom, effectively negating the very safety net you thought you built.
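A rough estimate makes the hidden workload concrete before you commit to a tool. The numbers below are purely illustrative assumptions, as is the function name `weekly_review_hours`; substitute your own output volume and review time.

```python
def weekly_review_hours(outputs_per_week, minutes_per_review, review_share=1.0):
    """Estimate the human hours needed to review AI outputs each week."""
    if not 0.0 <= review_share <= 1.0:
        raise ValueError("review_share must be between 0 and 1")
    return outputs_per_week * review_share * minutes_per_review / 60


# Hypothetical: 120 AI-generated assets a week, 6 minutes each, all reviewed.
hours = weekly_review_hours(outputs_per_week=120, minutes_per_review=6)
print(f"{hours:.1f} review hours per week")
```

If the result is more time than anyone on the team actually has, the review step is likely to be skipped under deadline pressure.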

Beyond the immediate workload, there’s a more insidious, second-order effect: the erosion of team judgment. When AI consistently provides outputs, even with review, teams can develop an ‘automation bias.’ They may stop questioning the underlying assumptions or the subtle nuances of the AI’s suggestions, leading to a gradual drift in strategic thinking or brand voice. This is compounded by a lack of true interpretability – not just what the AI did, but why. If you can’t understand the ‘why’ behind a segmentation choice or a content recommendation, your team can’t learn, adapt, or effectively challenge the AI. You become a passenger, not a pilot, which is a dangerous position for any business trying to maintain a distinct market presence.

For most SMBs, chasing perfect, explainable AI (XAI) from day one is a luxury. You need to prioritize tools that offer sufficient transparency to mitigate immediate risks and allow for human oversight. However, don’t mistake initial functional transparency for long-term strategic insight. While you might deprioritize deep dives into algorithmic mechanics today, recognize that a tool that offers zero insight into its decision-making process will eventually limit your team’s growth and ability to innovate beyond the AI’s current capabilities. It’s a trade-off between immediate utility and future strategic agility.

Building Internal Trust: Data Governance and Human Oversight

Transparency isn’t just about the AI tool itself; it’s also about how your team manages and interacts with it. Establishing clear internal processes and maintaining human oversight are crucial for building and sustaining trust. This means defining roles, setting guidelines, and fostering a culture where AI is seen as an assistant, not a replacement for human judgment.

- Clear Data Input Guidelines: Standardize the data fed into AI tools to minimize inconsistencies and biases.

- Regular Output Review: Implement a process for human marketers to review AI-generated content, targeting suggestions, or performance reports. Don’t blindly trust the AI.

- Feedback Loops: Establish mechanisms to provide feedback to the AI system (if supported) and to the vendor, helping refine its performance and address issues.

- Training and Education: Ensure your team understands the capabilities and limitations of each AI tool. Knowledge empowers better oversight.

- Ethical Use Policy: Develop internal guidelines for the ethical application of AI in marketing, aligning with your brand’s values.
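Data input guidelines are easier to follow when they are written down as checks that run before data reaches the tool. This is a sketch only: the required fields, the allowed segment names, and the 180-day freshness limit are hypothetical rules standing in for whatever your team agrees on.

```python
from datetime import date

# Hypothetical guidelines; replace with your team's own rules.
REQUIRED_FIELDS = {"customer_id", "segment", "last_updated"}
ALLOWED_SEGMENTS = {"new", "returning", "lapsed"}
MAX_AGE_DAYS = 180


def check_record(record, today):
    """Return a list of guideline problems found in one customer record."""
    problems = []
    missing = REQUIRED_FIELDS - set(record)
    if missing:
        problems.append("missing fields: " + ", ".join(sorted(missing)))
    segment = record.get("segment")
    if segment is not None and segment not in ALLOWED_SEGMENTS:
        problems.append(f"unknown segment: {segment!r}")
    last_updated = record.get("last_updated")
    if last_updated is not None and (today - last_updated).days > MAX_AGE_DAYS:
        problems.append("record is older than the freshness limit")
    return problems


today = date(2024, 6, 1)
clean = {"customer_id": "c-001", "segment": "returning", "last_updated": date(2024, 5, 1)}
messy = {"segment": "VIP", "last_updated": date(2023, 1, 15)}

print(check_record(clean, today))
print(check_record(messy, today))
```

Records that fail the checks can be fixed or held back, which narrows down the "garbage in" side of the problem even when the tool itself stays opaque.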

Prioritizing Transparency: What to Do Now, What to Delay

For small to mid-sized teams, resources are always constrained. Your immediate focus should be on the AI tools that directly impact customer-facing interactions or critical decision-making. Prioritize understanding the “why” behind these tools’ outputs and ensuring you have a human override.

- What to Prioritize Now:

  - Customer-Facing AI: Any AI tool that directly interacts with customers (e.g., chatbots, personalization engines, ad targeting) requires immediate transparency scrutiny and human oversight. The risk to brand reputation is highest here.

  - Data Privacy Compliance: Verify that all AI tools align with current data privacy regulations (e.g., GDPR, CCPA). This is non-negotiable.

  - Human-in-the-Loop Integration: Ensure your workflows include mandatory human review points for AI-generated content or decisions before they go live.

- What to Delay or Deprioritize:

  - Deep Technical Audits: For most SMBs, attempting to conduct deep technical audits of proprietary AI algorithms is an inefficient use of resources. Focus instead on the inputs, outputs, and vendor explanations.

  - Building Custom AI Solutions: Unless you have significant in-house AI expertise and budget, developing custom AI from scratch is a massive undertaking with high risk. Leverage proven, transparent off-the-shelf tools instead.

  - Over-optimizing for Edge Cases: Edge cases matter, but don’t get bogged down in theoretical AI bias or failure modes if your core systems lack basic transparency. Address the most common and impactful risks first.

The critical judgment call here is to focus on practical risk mitigation and maintaining customer trust through observable actions, rather than theoretical completeness. Your time is better spent on effective oversight and clear vendor communication than on trying to become an AI architect.
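A mandatory review point can be enforced in the workflow itself rather than left to habit. The sketch below assumes a simple in-house publishing step; the `Draft` class, the `ReviewRequired` exception, and the `approve` and `publish` functions are hypothetical names, not part of any marketing platform.

```python
from dataclasses import dataclass
from typing import Optional


class ReviewRequired(Exception):
    """Raised when AI-generated content reaches publishing without sign-off."""


@dataclass
class Draft:
    content: str
    source: str  # "ai" or "human"
    approved_by: Optional[str] = None


def approve(draft, reviewer):
    """Record which person signed off on the draft."""
    if not reviewer.strip():
        raise ValueError("reviewer name must not be empty")
    draft.approved_by = reviewer
    return draft


def publish(draft):
    """Refuse to publish AI-generated drafts that no human has approved."""
    if draft.source == "ai" and draft.approved_by is None:
        raise ReviewRequired("AI-generated draft needs human approval first")
    return f"PUBLISHED: {draft.content}"


draft = Draft(content="Spring sale starts Friday", source="ai")
try:
    publish(draft)
except ReviewRequired as err:
    print("Blocked:", err)

print(publish(approve(draft, "J. Rivera")))
```

Recording the reviewer's name also gives the team an answer when a stakeholder asks who checked a piece of content before it went live.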

Sustaining Trust in an Evolving AI Landscape

The AI landscape is dynamic. What’s considered transparent today might be insufficient tomorrow. Sustaining trust requires ongoing vigilance, adaptability, and a commitment to continuous learning. This isn’t a one-time setup; it’s an ongoing process of monitoring, adapting, and refining your approach to AI in marketing.

- Continuous Monitoring: Regularly review AI tool performance, output quality, and customer feedback. Look for unexpected patterns or biases.

- Stay Informed: Keep abreast of developments in AI ethics, regulations, and best practices. Follow reputable sources that cover AI ethics and AI marketing best practices.

- Vendor Relationships: Maintain open communication with your AI tool vendors. Provide feedback and inquire about their roadmap for transparency and ethical AI development.

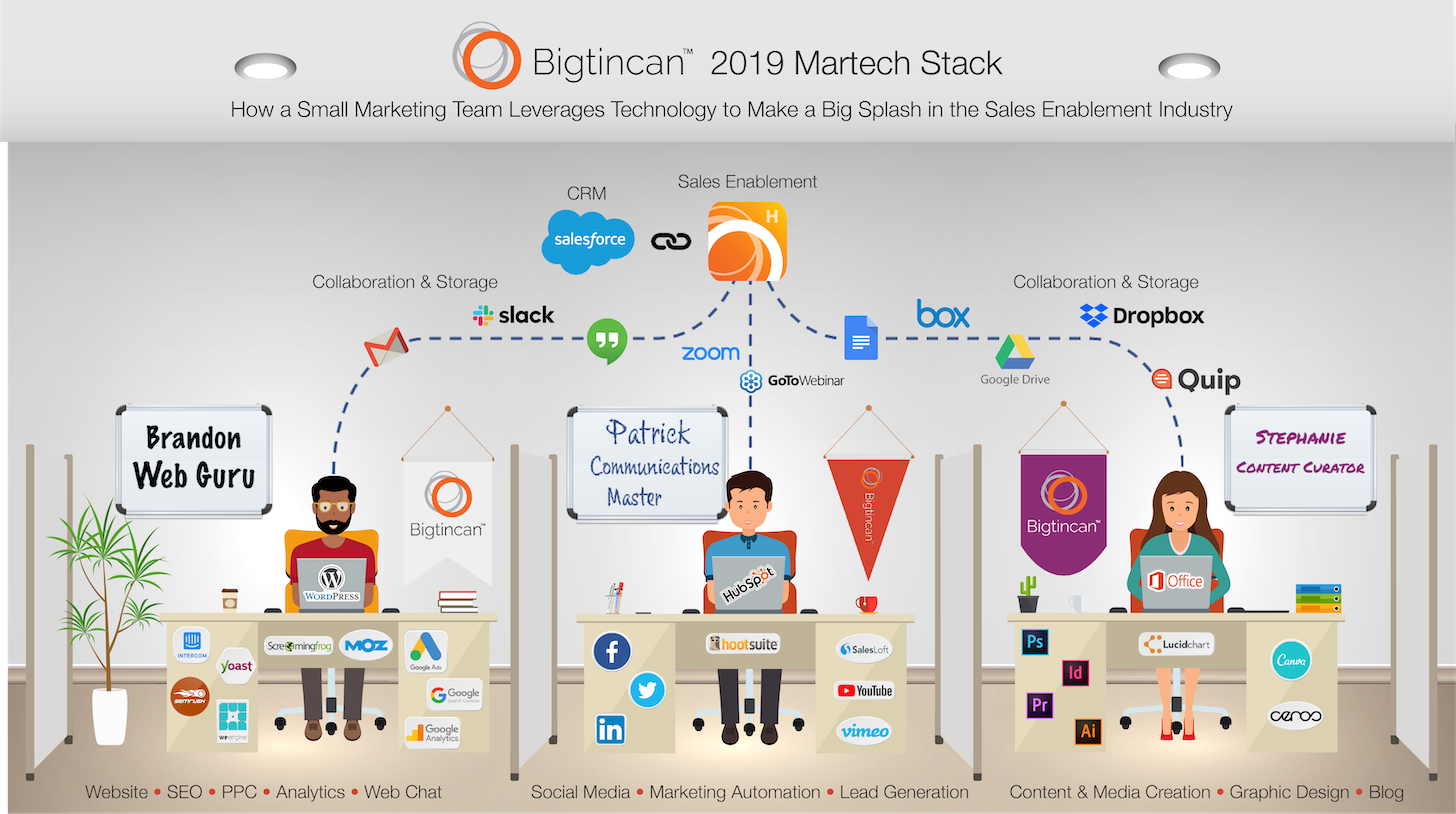

- Iterative Improvement: Treat your AI marketing stack as a living system. Be prepared to adjust strategies, swap tools, or refine internal processes as needed to maintain transparency and trust.
