In today’s AI-first search landscape, simply stuffing keywords won’t cut it. Your business needs a robust technical SEO foundation to ensure search engines, powered by advanced AI, can actually find, understand, and value your content. This guide cuts through the noise, showing you exactly where to focus your limited resources to make your site AI-ready and drive tangible results.

We’ll prioritize the technical elements that deliver the most impact for small to mid-sized teams, helping you make smart trade-offs and avoid common pitfalls that waste time and budget.

Why Technical SEO Matters More Now

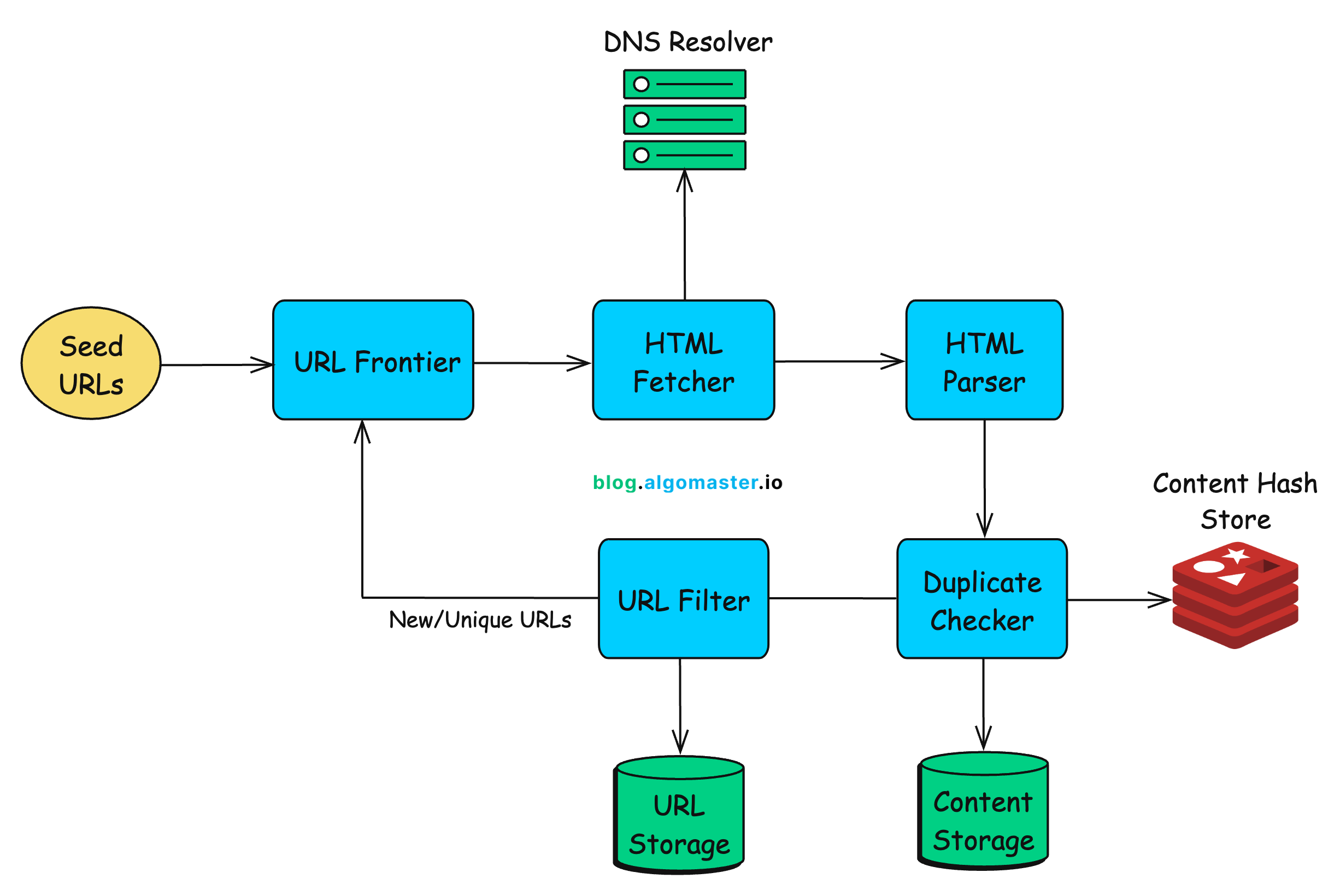

The shift towards AI-powered search, exemplified by systems like Google’s Search Generative Experience (since rolled out as AI Overviews), fundamentally changes how content is evaluated. These systems prioritize understanding the meaning and context of your content, not just keyword matches. If your site has technical barriers, even the most brilliant content can remain invisible.

Think of technical SEO as the plumbing for your website. Without proper pipes and drainage, even the freshest water (your content) can’t flow where it needs to go. For AI search engines, this means ensuring your site is:

- Crawlable: Can search engine bots easily discover all your important pages?

- Indexable: Can those pages be added to the search engine’s database?

- Interpretable: Can AI systems accurately understand what your content is about, its purpose, and its value?

Ignoring these foundational elements means your competitors, even with less “perfect” content, might outrank you simply because their sites are easier for AI to process.

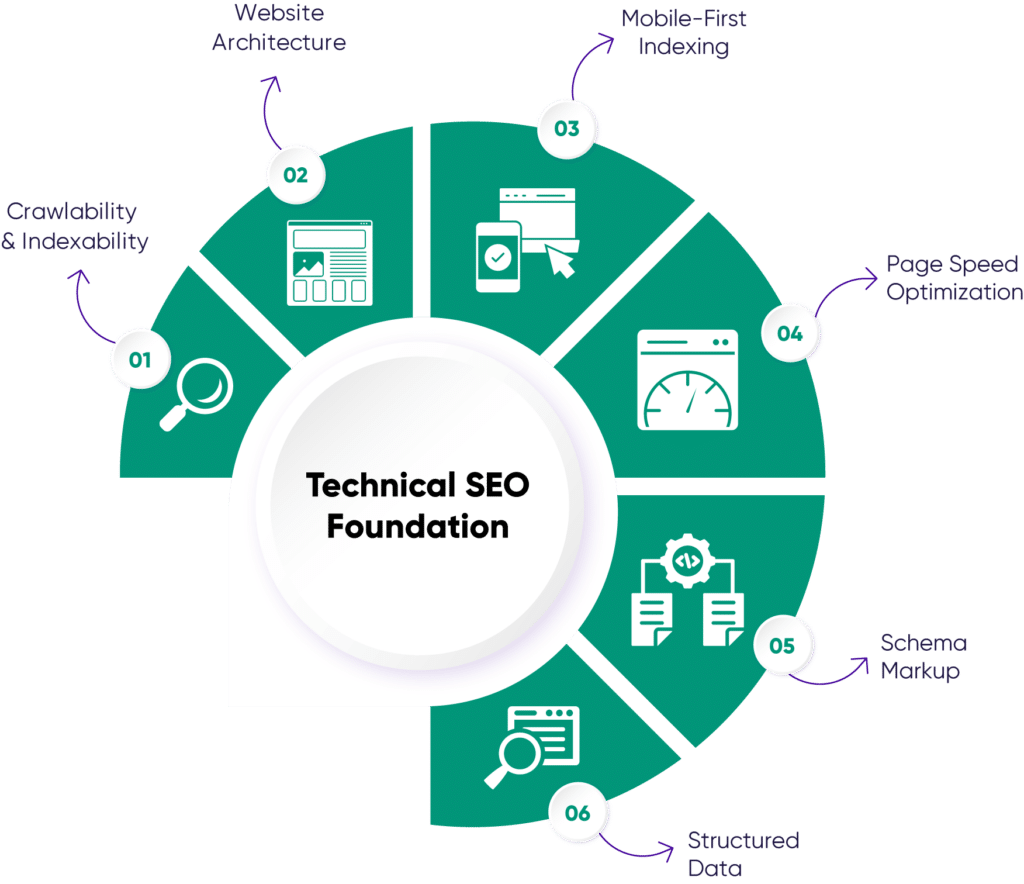

Prioritizing Core Technical Elements

For SMBs, every hour and dollar counts. Focus on these high-impact areas first:

Crawlability and Indexability: The Absolute Foundation

If search engines can’t find and index your pages, nothing else matters. This is non-negotiable. Start here.

- Robots.txt: Ensure you’re not accidentally blocking important pages. Review this file regularly.

- XML Sitemaps: Submit a clean, up-to-date sitemap to Google Search Console. This guides crawlers to your key content.

- Canonical Tags: Prevent duplicate content issues by clearly indicating the preferred version of a page. This is crucial for e-commerce sites with product variations.

- Broken Links: Both internal and external broken links hinder crawling and user experience. Prioritize fixing internal broken links first.
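If you want to spot-check the first two items without a paid crawler, Python’s standard library can help. The sketch below is a minimal first pass, not a full audit. It assumes you paste in your robots.txt contents and a hand-picked list of important URLs (the example.com addresses and rules are hypothetical), flags any URL those rules would block for Googlebot, and builds a bare-bones sitemap from the URLs that remain crawlable. Note that urllib.robotparser uses simple prefix matching and does not understand Google’s * and $ wildcards, so confirm anything surprising in Search Console.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents; paste in your live file instead.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /products/
"""

# Hypothetical list of pages you expect search engines to crawl.
IMPORTANT_URLS = [
    "https://www.example.com/",
    "https://www.example.com/products/blue-widget",
    "https://www.example.com/services/installation",
]

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def blocked_urls(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs that these robots.txt rules would block for `agent`.

    urllib.robotparser does prefix matching (first matching rule wins)
    and does not support Google's `*` and `$` wildcards.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


def build_sitemap(urls: list[str]) -> str:
    """Build a minimal XML sitemap containing only <loc> entries."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    blocked = blocked_urls(ROBOTS_TXT, IMPORTANT_URLS)
    print("Blocked by robots.txt:", blocked)
    print(build_sitemap([u for u in IMPORTANT_URLS if u not in blocked]))
```

With the sample rules, the product page is reported as blocked, which is exactly the kind of accidental Disallow the checklist warns about.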

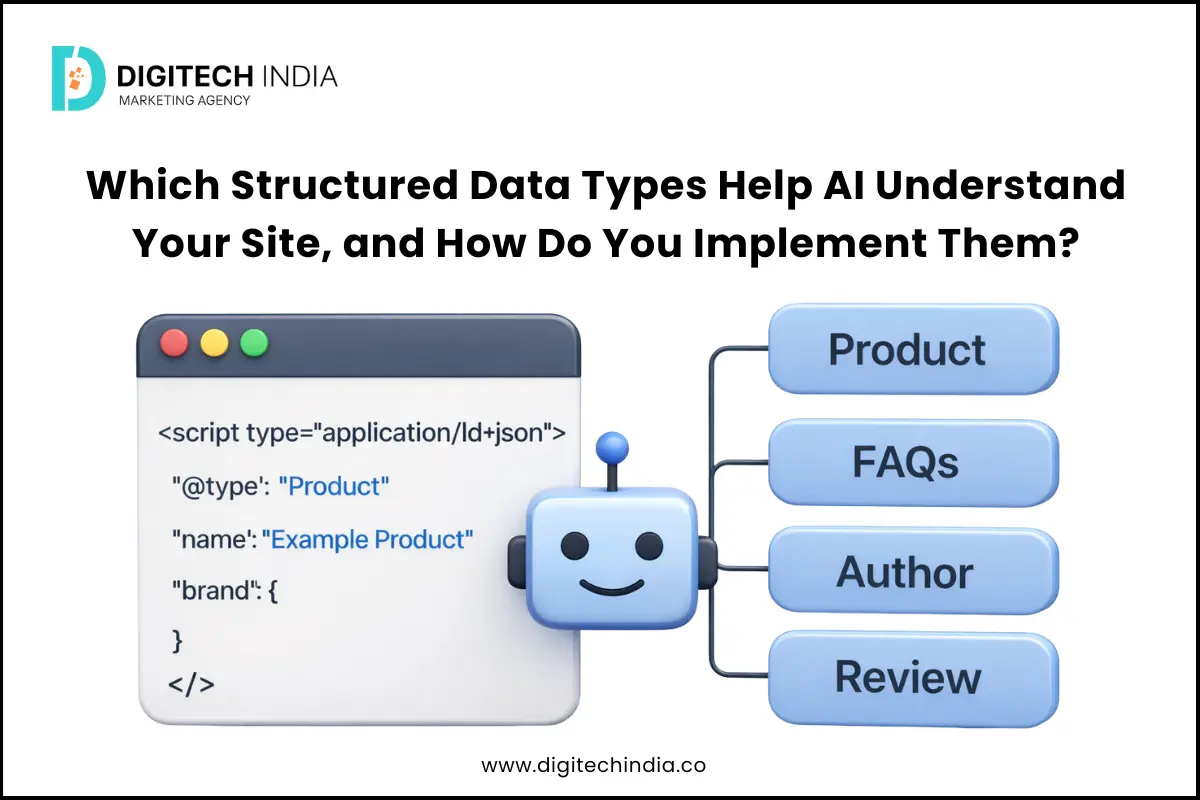

Structured Data (Schema Markup): Speaking AI’s Language

Structured data is how you explicitly tell search engines what your content means. It’s like providing a glossary for your website. For AI, this is invaluable for accurate interpretation and can lead to rich results (e.g., star ratings and product prices shown directly in search results; since 2023, Google shows FAQ rich results only for a limited set of authoritative sites).

- Prioritize High-Value Pages: Start with your most important pages: product pages (Product schema), service pages (Service schema), local business information (LocalBusiness schema), and FAQs (FAQPage schema).

- Use JSON-LD: This is the recommended format and is easiest to implement.

- Test Your Markup: Use Google’s Rich Results Test to validate your schema implementation.
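To make the JSON-LD advice concrete, here is a minimal sketch that renders Product markup for a single product page. The product details are hypothetical, and the fields shown (name, description, sku, and an Offer with price, priceCurrency, and availability) are only a small subset of the schema.org Product vocabulary. Check Google’s product structured data documentation for required and recommended properties before relying on it.

```python
import json


def product_jsonld(name: str, description: str, sku: str,
                   price: str, currency: str, url: str) -> str:
    """Render schema.org Product markup as a JSON-LD <script> tag.

    Only a small subset of Product/Offer properties is included.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "url": url,
            "price": price,
            "priceCurrency": currency,  # ISO 4217 code, e.g. "USD"
            # Hard-coded for the sketch; pull real stock status from your catalog.
            "availability": "https://schema.org/InStock",
        },
    }
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2)
            + "\n</script>")


if __name__ == "__main__":
    # Hypothetical product details.
    print(product_jsonld(
        name="Blue Widget",
        description="A sturdy blue widget for everyday use.",
        sku="BW-001",
        price="19.99",
        currency="USD",
        url="https://www.example.com/products/blue-widget",
    ))
```

Paste the resulting script tag into the page’s HTML (or let your CMS template generate it per product) and run the page through the Rich Results Test.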

Page Experience (Core Web Vitals): User Signals for AI

AI-first search engines still weigh user experience alongside relevance. A fast, stable, and mobile-friendly site signals quality, and Google’s Core Web Vitals (CWV) are the measurable metrics here.

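- Mobile-First: Assume your site is primarily accessed on mobile, and optimize for it. Google predominantly uses the mobile version of a page for indexing and ranking (mobile-first indexing).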

- Largest Contentful Paint (LCP): Focus on fast loading of the main content element. Optimize images, reduce server response time.

- Interaction to Next Paint (INP): Ensure your site responds quickly to user interactions. Minimize long-running JavaScript that blocks the main thread.

- Cumulative Layout Shift (CLS): Prevent unexpected layout shifts that frustrate users. Specify image dimensions, avoid injecting content above existing elements.

Use Google Search Console’s Core Web Vitals report to identify and prioritize issues. Addressing “poor” URLs should be a top priority.
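The “good / needs improvement / poor” buckets come from fixed thresholds, so triage is easy to script. This is a minimal sketch using Google’s published thresholds (LCP 2.5 s and 4 s, INP 200 ms and 500 ms, CLS 0.1 and 0.25, assessed at the 75th percentile of page loads). The per-URL field data is hypothetical, and labeling each page by its worst metric is our simplifying assumption, not a description of any tool’s internals.

```python
# Google's Core Web Vitals thresholds: a value is "good" if <= the first
# number, "poor" if > the second, and "needs improvement" in between.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}

# Lower rank sorts first, so "poor" pages rise to the top.
RANK = {"poor": 0, "needs improvement": 1, "good": 2}


def classify(metric: str, value: float) -> str:
    """Bucket one metric value as good / needs improvement / poor."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


def page_status(metrics: dict[str, float]) -> str:
    """Label a page by its worst-performing metric."""
    return min((classify(m, v) for m, v in metrics.items()), key=RANK.__getitem__)


def triage(pages: dict[str, dict[str, float]]) -> list[tuple[str, str]]:
    """Return (url, status) pairs ordered poor -> needs improvement -> good."""
    statuses = [(url, page_status(m)) for url, m in pages.items()]
    return sorted(statuses, key=lambda item: RANK[item[1]])


if __name__ == "__main__":
    # Hypothetical 75th-percentile field data per URL.
    pages = {
        "/": {"LCP": 1.8, "INP": 120, "CLS": 0.02},
        "/products/blue-widget": {"LCP": 5.1, "INP": 150, "CLS": 0.05},
        "/blog/widget-guide": {"LCP": 3.0, "INP": 180, "CLS": 0.08},
    }
    for url, status in triage(pages):
        print(f"{status:>17}  {url}")
```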

What often gets overlooked, particularly in smaller teams, is the dynamic nature of these technical elements. Setting up robots.txt, XML sitemaps, or canonical tags is often treated as a one-time task, but websites are living entities. New content, CMS updates, plugin installations, or even changes in navigation can silently introduce crawl blocks, broken links, or duplicate content issues. The hidden cost isn’t just a missed opportunity; it’s a slow, insidious erosion of your site’s index coverage that can take months to detect and even longer to recover from. The result is content that gets published and promoted, only to languish in obscurity because search engines never properly saw it.
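One low-effort guard against this kind of silent drift is to compare two crawl snapshots, say before and after a CMS or plugin update, and flag pages whose indexability regressed. The sketch assumes you can export, per URL, the HTTP status code, whether a noindex directive is present, and the declared canonical URL from whatever crawler you use; the PageState structure and the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PageState:
    """Hypothetical per-URL fields exported from a site crawl."""
    status_code: int
    noindex: bool
    canonical: str  # canonical URL declared on the page


def find_regressions(before: dict[str, PageState],
                     after: dict[str, PageState]) -> list[str]:
    """List indexability regressions between two crawl snapshots."""
    issues = []
    for url, old in before.items():
        new = after.get(url)
        if new is None:
            issues.append(f"{url}: missing from latest crawl")
            continue
        if old.status_code == 200 and new.status_code != 200:
            issues.append(f"{url}: status {old.status_code} -> {new.status_code}")
        if not old.noindex and new.noindex:
            issues.append(f"{url}: noindex added")
        if new.canonical != old.canonical:
            issues.append(f"{url}: canonical changed to {new.canonical}")
    return issues


if __name__ == "__main__":
    before = {"/pricing": PageState(200, False, "/pricing"),
              "/blog/guide": PageState(200, False, "/blog/guide")}
    after = {"/pricing": PageState(200, True, "/pricing"),
             "/blog/guide": PageState(404, False, "/blog/guide")}
    for issue in find_regressions(before, after):
        print(issue)
```

Running a check like this after every significant site change turns a months-long silent erosion into a same-day alert.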

Similarly, with structured data, conventional advice often suggests implementing as much as possible. In practice, this can lead to over-engineering or misapplication. Forcing generic schema onto unique content, or implementing every conceivable schema type regardless of relevance, can backfire. Search engines can detect irrelevant or misleading markup, and Google can issue a manual action against structured data that violates its guidelines, costing the affected pages their rich result eligibility. Beyond lost rich results, the non-obvious failure mode is sending confusing signals that lead search engines to distrust your schema entirely or, worse, misinterpret your content’s core purpose. This can inadvertently dilute your authority for key topics.

Regarding Page Experience, a common pitfall is chasing a perfect Core Web Vitals score across every single page. While continuous improvement is vital, for SMBs with limited resources, obsessing over marginal gains on already “good” URLs can be a significant drain. It’s easy to get caught in the “whack-a-mole” game of performance optimization, where fixing one minor issue uncovers another, or a new marketing feature introduces a fresh performance hit. Instead, prioritize addressing pages flagged as “poor” or “needs improvement” in Google Search Console, focusing on the most impactful changes that genuinely improve user experience and unlock broader visibility. Trying to achieve an immaculate score on every page often diverts critical development time from higher-leverage activities, leading to developer frustration and a perception of endless, unrewarding work.

What to Deprioritize (or Skip Entirely) Today

With limited resources, knowing what not to do is as important as knowing what to do. For most small to mid-sized businesses, you should deprioritize or skip:

- Obsessive Micro-Optimizations: Spending hours shaving milliseconds off a non-critical page’s load time when fundamental issues like broken internal links, missing schema on key product pages, or widespread crawl errors exist. Focus on the biggest levers first.

- Chasing Every Niche Keyword Variation: While long-tail keywords have their place, AI understands semantic relationships. Instead of creating dozens of slightly different pages for hyper-specific keyword variations, focus on creating one comprehensive, high-quality page that naturally covers a broader topic and its related concepts. This is more efficient and effective for AI understanding.

- Investing Heavily in Unproven “AI SEO” Tools: The market is flooded with new tools claiming to leverage AI for SEO. Many are unproven, offer marginal gains, or are simply repackaged existing functionalities. Stick to established, reliable tools like Google Search Console, Ahrefs, or Semrush for your core analysis.

Your time is better spent fixing foundational issues and improving content quality than chasing marginal gains from unverified tactics or tools.

The temptation to chase every marginal gain or shiny new tool is strong, especially when the competitive landscape feels intense. However, succumbing to this often creates more problems than it solves, particularly for lean teams.

For instance, the constant pursuit of hyper-specific keyword variations, while seemingly thorough, quickly leads to content bloat. This isn’t just inefficient; it creates internal competition between your own pages for similar queries, confusing search engines and diluting the authority you could build with a single, robust resource. More critically, it exhausts content teams, forcing them to produce volume over true value, leading to burnout and a decline in overall content quality over time. The initial ‘efficiency’ gain from AI tools suggesting these variations can quickly turn into a content management nightmare.
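You can check whether your own pages are already competing by looking for queries where several of your URLs earn impressions. This sketch assumes rows that pair a query with a page and its impressions, for example from the Search Console API’s search analytics query with both the query and page dimensions; the sample rows and the min_impressions cutoff of 10 are hypothetical and worth tuning to your traffic.

```python
from collections import defaultdict


def cannibalized_queries(rows, min_impressions=10):
    """Find queries for which more than one of your pages gets impressions.

    rows: iterable of (query, page, impressions) tuples.
    min_impressions: hypothetical noise floor; pages below it are ignored.
    """
    pages_by_query = defaultdict(set)
    for query, page, impressions in rows:
        if impressions >= min_impressions:
            pages_by_query[query].add(page)
    # Keep only queries split across two or more pages.
    return {q: sorted(pages) for q, pages in pages_by_query.items() if len(pages) > 1}


if __name__ == "__main__":
    rows = [
        ("blue widget price", "/widgets/blue", 120),
        ("blue widget price", "/widgets/blue-cheap", 45),
        ("blue widget price", "/blog/widgets", 3),
        ("widget installation", "/services/installation", 80),
    ]
    print(cannibalized_queries(rows))
```

Queries that show up here are candidates for consolidating into a single comprehensive page rather than writing yet another variation.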

Similarly, the focus on micro-optimizations, while technically sound in isolation, often masks a critical opportunity cost. Every hour spent shaving milliseconds off a page that already loads reasonably well is an hour not spent on more impactful technical debt, improving core site architecture, or enhancing user experience features that genuinely drive conversions. This misallocation of engineering or marketing resources can delay critical projects, leading to a cumulative drag on overall site performance and business growth, even as the team feels productive on minor tasks.

And regarding the proliferation of ‘AI SEO’ tools, the hidden cost isn’t just the subscription fee for unproven tech. It’s the significant time and mental energy spent on evaluating, integrating, learning, and then often abandoning these tools. Teams feel immense pressure to experiment, leading to cycles of trial-and-error that divert focus from established, high-impact strategies. This constant churn of tools can erode team morale and trust in new initiatives, making it harder to adopt truly beneficial innovations down the line.

Actionable Steps for SMBs

Here’s a practical roadmap to get started:

- Audit Your Foundation: Start with Google Search Console. Check the “Pages” indexing report (formerly “Coverage”) for indexing issues, “Sitemaps” for submission status, and “Core Web Vitals” for performance. Address critical errors first.

- Implement Essential Schema: Identify your top 5-10 most important page types (e.g., product, service, local business, blog post). Use a schema generator or plugin to add JSON-LD markup. Validate with Google’s Rich Results Test.

- Optimize for Mobile and Speed: Use PageSpeed Insights to get actionable recommendations. Focus on image optimization, server response time, and reducing render-blocking resources.

- Refine Internal Linking: Ensure your most important pages are well-linked from other relevant, authoritative pages on your site. This helps both users and AI discover and understand your content hierarchy.
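For the internal linking step, a quick way to find under-supported pages is to count how many distinct pages link to each important URL. This sketch assumes you have (source, target) internal link pairs from a site crawl; the sample links and the min_inbound threshold of 3 are hypothetical starting points, not an established rule.

```python
from collections import Counter


def weakly_linked(links, important_pages, min_inbound=3):
    """Return important pages with fewer than `min_inbound` linking pages.

    links: iterable of (source, target) internal link pairs from a crawl.
    """
    inbound = Counter()
    for source, target in set(links):  # count each source->target pair once
        if source != target:           # ignore pages linking to themselves
            inbound[target] += 1
    return {page: inbound[page] for page in important_pages
            if inbound[page] < min_inbound}


if __name__ == "__main__":
    links = [
        ("/", "/products"), ("/", "/services"),
        ("/blog/a", "/products"), ("/blog/b", "/products"),
        ("/blog/a", "/products"),  # duplicate link on the same page
        ("/services", "/services"),  # self-link
    ]
    print(weakly_linked(links, ["/products", "/services", "/pricing"]))
```

A page with zero inbound links (like /pricing in the sample) is an orphan that crawlers can only reach through the sitemap, so it is usually the first to fix.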

Building for Future Search Understanding

The future of search is about understanding, not just matching. By focusing on technical SEO, you’re not just optimizing for today’s algorithms; you’re building a robust, interpretable foundation for whatever AI advancements come next. Prioritize clarity, authority, and user value, and let technical SEO ensure your efforts are seen and understood.
