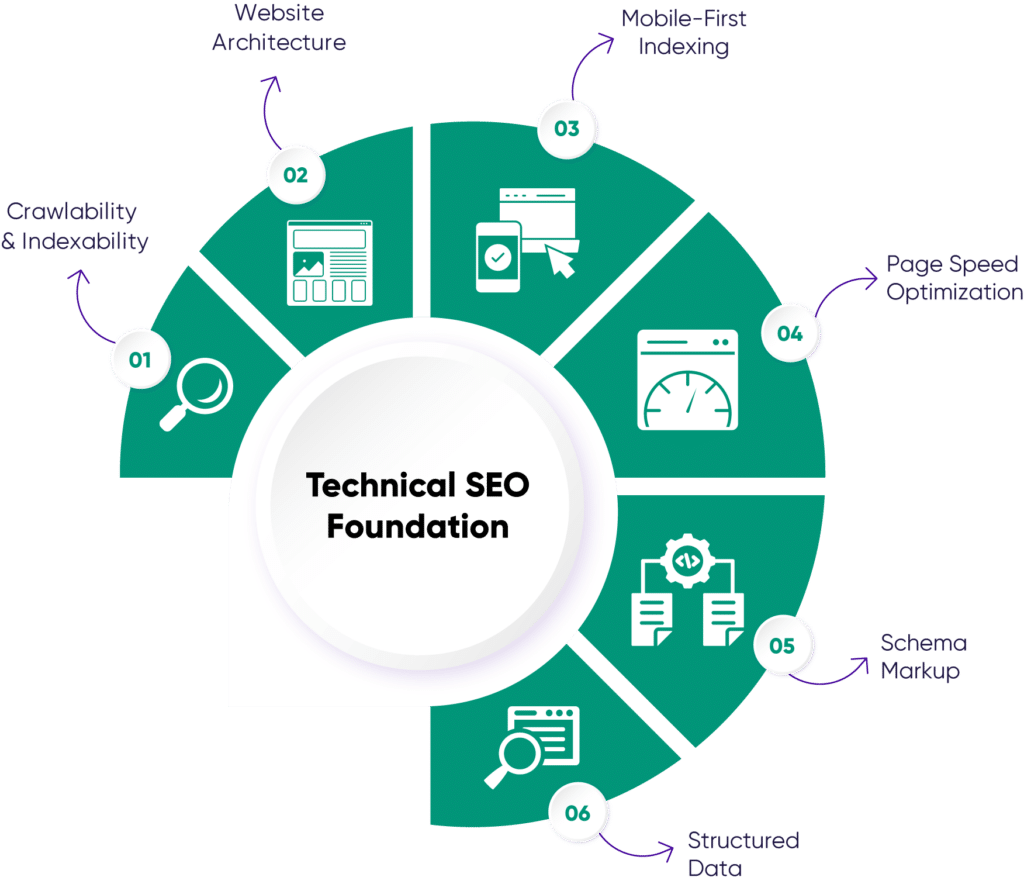

The Foundation of Organic Success: Why Technical SEO Matters

In the dynamic landscape of search engine optimization, technical SEO remains the bedrock upon which all other SEO efforts are built. Without a technically sound website, even the most compelling content or robust backlink profile may struggle to achieve optimal visibility. Search engines like Google prioritize websites that are easily crawlable, indexable, fast, and secure, directly impacting user experience and, consequently, search rankings.

For businesses aiming to grow and optimize campaigns, understanding and addressing technical SEO issues is not merely a best practice; it’s a critical operational necessity. As algorithms evolve and user expectations for seamless online experiences intensify, neglecting the technical health of your site can lead to significant drops in organic traffic, missed revenue opportunities, and a diminished competitive edge. Proactive identification and resolution of these issues are paramount.

Crawlability & Indexability Issues: Guiding Search Engines

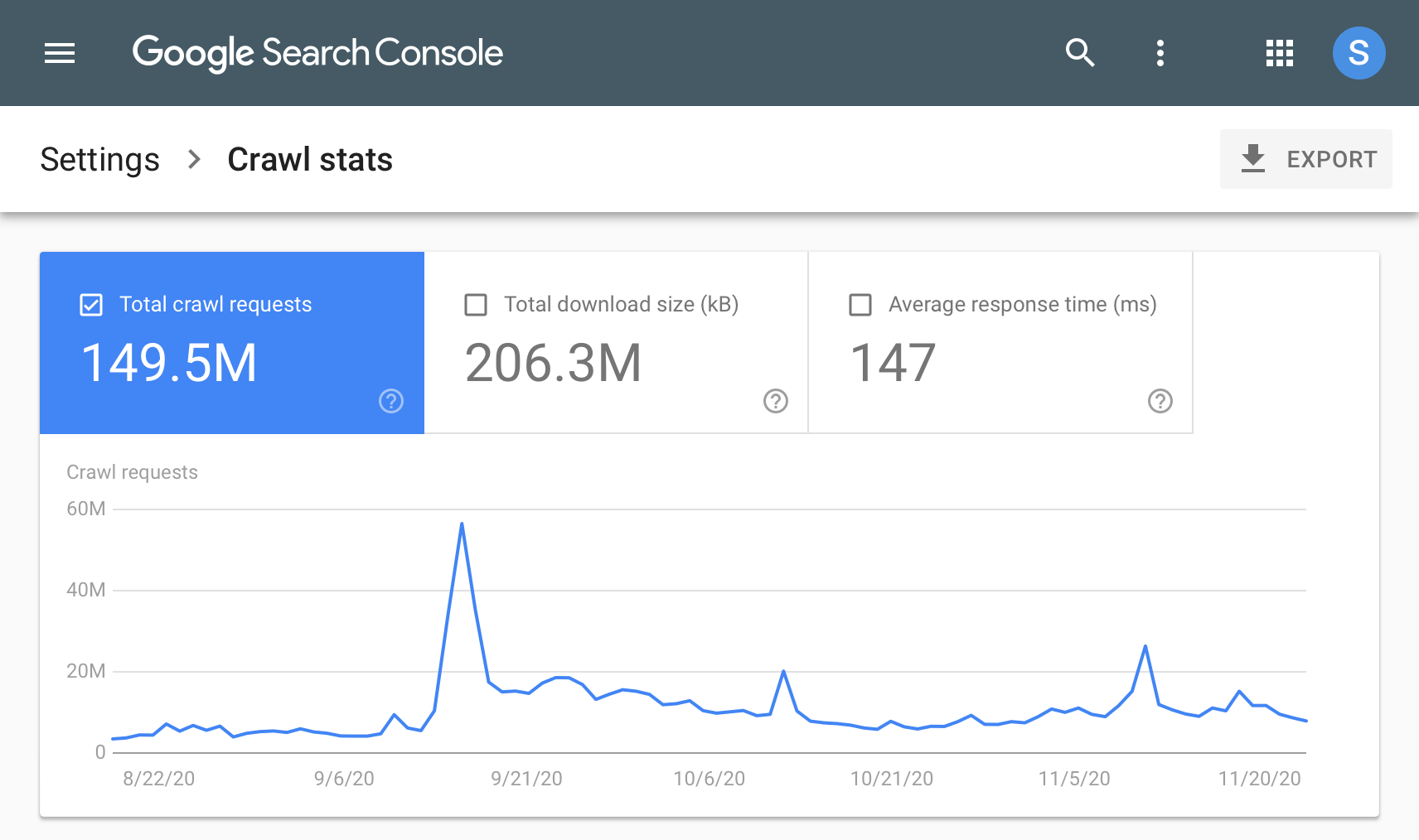

One of the most fundamental technical SEO challenges is ensuring that search engine bots can efficiently crawl and index your website’s content. Misconfigured robots.txt files, ‘noindex’ tags on important pages, broken internal links, and HTTP errors (4xx client errors and 5xx server errors) can all prevent search engines from discovering pages and adding them to their index. If a page isn’t indexed, it cannot rank, regardless of its quality.

To diagnose these problems, regularly audit your robots.txt file to ensure it’s not inadvertently blocking critical sections. Use Google Search Console (GSC) to monitor the ‘Crawl Stats’ and ‘Page indexing’ (formerly ‘Index Coverage’) reports, which highlight errors and warnings. Ensure your XML sitemap is up to date, submitted to GSC, and accurately reflects all pages you want indexed. Implementing 301 redirects for moved content and fixing broken links are also crucial for preserving link equity and avoiding wasted crawl budget.
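
As a minimal sketch of the robots.txt audit described above, the following uses Python’s standard `urllib.robotparser` to check whether a list of important URLs is blocked for a given crawler. The sample rules, the URLs, and the `blocked_urls` helper are hypothetical. Note that the standard-library parser follows the original robots.txt conventions and does not implement Google’s wildcard extensions, so treat its answers as a first pass rather than a faithful emulation of Googlebot.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules: the /blog/ line is the kind of accidental block to look for.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /admin/
Disallow: /blog/
"""

# Hypothetical pages that should be crawlable.
IMPORTANT_URLS = [
    "https://example.com/",
    "https://example.com/blog/technical-seo",
    "https://example.com/products/widget",
]

print(blocked_urls(SAMPLE_ROBOTS, IMPORTANT_URLS))
```

Running a check like this whenever robots.txt changes can catch an accidental `Disallow` before it affects crawling.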

Site Speed & Core Web Vitals: Enhancing User Experience

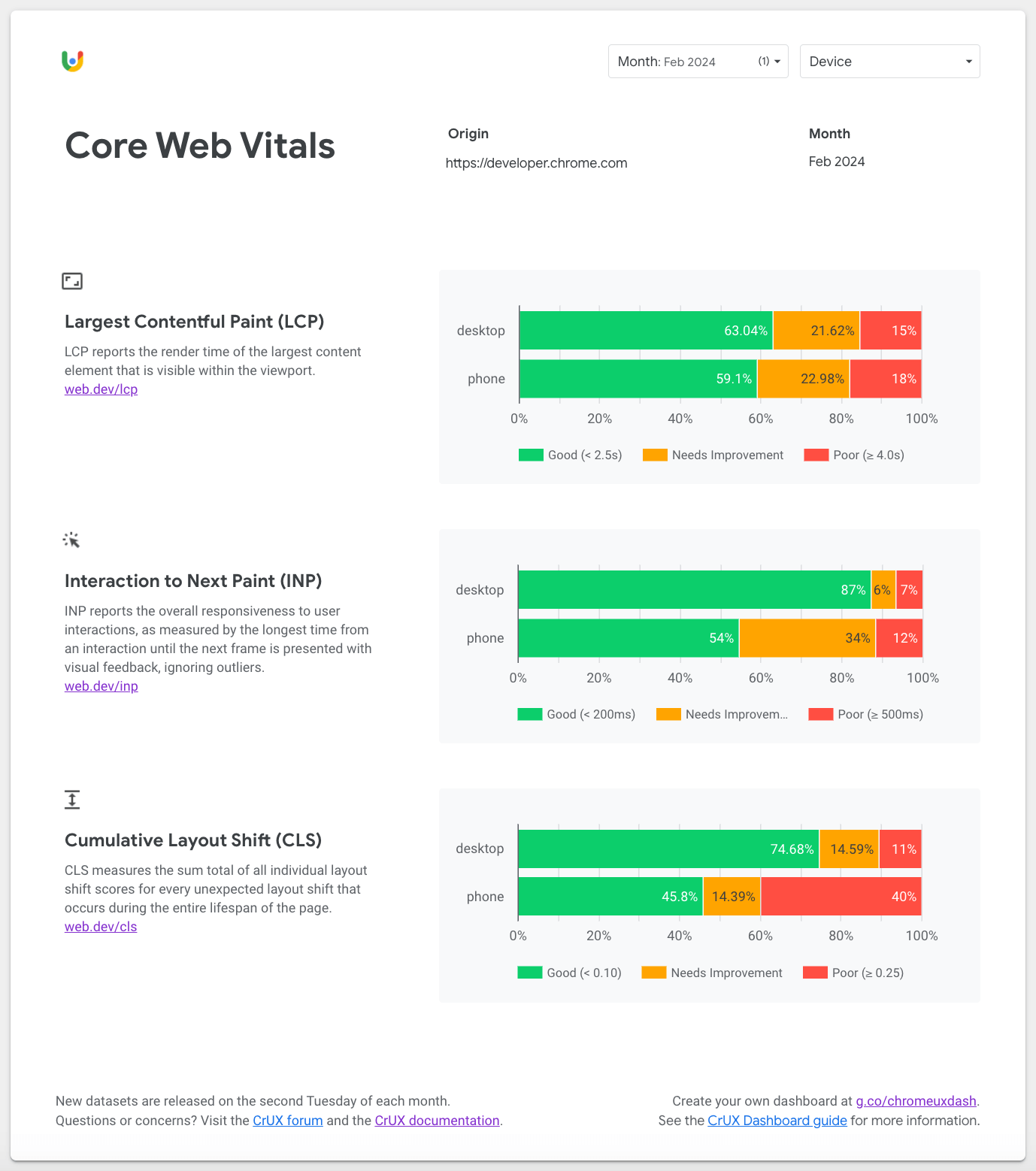

Website loading speed has been a ranking signal for years, and with the continued emphasis on Core Web Vitals (CWV), its importance has only grown. The CWV metrics are Largest Contentful Paint (LCP), Interaction to Next Paint (INP, which replaced First Input Delay as a Core Web Vital in March 2024), and Cumulative Layout Shift (CLS). They measure loading performance, responsiveness, and visual stability, respectively. Poor scores in these areas can lead to higher bounce rates and lower rankings.
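
To make these metrics concrete, the sketch below rates measured values against the thresholds Google publishes for LCP, CLS, and INP (Interaction to Next Paint, Google’s current responsiveness metric). The dictionary and function names are hypothetical, and the sample measurements are invented for illustration.

```python
# Google's published thresholds: (upper bound for "good", upper bound for "needs improvement").
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}


def rate_metric(name: str, value: float) -> str:
    """Classify one metric value as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[name]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


def rate_page(metrics: dict[str, float]) -> dict[str, str]:
    """Classify every metric reported for a page."""
    return {name: rate_metric(name, value) for name, value in metrics.items()}


# Invented sample measurements for a single page.
print(rate_page({"lcp_seconds": 3.1, "inp_ms": 180, "cls": 0.3}))
```

Google assesses these metrics at the 75th percentile of real-user page loads, so field data is the appropriate input for a check like this; a single lab run is only a rough proxy.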

Addressing site speed involves a multi-faceted approach. Optimize images by compressing them and using modern formats like WebP. Implement lazy loading for images and videos to defer loading off-screen content. Leverage browser caching, minimize CSS and JavaScript files, and ensure your server response time is swift. Content Delivery Networks (CDNs) can also significantly improve global loading speeds by serving content from geographically closer servers. Regularly test your site with tools like PageSpeed Insights and GTmetrix.
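
One of these checks can be sketched in a few lines: the example below scans a page’s HTML with the standard `html.parser` module and lists images that are not lazy-loaded or that lack explicit `width` and `height` attributes (missing dimensions are a common cause of layout shift). The class name, function name, and sample markup are hypothetical. Above-the-fold images, such as a hero image, should usually not be lazy-loaded, so the findings are candidates to review, not a list of defects.

```python
from html.parser import HTMLParser


class ImageAudit(HTMLParser):
    """Collect <img> tags that may hurt loading performance or visual stability."""

    def __init__(self) -> None:
        super().__init__()
        self.findings: list[tuple[str, list[str]]] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        problems = []
        if (attr_map.get("loading") or "").lower() != "lazy":
            problems.append("not lazy-loaded")
        if "width" not in attr_map or "height" not in attr_map:
            problems.append("missing width/height")
        if problems:
            self.findings.append((attr_map.get("src") or "(no src)", problems))


def audit_images(html: str) -> list[tuple[str, list[str]]]:
    """Return (src, problems) pairs for every image worth reviewing."""
    audit = ImageAudit()
    audit.feed(html)
    return audit.findings


# Hypothetical markup: a hero image, a well-formed image, and one without dimensions.
SAMPLE_HTML = (
    '<img src="/hero.jpg" width="1200" height="600">'
    '<img src="/chart.webp" loading="lazy" width="800" height="400">'
    '<img src="/team.png" loading="lazy">'
)

for src, problems in audit_images(SAMPLE_HTML):
    print(src, problems)
```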

Mobile-Friendliness & Responsive Design: The Mobile-First Imperative

With mobile-first indexing being the standard for years, having a mobile-friendly website is non-negotiable. Because Google primarily uses the mobile version of a page for indexing and ranking, a site that doesn’t offer a seamless experience on smartphones and tablets can lose visibility even in desktop searches. Common issues include non-responsive designs, tiny text, tap targets that are too small or too close together, and content that doesn’t fit the screen.

The primary fix is implementing a responsive design that adapts fluidly to various screen sizes. Ensure your viewport meta tag is correctly configured. Google retired its Mobile-Friendly Test tool and the ‘Mobile Usability’ report in GSC in December 2023, so test your pages with Lighthouse or the device emulation in Chrome DevTools instead. Prioritize touch-friendly navigation and ensure all content and functionality is easily accessible on smaller screens.
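
A correctly configured viewport tag typically looks like `<meta name="viewport" content="width=device-width, initial-scale=1">`. The sketch below, using only the standard library, checks whether a page declares one. The class and function names are hypothetical, and the check covers only the `width=device-width` directive, not the rest of a responsive layout.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Record the content attribute of the page's viewport meta tag, if any."""

    def __init__(self) -> None:
        super().__init__()
        self.content = None

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "meta" and (attr_map.get("name") or "").lower() == "viewport":
            self.content = attr_map.get("content") or ""


def has_responsive_viewport(html: str) -> bool:
    """Return True if the page declares width=device-width in its viewport tag."""
    finder = ViewportFinder()
    finder.feed(html)
    if finder.content is None:
        return False
    # Directives are comma-separated; normalize case and internal spaces.
    directives = {part.strip().lower().replace(" ", "") for part in finder.content.split(",")}
    return "width=device-width" in directives


print(has_responsive_viewport(
    '<head><meta name="viewport" content="width=device-width, initial-scale=1"></head>'
))
```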

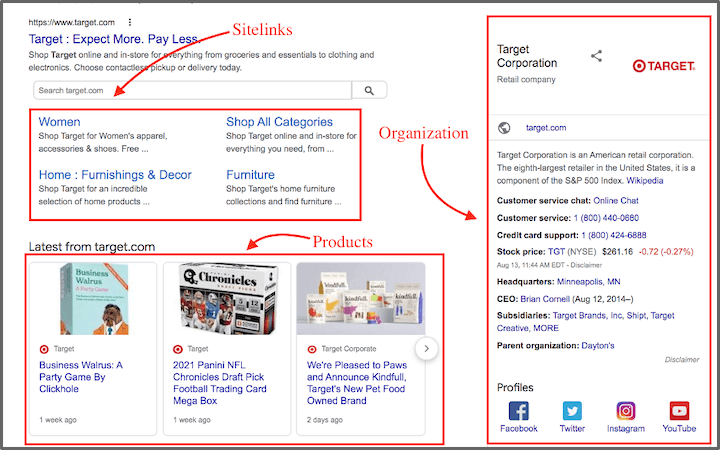

Structured Data & Schema Markup: Boosting Rich Results

Structured data, implemented via Schema.org markup, helps search engines better understand the context and meaning of your content. This can lead to rich results (e.g., star ratings, product prices, event dates) in search engine results pages (SERPs), which significantly enhance visibility and click-through rates. Common issues include incorrect implementation, missing required properties, or invalid JSON-LD syntax.

To resolve these, identify relevant schema types for your content (e.g., Article, Product, LocalBusiness, FAQPage). Implement the markup using JSON-LD, which is Google’s preferred format, directly within the HTML head or body. Use Google’s Rich Results Test tool to validate your markup and preview how it might appear in SERPs. Regularly review GSC’s ‘Enhancements’ reports for any structured data errors or warnings.
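
As an illustration, the sketch below builds a JSON-LD snippet for an `Article` with Python’s `json` module, which avoids the hand-written syntax errors mentioned above. The function name and sample values are hypothetical, and the property set is a small illustrative subset; consult Google’s documentation for the properties each rich result type requires.

```python
import json


def article_json_ld(headline: str, author_name: str, date_published: str, image_url: str) -> str:
    """Return a <script> element containing Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date
        "image": [image_url],
    }
    payload = json.dumps(data, indent=2)
    # Escape "</" so a value can never close the script element early;
    # "\/" is a valid JSON escape for "/".
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical article details.
print(article_json_ld(
    "Fixing Crawl Errors",
    "Jane Doe",
    "2025-01-15",
    "https://example.com/images/crawl-errors.png",
))
```

The generated element can be placed in the page’s head or body and then validated with the Rich Results Test.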

Duplicate Content & Canonicalization: Consolidating Authority

Duplicate content occurs when identical or near-identical content is accessible at multiple URLs. This can confuse search engines, dilute link equity, and waste crawl budget, as bots spend time crawling redundant pages. Common causes include URL parameters, printer-friendly versions, HTTP/HTTPS versions, or non-www/www versions of your site.

The primary solution is proper canonicalization. Add a `rel="canonical"` link element to each duplicate page, pointing to the preferred (canonical) version. For permanent URL changes, use 301 redirects. Configure your server to consistently serve either the www or non-www version, and ensure all internal links point to the canonical URLs. GSC’s URL Parameters tool was retired in 2022, so handle parameterized URLs with `rel="canonical"` and consistent internal linking.
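
The sketch below shows one way to derive a canonical URL from its variants: it forces HTTPS, maps the www and non-www hosts to a single preferred host, and drops common tracking parameters and fragments. The preferred host, the parameter list, and both function names are assumptions for illustration; which parameters are safe to strip depends on the site.

```python
import html
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that never change page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "utm_term", "utm_content", "gclid", "fbclid"}


def canonical_url(url: str, preferred_host: str = "www.example.com") -> str:
    """Normalize scheme, host, tracking parameters, and fragment."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    bare = preferred_host[4:] if preferred_host.startswith("www.") else preferred_host
    if host in {bare, "www." + bare}:
        host = preferred_host
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
    ]
    return urlunsplit(("https", host, parts.path or "/", urlencode(kept), ""))


def canonical_link_tag(url: str) -> str:
    """Return the <link> element to place in the page's head."""
    return f'<link rel="canonical" href="{html.escape(canonical_url(url), quote=True)}">'


print(canonical_link_tag("http://example.com/shoes?utm_source=news&color=red#reviews"))
```

Parameters that do change the content, such as `color` in the sample URL, are kept here; whether such variants deserve their own canonical URL is a site-specific decision.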

HTTPS & Security: Building Trust and Authority

HTTPS has been a confirmed ranking signal for years, and its importance for user trust and data security cannot be overstated. Pages served over plain HTTP, without an SSL/TLS certificate, are flagged as ‘Not Secure’ by modern browsers, deterring visitors and negatively impacting SEO. Issues often arise during migration from HTTP to HTTPS, such as mixed content warnings or long and broken redirect chains.

Ensure your website has a valid SSL certificate installed and that all traffic is redirected from HTTP to HTTPS using 301 redirects. Verify that all internal links, images, and scripts are loaded over HTTPS to avoid mixed content warnings. Regularly check your site’s security status in GSC and address any reported issues promptly. A secure site is a trustworthy site, both for users and search engines.
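
A mixed content check can be sketched with the standard library: the example below lists subresources (images, scripts, stylesheets, and similar) that an HTTPS page would still load over plain HTTP. The class name, function name, tag list, and sample markup are hypothetical. Ordinary `<a href>` links are deliberately ignored because navigation links do not cause mixed content warnings, and resources referenced from CSS or JavaScript are not covered.

```python
from html.parser import HTMLParser

# Tags whose src attribute triggers a subresource fetch.
SRC_TAGS = {"img", "script", "iframe", "audio", "video", "source"}


class MixedContentFinder(HTMLParser):
    """Collect subresources referenced over plain HTTP."""

    def __init__(self) -> None:
        super().__init__()
        self.insecure: list[tuple[str, str]] = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        url = None
        if tag in SRC_TAGS:
            url = attr_map.get("src")
        elif tag == "link":
            # Only stylesheet links fetch a resource; rel="canonical" and the like do not.
            rel = (attr_map.get("rel") or "").lower().split()
            if "stylesheet" in rel:
                url = attr_map.get("href")
        if url and url.lower().startswith("http://"):
            self.insecure.append((tag, url))


def find_mixed_content(html: str) -> list[tuple[str, str]]:
    """Return (tag, url) pairs for every insecure subresource in the page."""
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure


# Hypothetical page fragment.
SAMPLE_PAGE = (
    '<link rel="stylesheet" href="http://cdn.example.com/a.css">'
    '<img src="https://example.com/a.png">'
    '<script src="http://example.com/app.js"></script>'
    '<a href="http://example.com/">home</a>'
)

print(find_mixed_content(SAMPLE_PAGE))
```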

XML Sitemaps & Hreflang: Comprehensive Indexing & Global Reach

XML sitemaps serve as a roadmap for search engines, listing all the important pages on your site that you want crawled and indexed. An outdated or incomplete sitemap can lead to important pages being missed. For international websites, `hreflang` tags are crucial for indicating the language and geographical targeting of specific pages, preventing duplicate content issues across different language versions.

Regularly generate and submit an accurate XML sitemap to Google Search Console. Ensure it only includes canonical, indexable URLs and is free of broken links. For `hreflang`, implement the annotations in the HTML head, HTTP headers, or XML sitemap, specifying the language and, optionally, the region for each variant, and include an `x-default` entry as a fallback. Every variant must list all the others and itself, because annotations that are not reciprocal may be ignored. GSC’s ‘International Targeting’ report was retired in 2022, so validate your implementation with a third-party `hreflang` checker.
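
As a minimal sketch of the sitemap approach, the following builds an XML sitemap with `xhtml:link` alternates using the standard `xml.etree.ElementTree` module. Each group maps an `hreflang` value to a URL, and every URL in a group is emitted with the full set of alternates so the annotations stay reciprocal. The function name and the sample URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"


def build_sitemap(page_groups: list[dict[str, str]]) -> str:
    """Build a sitemap in which each URL lists every language alternate, itself included."""
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("xhtml", XHTML_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for alternates in page_groups:
        # dict.fromkeys removes duplicates (x-default often repeats a language URL).
        for loc in dict.fromkeys(alternates.values()):
            url_el = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
            ET.SubElement(url_el, f"{{{SITEMAP_NS}}}loc").text = loc
            for hreflang, href in alternates.items():
                ET.SubElement(
                    url_el,
                    f"{{{XHTML_NS}}}link",
                    {"rel": "alternate", "hreflang": hreflang, "href": href},
                )
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical language variants of one page.
GROUPS = [
    {
        "en-us": "https://example.com/en-us/",
        "de-de": "https://example.com/de-de/",
        "x-default": "https://example.com/en-us/",
    }
]

print(build_sitemap(GROUPS))
```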

Leveraging AI for Enhanced Technical SEO Audits

In 2025, AI-powered tools are increasingly augmenting technical SEO efforts, making audits faster and more thorough. These platforms can rapidly analyze large datasets such as server logs and crawl exports, identify complex crawl patterns, pinpoint subtle site speed bottlenecks, and even suggest structured data implementations. They go beyond basic checks, and some flag emerging problems, such as a gradual rise in server response times, before they impact performance.

While AI doesn’t replace human expertise, it empowers SEO professionals to focus on strategic problem-solving rather than manual data collection. AI tools can automate routine checks, prioritize critical fixes based on potential impact, and provide actionable recommendations. Integrating these advanced analytics into your workflow allows for more proactive and precise technical SEO management, ensuring your site remains optimized for current and future search algorithms.

Proactive Technical SEO for Sustained Digital Growth

Technical SEO is not a one-time task but an ongoing process of monitoring, adapting, and refining. As websites grow, technologies evolve, and search engine algorithms update, new technical challenges will inevitably emerge. A proactive approach, characterized by regular audits, continuous monitoring, and swift issue resolution, is essential for sustained organic visibility and business growth.

By integrating technical SEO checks into your regular website maintenance schedule and staying informed about the latest search engine guidelines, businesses can ensure their digital foundation remains strong. This commitment to technical excellence not only safeguards your current rankings but also positions your website for future success, allowing your content and marketing strategies to truly shine and drive revenue.
