Introduction

As search engines and AI models become more sophisticated, simply stuffing keywords no longer cuts it. Your website needs to be understood, not just crawled. This guide provides a pragmatic roadmap for small to mid-sized businesses to make their websites ‘AI-ready’ through focused technical SEO. You’ll learn what to prioritize to ensure your content is clearly interpreted by machines, leading to better visibility and more effective engagement.

We’ll cut through the noise, focusing on actionable steps that deliver real impact for teams operating with limited resources. The goal is to equip you with the knowledge to make informed decisions, ensuring your website communicates effectively with the evolving digital landscape.

Why “AI-Ready” Matters Now

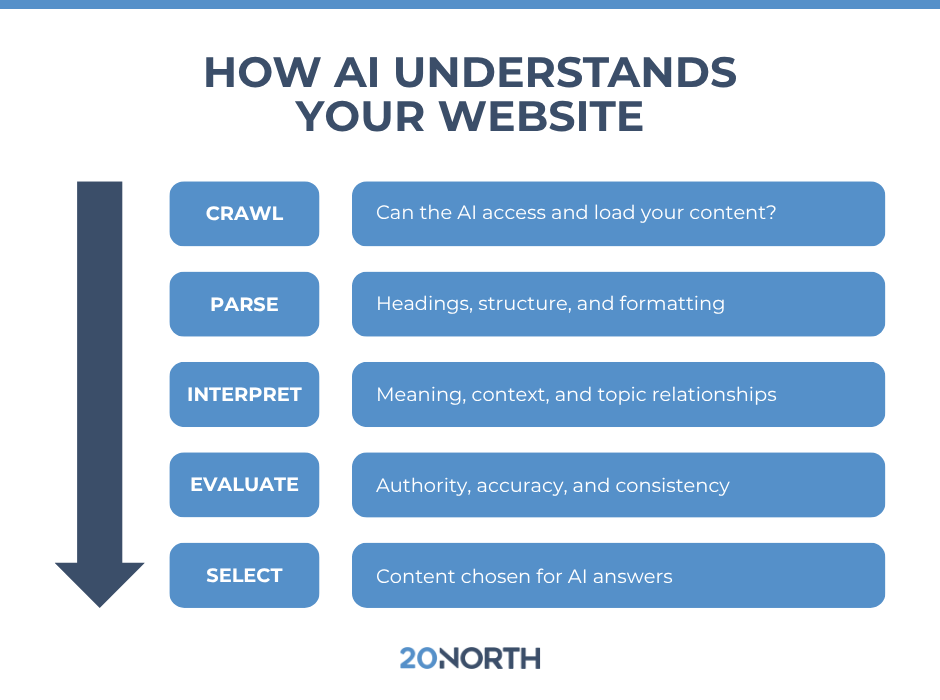

The shift from keyword matching to semantic understanding is accelerating. Today, search engines like Google are leveraging AI to interpret user intent and content meaning far beyond simple keyword presence. This means your website’s ability to clearly communicate its purpose, offerings, and value directly to machine intelligence is paramount. Future AI agents and conversational interfaces will rely even more heavily on this clarity.

For small businesses, this isn’t about chasing every new AI feature; it’s about building a robust foundation. An AI-ready website is one that provides unambiguous signals, allowing search engines and AI models to accurately categorize, summarize, and present your information. This directly impacts your visibility in search results, featured snippets, and future AI-driven discovery channels.

The immediate impact of not being AI-ready is diminished visibility. However, the more insidious consequence is the accumulation of “semantic debt.” Just as technical debt makes future development harder, unclear content and structure today create a growing burden. Rectifying this later isn’t a simple content refresh; it often demands a fundamental re-evaluation of your core messaging and information architecture, which is a far more resource-intensive and disruptive undertaking for a lean team.

Many teams might be tempted to use generative AI tools to “fix” existing content that isn’t performing. The practical reality is that AI models, while powerful, are still largely reflective. If your source material is ambiguous, contradictory, or lacks a clear underlying intent, feeding it into an AI tool will likely produce output that mirrors those same flaws, or worse, generates confident but incorrect information. This creates a false sense of progress while embedding deeper inaccuracies that are harder to detect and correct.

The real work of becoming AI-ready isn’t just about technical SEO or content optimization; it’s about achieving internal clarity. This requires a level of consensus within the business on what you truly offer, who you serve, and the precise problems you solve. Without this internal alignment, content efforts become fragmented, leading to a website that speaks with multiple voices. This internal friction, often overlooked, translates directly into external ambiguity for both human users and AI models, causing frustration for marketing teams struggling to articulate a consistent message and for customers trying to understand your value.

Prioritize Structured Data: The Machine’s Language

If you do one thing to make your site AI-ready, it should be implementing structured data. This is the most direct way to speak to machines. Structured data, primarily using Schema.org vocabulary, provides explicit context about the entities on your page – your business, products, services, articles, and more. It’s like giving AI a cheat sheet for understanding your content.

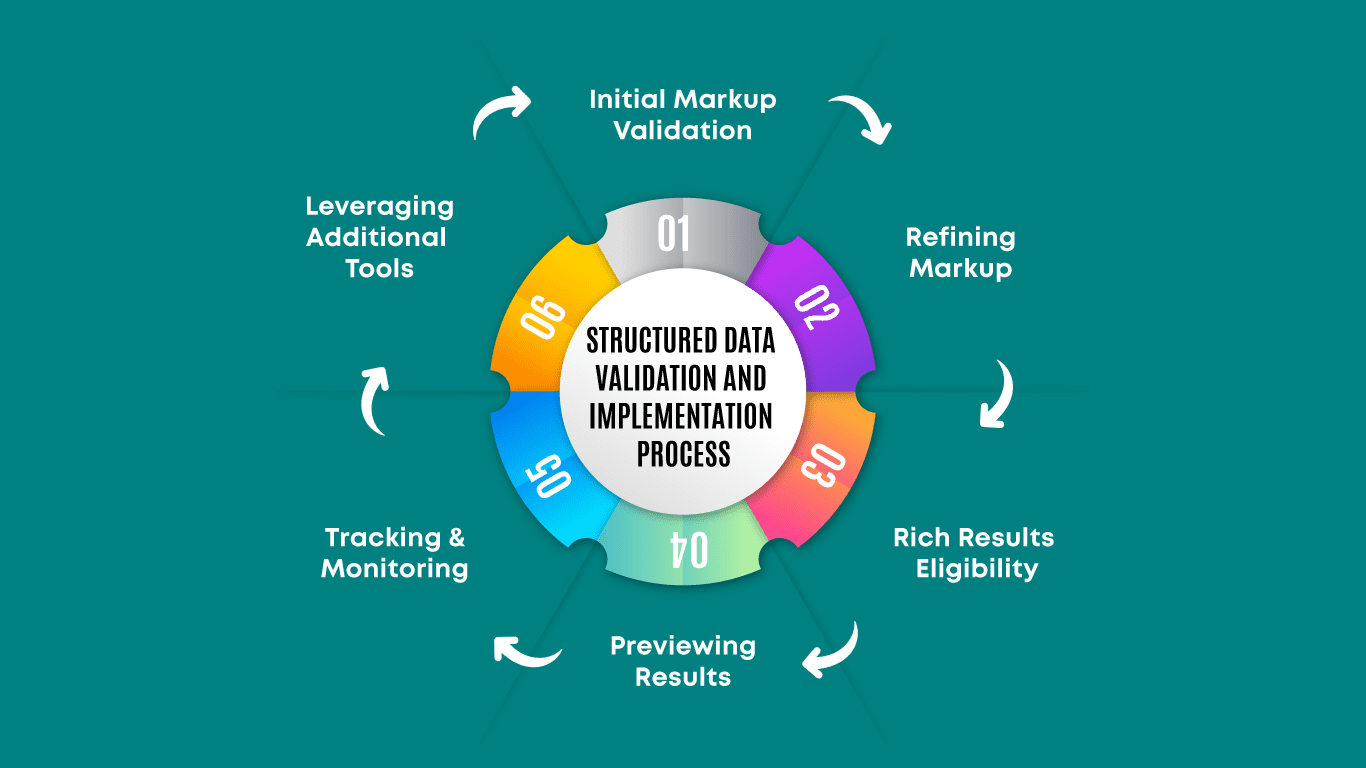

Start with the most relevant types for your business: Organization or LocalBusiness, Product, Service, and Article. Use JSON-LD format for ease of implementation. Tools like Google’s Structured Data Markup Helper or various CMS plugins can simplify this process. Don’t aim for perfection across every single page immediately; prioritize your core money pages and high-value content. This direct communication significantly improves the chances of your content appearing in rich results and being accurately interpreted by AI systems.
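To make the JSON-LD step concrete, here is a minimal sketch in Python that builds a LocalBusiness block and wraps it in the script tag that goes into a page’s HTML. The business details, the function names, and the choice of properties are illustrative assumptions, not a complete or required set; check the Schema.org type definitions and Google’s documentation for the properties that matter for your pages.

```python
import json


def local_business_jsonld(name, url, telephone, street, city, region, postal_code, country):
    """Return a JSON-LD string describing a LocalBusiness (hypothetical helper)."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld):
    """Wrap a JSON-LD string in the script element used to embed it in HTML."""
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    # "\/" is a valid JSON escape for "/", so the data itself is unchanged.
    safe = jsonld.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + safe + "\n</script>"


# Placeholder business details, for illustration only.
snippet = as_script_tag(
    local_business_jsonld(
        name="Example Plumbing Co.",
        url="https://www.example.com",
        telephone="+1-555-0100",
        street="1 Example Street",
        city="Springfield",
        region="IL",
        postal_code="62701",
        country="US",
    )
)
print(snippet)
```

Generating the markup from the same data source that renders the page, rather than typing it by hand, is one way to keep the two from drifting apart.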

What often gets overlooked, however, is the ongoing maintenance. Initial implementation, while a hurdle, is often seen as a one-time project. But structured data isn’t static. As your site evolves – new products, updated services, revised articles – the underlying content changes. If the corresponding structured data isn’t updated in sync, it quickly becomes stale or, worse, inaccurate. This creates a hidden maintenance burden that many teams underestimate, leading to a gradual erosion of its value over time.

The real pitfall isn’t just missing structured data; it’s incorrect or outdated structured data. Presenting one piece of information on the page (e.g., a product price or availability) and a different one in the Schema markup sends conflicting signals to search engines and AI. This inconsistency can lead to your content being deprioritized or even ignored, as machines are designed to favor accuracy. It’s often more detrimental than having no structured data at all, as it signals a lack of reliability and can actively harm your content’s chances of appearing in rich results.
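A rough way to catch this kind of mismatch is to compare what the page shows with what the markup says. The sketch below uses only Python’s standard library and assumes, purely for illustration, that the visible price sits in an element with the class `price` and that the Product markup carries a single `offers` object; real templates will differ, so treat the selectors and the sample page as hypothetical.

```python
import json
import re
from decimal import Decimal
from html.parser import HTMLParser


class PageFacts(HTMLParser):
    """Collect JSON-LD blocks and the visible price from one HTML page."""

    def __init__(self):
        super().__init__()
        self.jsonld_blocks = []
        self.visible_price = None
        self._in_jsonld = False
        self._in_price = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "script" and attrs.get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []
        elif "price" in (attrs.get("class") or "").split():
            # Assumption: the template marks the visible price with class="price".
            self._in_price = True

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)
        elif self._in_price and data.strip():
            self.visible_price = data.strip()

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.jsonld_blocks.append(json.loads("".join(self._buffer)))
        self._in_price = False


def find_price_mismatches(html):
    """Return (shown, marked_up) pairs where the page and the markup disagree."""
    facts = PageFacts()
    facts.feed(html)
    facts.close()
    if facts.visible_price is None:
        return []
    shown = Decimal(re.sub(r"[^\d.]", "", facts.visible_price))
    mismatches = []
    for block in facts.jsonld_blocks:
        offers = block.get("offers")
        if block.get("@type") != "Product" or not isinstance(offers, dict):
            continue
        if "price" in offers and Decimal(str(offers["price"])) != shown:
            mismatches.append((shown, Decimal(str(offers["price"]))))
    return mismatches


# Hypothetical page: the visible price was updated, the markup was not.
PAGE = """
<html><body>
<h1>Example Widget</h1>
<span class="price">$59.00</span>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Example Widget",
 "offers": {"@type": "Offer", "price": "49.00", "priceCurrency": "USD"}}
</script>
</body></html>
"""

for shown, marked in find_price_mismatches(PAGE):
    print(f"Page shows {shown} but markup says {marked}")
```

Running a check like this against your core pages after each content update is one possible way to surface stale markup before search engines do.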

While the advice is to prioritize core pages, the practical reality of what constitutes “core” can be a moving target, and teams often face pressure to mark up everything, leading to scope creep and burnout. For smaller teams, it’s crucial to explicitly deprioritize complex, low-traffic pages or content types that don’t have clear, direct Schema.org equivalents. Trying to force a markup where it doesn’t naturally fit often results in generic or incorrect implementations that yield minimal benefit and consume valuable time better spent on high-impact areas. Focus on getting the most common and critical types right, and accept that some content may not have perfect machine readability via structured data.

Content Clarity and Context: Beyond Keywords

While structured data provides explicit signals, the natural language content on your pages must also be clear and contextually rich for AI understanding. Think about how a human would understand your content without prior knowledge. Is it unambiguous? Does it answer questions directly? AI models thrive on well-organized, semantically rich content.

Focus on using natural language, logical headings (H1, H2, H3), and well-defined paragraphs. Each section should clearly address a specific sub-topic. Emphasize entity-based SEO: clearly define the core entities (products, services, concepts) you’re discussing. Instead of just repeating keywords, explain concepts thoroughly and provide relevant details. This approach helps AI models build a comprehensive understanding of your content’s subject matter, improving its relevance for a wider range of queries.
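One way to spot-check heading logic is to extract a page’s heading outline and flag obvious gaps. The following sketch uses Python’s built-in HTML parser; the two rules it applies (exactly one H1, and no skipped levels such as H1 straight to H3) are assumptions chosen for illustration, not requirements stated by any search engine.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect (level, text) pairs for h1-h6 elements in document order."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._text = []

    def handle_data(self, data):
        if self._level is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.headings.append((self._level, "".join(self._text).strip()))
            self._level = None


def outline_problems(html):
    """Return human-readable notes about the heading structure."""
    parser = HeadingOutline()
    parser.feed(html)
    parser.close()
    problems = []
    h1_count = sum(1 for level, _ in parser.headings if level == 1)
    if h1_count != 1:
        problems.append(f"expected exactly one h1, found {h1_count}")
    previous = 0
    for level, text in parser.headings:
        # A heading may go at most one level deeper than the one before it.
        if level > previous + 1:
            problems.append(f"'{text}' skips a level (h{previous} to h{level})")
        previous = level
    return problems


# Hypothetical page fragment with an h1 followed directly by an h3.
sample = "<h1>Plumbing Services</h1><h3>Emergency Repairs</h3>"
for note in outline_problems(sample):
    print(note)
```

A clean outline does not guarantee clear content, but a broken one is often a quick signal that a page’s sections do not map neatly to sub-topics.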

Technical Foundations: Crawlability and Indexability

All the structured data and clear content in the world won’t matter if search engines and AI can’t access and index your site. Basic technical SEO remains the bedrock of any AI-ready strategy. Ensure your site is easily crawlable and indexable. This means:

- **Robots.txt:** Correctly configured to allow crawling of important pages and keep crawlers out of unimportant ones.

- **XML Sitemaps:** Up-to-date and submitted to Google Search Console, guiding crawlers to all your key content.

- **Canonical Tags:** Properly used to prevent duplicate content issues.

- **Mobile-Friendliness:** Essential for user experience and search ranking, since Google primarily crawls and indexes the mobile version of a page.

- **Page Speed:** A fast loading site improves user experience and crawl efficiency.

Regularly monitor Google Search Console for any crawl errors, index coverage issues, or manual actions. These foundational elements ensure that AI systems can even begin to process your valuable content.
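The robots.txt item in the checklist above can be sanity-checked offline before deploying a change. This sketch uses Python’s standard `urllib.robotparser` module against a sample file; the rules, the URLs, and the `crawl_report` helper are hypothetical, and the result reflects how this parser reads the file, which may differ in edge cases from how a particular search engine interprets it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for illustration; substitute your own file's contents.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /admin/
Sitemap: https://www.example.com/sitemap.xml
"""


def crawl_report(robots_txt, urls, user_agent="Googlebot"):
    """Map each URL to True if the given user agent may crawl it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(user_agent, url) for url in urls}


# Pages you expect to be crawlable, plus one you expect to be blocked.
pages = [
    "https://www.example.com/",
    "https://www.example.com/services/",
    "https://www.example.com/cart/checkout",
]

for url, allowed in crawl_report(ROBOTS_TXT, pages).items():
    print(("allowed" if allowed else "blocked"), url)
```

Keeping a short list of must-be-crawlable URLs and running it through a check like this whenever robots.txt changes is one inexpensive guard against accidentally blocking a core page.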

What to Deprioritize (or Skip) Today

For small to mid-sized teams, resource allocation is critical. Today, you should deprioritize over-optimizing for every conceivable long-tail keyword variation. AI’s ability to understand intent and semantic relationships means that meticulously targeting hundreds of hyper-specific, low-volume keywords is often less efficient than creating comprehensive, clear content around broader topics. The time spent researching and optimizing for these niche terms could be better invested in improving the clarity and structured data of your core content.

Similarly, avoid chasing every new AI-powered SEO tool or experimental feature without a clear understanding of its direct, measurable impact on your business goals. Many are still in early stages, require significant learning curves, or offer marginal gains for the investment. Focus on the proven, foundational elements that provide lasting value and directly address how machines understand your site, rather than getting distracted by fleeting trends or micro-optimizations that yield diminishing returns for a lean team.

Actionable Next Steps for Your Team

To start making your website truly AI-ready, here’s a practical sequence:

- **Audit Core Pages:** Identify your top five to ten most important pages (e.g., product pages, service pages, your ‘About Us’).

- **Implement Core Structured Data:** For these audited pages, implement Organization/LocalBusiness, Product, or Service Schema.org markup using JSON-LD. Use Google’s Rich Results Test to validate.

- **Review Content Clarity:** Read through your core pages. Are your headings logical? Is the language direct and unambiguous? Does it clearly define what you offer and for whom?

- **Check Technical Health:** Log into Google Search Console. Address any critical crawl errors, sitemap issues, or mobile usability problems immediately.

- **Iterate and Expand:** Once your core pages are optimized, gradually expand your structured data implementation and content clarity efforts to other important sections of your site. This is an ongoing process, not a one-time fix.
