As AI tools become indispensable for marketing, establishing clear usage guidelines isn’t just about compliance; it’s about protecting your brand, data, and efficiency. This article cuts through the noise to provide a pragmatic framework for small to mid-sized marketing teams.

You’ll learn how to implement effective AI governance without overcomplicating it, focusing on immediate priorities and what truly matters for your operational reality. Our goal is to help you leverage AI’s power responsibly, mitigate common risks, and ensure your team makes smart, informed decisions daily.

Why Governance Matters Now (Beyond Compliance)

For marketing teams, AI governance isn’t a future problem; it’s a present necessity. The rapid adoption of tools for content generation, data analysis, and campaign optimization introduces immediate risks to your brand reputation, legal standing, and operational efficiency. Typical examples include sensitive data leaking through prompts pasted into public tools, an inconsistent brand voice in AI-generated copy, and biased outputs that alienate segments of your audience. Ignoring these risks isn’t an option; proactive, practical governance helps you harness AI’s benefits while sidestepping its pitfalls. It’s about smart risk management, not just ticking boxes.

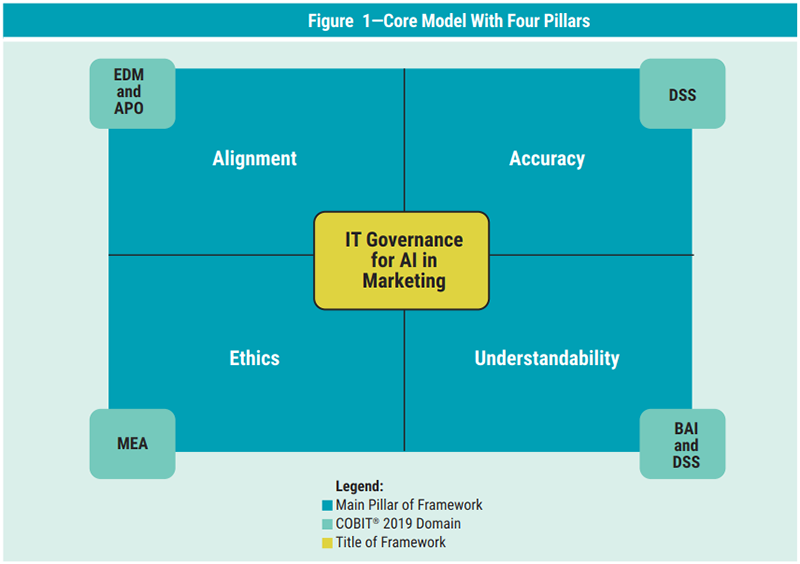

Core Pillars of AI Governance for Marketing Teams

Effective AI governance for marketing isn’t about rigid rules but establishing clear boundaries and expectations across a few critical areas. These pillars guide your team’s decision-making and ensure responsible tool usage.

- Data Privacy & Security: This is paramount. Marketers frequently handle customer data, proprietary campaign strategies, and internal analytics. Feeding sensitive or confidential information into public AI models without understanding their data retention and usage policies is a significant risk. Your guidelines must explicitly address what data can and cannot be used with AI tools, emphasizing anonymization or aggregation where possible.

- Brand Voice & Accuracy: AI excels at generating content, but it often lacks nuance, brand personality, and factual accuracy. Unchecked AI output can dilute your brand voice, introduce factual errors, or even generate misleading claims. Governance here means defining the level of human review required for AI-generated content and establishing clear parameters for tone, style, and factual verification.

- Bias & Fairness: AI models are trained on vast datasets, which often reflect societal biases. If your marketing AI generates copy, images, or audience segments, it can inadvertently perpetuate stereotypes or exclude certain groups. Your governance should include checks for bias in AI outputs, especially for customer-facing content or targeting decisions, ensuring your marketing remains inclusive and ethical.

- Transparency & Disclosure: Internally, your team needs to know when and how AI is being used. Externally, there’s a growing expectation for transparency with customers, particularly when AI is directly interacting with them (e.g., chatbots) or generating significant portions of content. Disclosing every internal use of AI isn’t practical, but establishing a clear policy for customer-facing AI interactions builds trust.
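To make the Data Privacy & Security pillar concrete, here is a minimal Python sketch of a pre-submission check a team might run on text before pasting it into a public AI tool. Everything in it is an illustrative assumption rather than a standard: the `redact` and `find_blocked_terms` helpers, the two regular expressions, and the example blocked terms are all hypothetical, and the patterns catch only simple email and phone formats. It complements human judgment and does not replace it.

```python
import re

# Hypothetical patterns for illustration only; real customer data
# (names, addresses, account IDs) needs much broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


def find_blocked_terms(text: str, blocked_terms: list[str]) -> list[str]:
    """Return the blocked terms (case-insensitive) that appear in the text."""
    lowered = text.lower()
    return [term for term in blocked_terms if term.lower() in lowered]


if __name__ == "__main__":
    draft = "Contact jane.doe@example.com about the Project Falcon launch."
    print(redact(draft))
    print(find_blocked_terms(draft, ["project falcon", "pricing sheet"]))
```

A check like this works best as a habit-forming prompt ("did anything get flagged?") rather than a guarantee; the one-page policy still has to say which categories of data are off-limits.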

Beyond immediate factual errors, a more insidious risk lies in the gradual erosion of your brand’s unique voice. When teams lean too heavily on AI for initial drafts, the output often carries a generic, ‘AI-speak’ quality – grammatically correct but devoid of personality, nuance, or the specific quirks that define your brand. Over time, human editors, under pressure to meet deadlines, can become desensitized to this blandness, inadvertently adopting it or spending disproportionate time trying to inject the missing soul. The cost isn’t just a single bad piece of content, but a slow dilution of your brand identity across all channels, making it harder to stand out.

Another often-overlooked aspect is the operational overhead required to maintain effective governance. Establishing policies is one thing; consistently enforcing them, auditing outputs for bias, ensuring data security protocols are followed, and staying current with evolving AI capabilities and risks is another entirely. For lean marketing teams, this demands dedicated time and a specific skillset that might not exist in-house. The pressure to ‘just get it done’ can lead to governance becoming a theoretical exercise rather than a living practice, creating a gap between policy and execution that grows wider with each new AI tool adopted.

This operational gap often fuels the rise of ‘shadow AI’ – individual team members using unapproved AI tools or personal accounts to bypass perceived bottlenecks or lack of official resources. While seemingly efficient in the short term, this practice completely sidesteps your established governance, creating unmanaged data flows, inconsistent brand messaging, and unchecked bias risks. It’s a prime example of theory meeting practice: policies are in place, but the real-world pressure for speed and resource limitations can push practitioners to find workarounds, inadvertently exposing the business to significant, unquantified risks.

Prioritizing Your First Steps: What to Do Today

For small to mid-sized teams, the key is to start simple and iterate. Don’t aim for a perfect, comprehensive policy document on day one. Focus on immediate, high-impact areas.

- Start with a Simple Policy Document: Draft a concise, one-page internal guideline. This isn’t a legal brief; it’s a living document that outlines the “dos and don’ts” for your most commonly used AI tools. Focus on the core risks: what data can’t go into ChatGPT, the mandatory human review for all AI-generated blog posts, and who to ask if unsure. Keep it practical and easy to understand.

- Designate an AI ‘Champion’: Appoint one or two people within your marketing team to be the go-to contacts for AI tool usage and governance. A champion doesn’t need to be a technical guru, just someone who understands the team’s workflows and can advocate for responsible AI. Champions help clarify guidelines, gather feedback, and act as the first point of contact for questions.

- Focus on High-Risk, High-Impact Areas First: Identify where AI use poses the greatest risk to your brand or data. This usually involves customer-facing content (social media, emails, ads) and any process involving sensitive customer or proprietary business data. Implement your initial guidelines strictly in these areas before expanding to lower-risk applications like internal brainstorming or preliminary research.

What’s easy to overlook is that a ‘simple’ policy can quickly become an ‘outdated’ policy if not explicitly designed for evolution. The initial document, while effective for immediate risks, often lacks a clear, mandated review cycle or a process for incorporating feedback as new tools emerge or existing ones update. This can lead to teams operating under obsolete guidelines, creating new, unaddressed vulnerabilities or fostering a culture where the policy is seen as a static hurdle rather than a living guide.

The ‘champion’ role, while critical, often gets layered onto an already full plate. Without dedicated time or the authority to enforce the guidelines, this individual can quickly become a bottleneck or a source of frustration, struggling to keep pace with tool changes or to guide a team that views AI governance as ‘extra work.’ The non-obvious failure mode here is not a lack of intent but a lack of practical support, which leads to inconsistent application of the guidelines and dilutes the champion’s impact.

While focusing on high-risk areas is the right starting point, it’s easy to overlook the creeping risks in ‘low-risk’ applications. Internal brainstorming or preliminary research, if unchecked, can still lead to the accidental ingestion of proprietary data into public models or the normalization of biased outputs within your team, subtly influencing future decisions and internal communications. The second-order effect is that a perceived ‘safe zone’ can become a blind spot, allowing less visible but cumulative risks to build up over time.

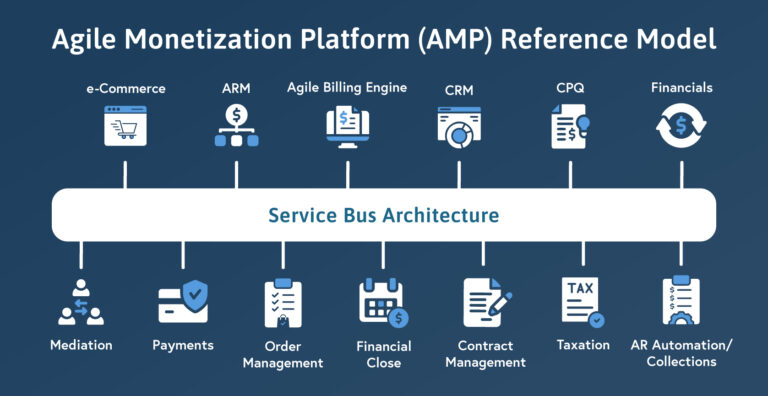

What you should explicitly deprioritize today is any effort to build custom AI models or invest in complex, enterprise-grade AI governance platforms. These initiatives are resource-intensive, often require specialized expertise beyond what most SMBs possess, and can divert critical attention and budget from the immediate, practical steps of establishing responsible usage with existing tools. Focus on process and people, not bespoke tech, for now.

What to Deprioritize or Skip (For Now)

With limited resources, it’s crucial to know what not to spend time on. For most small to mid-sized marketing teams, getting bogged down in highly complex or theoretical aspects of AI governance is a distraction. Deprioritize developing custom AI ethics frameworks or building proprietary AI models to police AI use. These are resource-intensive endeavors typically reserved for large enterprises with dedicated legal and R&D departments. Similarly, avoid spending excessive time on speculative future risks or trying to predict every possible AI misuse scenario. Instead, focus on the tangible, immediate risks of the tools your team is using today. Don’t get caught up in academic debates about AI sentience or in compliance regimes that don’t yet apply to a business of your size. Your energy is better spent on practical, enforceable guidelines for current operations.

Practical Tools & Tactics for Enforcement

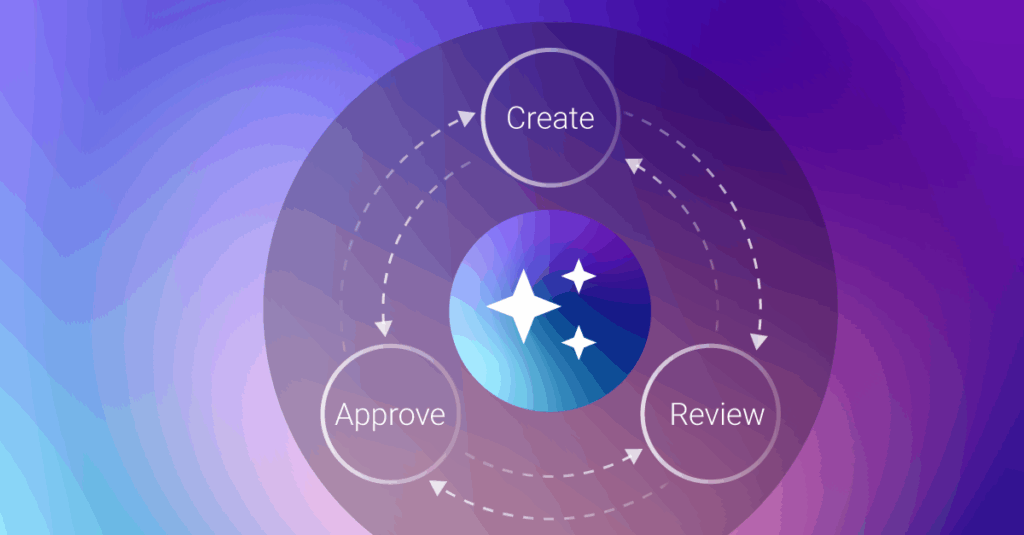

Guidelines are only effective if they’re understood and followed. Here’s how to embed them into your team’s daily workflow.

- Template Libraries & Approved Prompts: For content generation, create a library of approved prompts and content templates. This guides your team toward desired outputs, maintains brand consistency, and reduces the likelihood of off-brand or inaccurate content. For example, a standard prompt for blog post outlines or social media captions can include instructions for tone, keywords, and mandatory human review steps.

- Regular, Informal Check-ins: Don’t make governance a one-time training event. Integrate discussions about AI usage into your regular team meetings. Share examples of good and bad AI outputs, discuss new tools, and address challenges. This fosters a culture of continuous learning and responsible use, making governance a collaborative effort rather than a top-down mandate.

- Leverage Platform Features: Many AI tools and marketing platforms are integrating their own guardrails. For instance, some content platforms now offer AI detection or brand voice checks. Utilize these built-in features where available to add an extra layer of compliance and consistency. Stay updated on how your core marketing tech stack is evolving to support responsible AI use. For more on leveraging AI, see AI tools for marketing.
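As a rough illustration of the approved-prompt library described above, the following Python sketch stores templates in one place and refuses to build a prompt from anything outside that list. The template names, wording, and the `build_prompt` helper are hypothetical; a shared document or spreadsheet can serve the same purpose for teams that don’t script their workflows.

```python
from string import Template

# Hypothetical approved templates; the wording and fields are examples only.
APPROVED_PROMPTS = {
    "blog_outline": Template(
        "Write a blog post outline about $topic. "
        "Tone: $tone. Include these keywords: $keywords. "
        "Do not invent statistics or quotes. "
        "This draft will be reviewed by a human editor before publication."
    ),
    "social_caption": Template(
        "Write a social media caption about $topic. "
        "Tone: $tone. Keep it under $max_words words. "
        "This draft will be reviewed by a human editor before publication."
    ),
}


def build_prompt(name: str, **fields: str) -> str:
    """Fill in an approved template; reject names outside the library."""
    if name not in APPROVED_PROMPTS:
        raise KeyError(f"'{name}' is not an approved prompt template")
    # Template.substitute raises KeyError if a required field is missing,
    # so incomplete prompts fail loudly instead of going out half-filled.
    return APPROVED_PROMPTS[name].substitute(**fields)


if __name__ == "__main__":
    print(build_prompt(
        "blog_outline",
        topic="email deliverability",
        tone="friendly and practical",
        keywords="SPF, DKIM",
    ))
```

Keeping the human-review reminder inside the template itself is a design choice: it travels with every prompt, so the review step is harder to forget under deadline pressure.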

Building a Sustainable AI Practice

Establishing AI governance isn’t a one-off project; it’s an ongoing process. As AI technology evolves and your team’s usage matures, your guidelines will need to adapt. Regularly review your policies – perhaps quarterly – to ensure they remain relevant to the tools you’re using and the challenges you’re facing. Encourage open communication within the team about AI successes and failures. This iterative approach, combined with a focus on practical application over theoretical perfection, will allow your marketing team to leverage AI effectively and responsibly, driving growth while mitigating risks in the long term. Remember, the goal is to empower your team, not restrict it, by providing clear guardrails for innovation.
