As AI continues to reshape search and content consumption, your website’s architecture is no longer just about traditional SEO; it’s about how effectively AI can understand and contextualize your content. For small to mid-sized businesses, this means focusing on practical structural improvements that directly aid AI in interpreting your site’s purpose and topical authority.

This article will guide you through prioritizing key architectural decisions, helping you make trade-offs that ensure your content is not only discoverable but also semantically clear to the AI systems that increasingly power search and content recommendations.

Why Site Architecture Matters More for AI Today

The evolution of search, particularly with AI-powered features like Google’s Search Generative Experience (SGE), since rolled out as AI Overviews, means that search engines are moving beyond simple keyword matching. They’re striving for a deeper, semantic understanding of content. A well-structured site provides explicit signals about the relationships between your pages, the hierarchy of your topics, and the overall context of your information.

For AI, this clear structure is like a well-organized library. It lets crawlers discover and index your pages efficiently and, crucially, helps AI models comprehend the nuances of your content, leading to better representation in AI-driven search results and improved visibility for your target audience.

Prioritizing a Logical Hierarchy

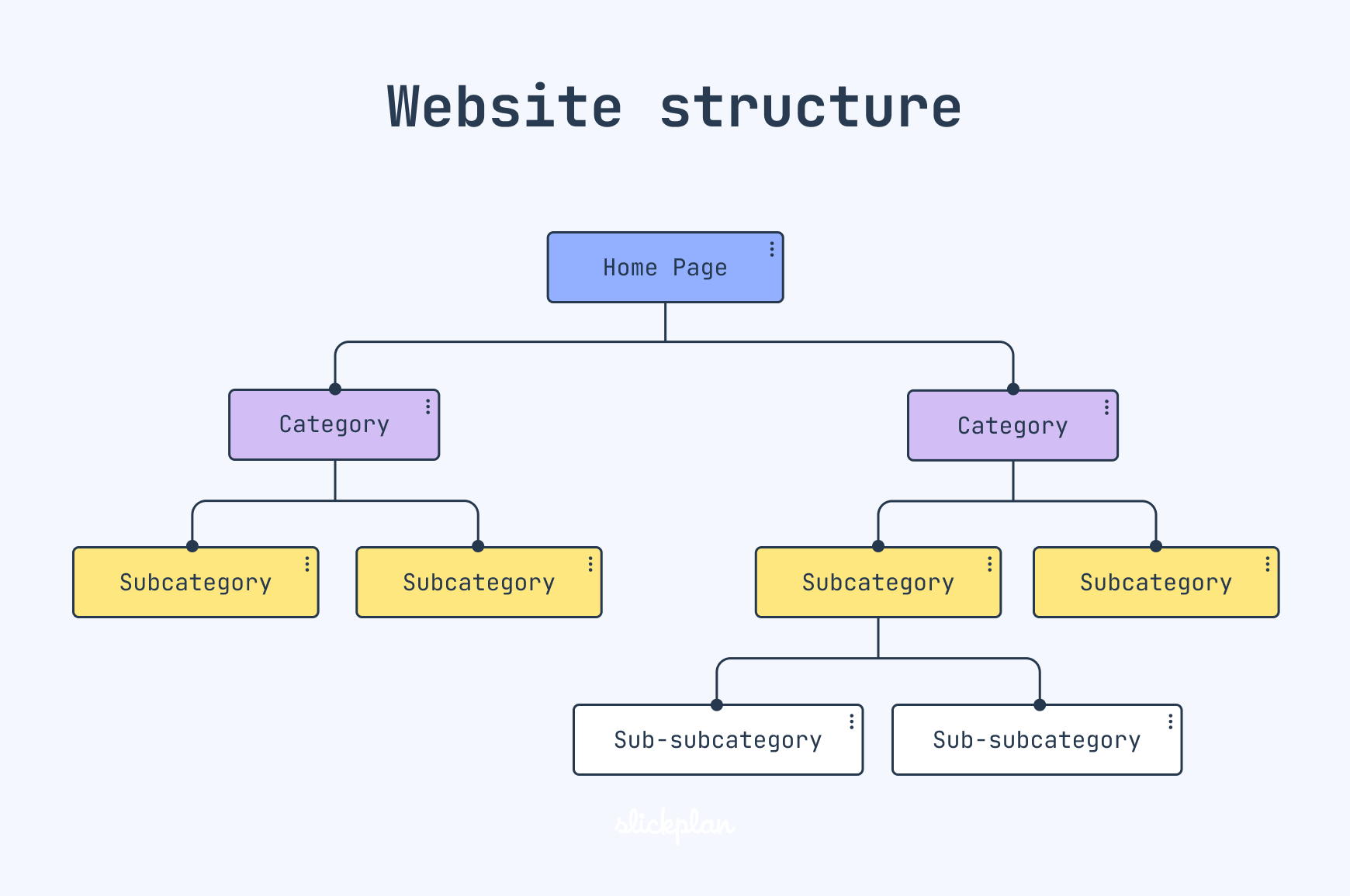

A logical site hierarchy is the bedrock of good architecture, both for users and AI. It dictates how information flows and how topics relate.

- What to do first:

- Shallow Structure: Aim for a flat site structure where most pages are accessible within three clicks from the homepage. This minimizes the ‘depth’ of your content, making it easier for crawlers to reach and understand.

- Clear Topic Clusters: Group related content under logical categories. For instance, all blog posts about ’email marketing’ should reside under an ‘Email Marketing’ category page, which then links to individual articles. This creates clear topical authority.

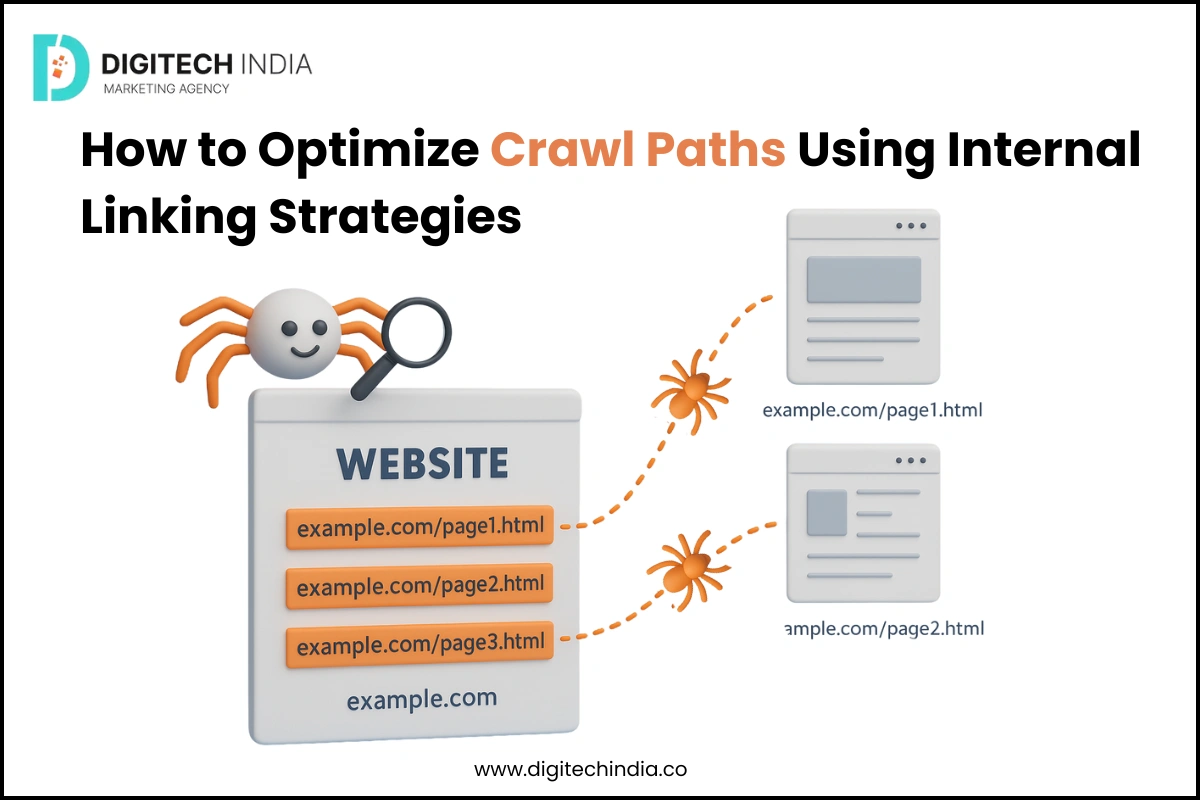

- Consistent Internal Linking: Use descriptive anchor text to link related articles and pages. This reinforces topical connections and distributes ‘link equity’ effectively across your site. Think of it as building a web of relevance.

- Why it works: A shallow, clustered structure with strong internal linking helps AI agents quickly grasp your site’s main topics and the relationships between them. It signals which content is most important and how different pieces of information contribute to a broader theme.

- What to delay or avoid: Don’t get bogged down in creating overly complex taxonomies or deep, multi-layered navigation structures unless absolutely necessary for a massive content library. For most SMBs, simplicity and clarity trump intricate categorization. Avoid internal linking strategies that are purely for SEO (e.g., keyword stuffing in anchors) and instead focus on user-centric, contextually relevant links.
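To check the three-click guideline on your own site, you could run a breadth-first search over your internal link graph, starting at the homepage. The sketch below assumes you already have that graph as a Python dict mapping each URL to the URLs it links to (for example, built from a crawler export), plus a list of every published URL; the function names and sample URLs are hypothetical.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage. BFS reaches pages in order of
    distance, so the first time a page is seen gives its minimum click depth."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit_depth(links, published, home="/", max_clicks=3):
    """Return (pages deeper than max_clicks, published pages unreachable from home)."""
    depths = click_depths(links, home)
    too_deep = sorted(page for page, depth in depths.items() if depth > max_clicks)
    unreachable = sorted(set(published) - set(depths))
    return too_deep, unreachable


# Hypothetical link graph for a small site: page -> pages it links to.
site = {
    "/": ["/blog/", "/services/"],
    "/blog/": ["/blog/email-marketing/"],
    "/blog/email-marketing/": ["/blog/email-marketing/subject-lines/"],
    "/blog/email-marketing/subject-lines/": [
        "/blog/email-marketing/subject-lines/a-b-tests/"
    ],
    "/services/": [],
}
# Every URL the CMS says is published, including one nothing links to.
published = set(site) | {
    "/blog/email-marketing/subject-lines/a-b-tests/",
    "/old-landing-page/",
}

too_deep, unreachable = audit_depth(site, published)
print("Deeper than three clicks:", too_deep)
print("Not reachable from the homepage:", unreachable)
```

Pages reported as unreachable are orphans in practice: they exist in your CMS or sitemap, but no internal link path leads to them. Treat the depth threshold as a way to spot outliers rather than a hard rule.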

While a shallow structure is undeniably beneficial, its ongoing maintenance as content volume grows is often underestimated. Strictly adhering to a “three-click” rule can force awkward content placement or lead to an explosion of sub-category pages, inadvertently diluting the very topical authority you’re trying to build. Similarly, the initial clarity of topic clusters can become a rigid constraint. Teams frequently find themselves wrestling with new content that doesn’t fit neatly into existing categories, resulting in either orphaned pages or forced categorizations that confuse both users and search engines. This introduces friction into the content creation workflow, slowing down publication and accumulating editorial debt.
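One way to keep topic clusters from drifting as content grows is a periodic audit: every article assigned to a cluster should be linked from its hub and link back to it, and every published page should belong to some cluster or be a deliberate exception. This is a sketch assuming you keep a hub-to-articles mapping by hand or in your CMS; `audit_clusters` and the sample data are hypothetical.

```python
def audit_clusters(clusters, links, pages):
    """clusters: hub URL -> list of article URLs assigned to that hub.
    links: URL -> set of URLs that page links to.
    pages: every published URL that should belong to a cluster."""
    missing_from_hub = []   # hub does not link to one of its articles
    missing_back_link = []  # article does not link back to its hub
    clustered = set()
    for hub, articles in clusters.items():
        clustered.add(hub)
        for article in articles:
            clustered.add(article)
            if article not in links.get(hub, set()):
                missing_from_hub.append((hub, article))
            if hub not in links.get(article, set()):
                missing_back_link.append((article, hub))
    unclustered = sorted(set(pages) - clustered)
    return missing_from_hub, missing_back_link, unclustered


# Hypothetical cluster assignment and link data.
clusters = {
    "/email-marketing/": [
        "/email-marketing/subject-lines/",
        "/email-marketing/list-hygiene/",
    ],
}
links = {
    "/email-marketing/": {"/email-marketing/subject-lines/"},
    "/email-marketing/subject-lines/": {"/email-marketing/"},
    "/email-marketing/list-hygiene/": set(),
}
pages = {
    "/email-marketing/",
    "/email-marketing/subject-lines/",
    "/email-marketing/list-hygiene/",
    "/blog/ai-tools-roundup/",
}

from_hub, back_links, unclustered = audit_clusters(clusters, links, pages)
print("Hub should link to:", from_hub)
print("Should link back to hub:", back_links)
print("Not in any cluster:", unclustered)
```

An unclustered page is not automatically a problem. It is a prompt to decide whether the page needs a new cluster, a home in an existing one, or nothing at all, which keeps the taxonomy from hardening into a constraint.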

The theory of consistent internal linking is sound, but in practice it often becomes an afterthought or is executed superficially. Teams tend to link only to the most obvious, high-level pages, overlooking opportunities for more granular, contextually rich connections between related articles. This isn’t just a missed SEO opportunity; it creates a fragmented user journey where visitors might not discover deeper, highly relevant content. The downstream effect is a site where link equity isn’t optimally distributed and articles that could reinforce one another’s authority remain isolated, diminishing the overall power and discoverability of your content library.
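Superficial internal linking is easy to measure if you can export your internal links as (source, target, anchor text) rows, which most site crawlers can do. The sketch below counts inbound internal links per page, lists pages that receive none, and flags generic anchor text. The `GENERIC_ANCHORS` set, the function name, and the sample rows are assumptions you would adapt to your own site.

```python
from collections import Counter

# Assumed list of non-descriptive anchors; extend it for your own site.
GENERIC_ANCHORS = {"click here", "here", "read more", "learn more", "this post"}


def audit_internal_links(links, pages):
    """links: (source_url, target_url, anchor_text) tuples for internal links.
    pages: published URLs to check (exclude the homepage, the crawl entry point)."""
    # Count inbound links per target, ignoring self-links.
    inbound = Counter(target for source, target, _ in links if source != target)
    # Counter returns 0 for missing keys, so unlinked pages show up here.
    no_inbound = sorted(page for page in pages if inbound[page] == 0)
    weak_anchors = [
        (source, target, anchor)
        for source, target, anchor in links
        if anchor.strip().lower() in GENERIC_ANCHORS
    ]
    return inbound, no_inbound, weak_anchors


# Hypothetical crawler export.
links = [
    ("/", "/email-marketing/", "Email marketing guides"),
    ("/email-marketing/", "/email-marketing/subject-lines/",
     "Writing subject lines that get opened"),
    ("/email-marketing/subject-lines/", "/email-marketing/", "Email marketing hub"),
    ("/email-marketing/subject-lines/", "/email-marketing/list-hygiene/", "Read more"),
]
pages = {
    "/email-marketing/",
    "/email-marketing/subject-lines/",
    "/email-marketing/list-hygiene/",
    "/email-marketing/segmentation/",
}

inbound, no_inbound, weak_anchors = audit_internal_links(links, pages)
print("Pages with no inbound links:", no_inbound)
print("Links with generic anchor text:", weak_anchors)
```

Sorting pages by their inbound count is a quick way to find related articles that deserve a contextual link from somewhere deeper than the navigation.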

Finally, the pursuit of a “perfect” hierarchy can introduce significant decision pressure and frustration within a team. Over-analyzing every piece of content’s exact placement or debating the ideal anchor text for every internal link can lead to analysis paralysis, slowing down content production. For most SMBs, a “good enough” logical structure that is easy to maintain and understand is far more valuable than an intricately designed, theoretically perfect one that becomes a bottleneck for publishing. Prioritize getting valuable content published and accessible over endlessly refining a taxonomy that few users will ever fully appreciate.

Enhancing Context with Semantic Grouping

Beyond basic hierarchy, semantic grouping helps AI understand the meaning and purpose of your content more deeply.

- What to do first:

  - Category Pages as Hubs: Design your category pages not just as lists of posts, but as valuable content hubs that summarize the topic and link strategically to sub-categories, key articles, and related resources.

  - Breadcrumbs: Implement clear breadcrumb navigation on all relevant pages. These visual cues not only aid user navigation but also provide explicit hierarchical paths for AI, showing exactly where a page sits within your site’s structure.

  - Basic Schema Markup: Focus on implementing fundamental schema types like `Organization`, `WebPage`, `Article`, and `Product`. These provide structured data that explicitly tells AI what your content is about and what type of entity it represents. You can check rich-result-eligible markup with Google’s Rich Results Test and validate any schema.org markup with the Schema Markup Validator.

- Why it works: These elements provide explicit, machine-readable signals about your content’s context and relationships. Breadcrumbs offer a clear path, while schema markup directly labels content types, allowing AI to process information more accurately and potentially display rich results in search.

- What to delay or avoid: Don’t chase every new or highly specific schema type unless you have a clear, measurable benefit in mind. For example, implementing highly granular schema for every single product attribute might be overkill if your product catalog is small and the standard `Product` schema suffices. Prioritize the schema that offers the most impact for your core business offerings.
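For the `Article` and `Organization` types mentioned in the list, structured data is typically embedded as a JSON-LD `<script>` tag in the page HTML. This is a minimal Python sketch that assembles such a tag; the helper names and sample values are hypothetical, and the properties shown are a small subset of what schema.org defines, so check Google’s structured data documentation for the properties it recommends for each type.

```python
import json


def article_jsonld(headline, url, author_name, published, modified, org_name, org_url):
    """Build a schema.org Article object. Dates are ISO 8601 strings."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": {"@type": "WebPage", "@id": url},
        "datePublished": published,
        "dateModified": modified,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": org_name, "url": org_url},
    }


def as_script_tag(data):
    """Serialize the object into the JSON-LD script tag placed in the page HTML."""
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2, ensure_ascii=False)
        + "\n</script>"
    )


# Hypothetical article metadata, as a CMS might supply it.
article = article_jsonld(
    headline="How to Write Email Subject Lines That Get Opened",
    url="https://www.example.com/email-marketing/subject-lines/",
    author_name="Jane Doe",
    published="2024-03-04",
    modified="2024-05-10",
    org_name="Example Co.",
    org_url="https://www.example.com/",
)
print(as_script_tag(article))
```

Generating the markup from the same fields your CMS uses for the visible headline and dates helps keep the structured data and the page content in agreement.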

What often gets overlooked with category pages designed as content hubs is the ongoing maintenance burden. Building them out initially is one thing, but keeping them fresh and relevant requires a continuous effort to integrate new content, update summaries, and prune outdated links. Without this sustained attention, these hubs quickly become stale, undermining their value and creating a “set it and forget it” trap that ultimately wastes the initial investment and degrades the user experience over time.

Similarly, while breadcrumbs seem straightforward, their practical implementation often reveals subtle failure modes. Inconsistent application across different sections of a site, or a mismatch between the breadcrumb path and the actual underlying information architecture, can confuse both users and search engine crawlers. This isn’t just a minor aesthetic flaw; it actively sends conflicting signals about your content’s context, making it harder for AI to accurately map your site’s structure and diminishing the very clarity you aimed to provide.
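One way to avoid that mismatch is to derive breadcrumbs from a single source of truth. The sketch below assumes your URL paths mirror your hierarchy (e.g., `/email-marketing/subject-lines/`) and builds a schema.org `BreadcrumbList` from the path, using a hypothetical `titles` mapping for display names. If your URLs do not follow the hierarchy, the same idea would be driven by your CMS category tree instead.

```python
import json
from urllib.parse import urlsplit


def breadcrumb_jsonld(page_url: str, titles: dict[str, str]) -> dict:
    """Build a BreadcrumbList whose trail is derived from the URL path.

    Assumes trailing-slash paths. titles maps each path prefix ("/",
    "/email-marketing/", ...) to its display name; a missing title raises
    KeyError, surfacing the gap instead of emitting an incomplete trail."""
    parts = urlsplit(page_url)
    base = f"{parts.scheme}://{parts.netloc}"
    prefixes = ["/"]
    for segment in (s for s in parts.path.split("/") if s):
        prefixes.append(prefixes[-1] + segment + "/")
    items = [
        {"@type": "ListItem", "position": pos, "name": titles[prefix], "item": base + prefix}
        for pos, prefix in enumerate(prefixes, start=1)
    ]
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": items,
    }


titles = {
    "/": "Home",
    "/email-marketing/": "Email Marketing",
    "/email-marketing/subject-lines/": "Writing Subject Lines",
}
trail = breadcrumb_jsonld("https://www.example.com/email-marketing/subject-lines/", titles)
print(json.dumps(trail, indent=2))
```

Rendering the visible breadcrumb from the same list of prefixes keeps what users see and what crawlers read identical.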

Regarding basic schema markup, a common pitfall is the expectation that implementing it automatically guarantees rich results. While schema provides explicit signals, search engines ultimately decide whether to display rich snippets based on many factors, including query relevance, content quality, and competitive landscape. Teams can become frustrated when they invest time in schema only to see inconsistent or no rich result display, leading to a perception of wasted effort. The reality is that schema is a foundational signal, not a magic bullet, and its true value often lies in the cumulative effect of clearer communication with AI rather than immediate, dramatic visual changes in SERPs.

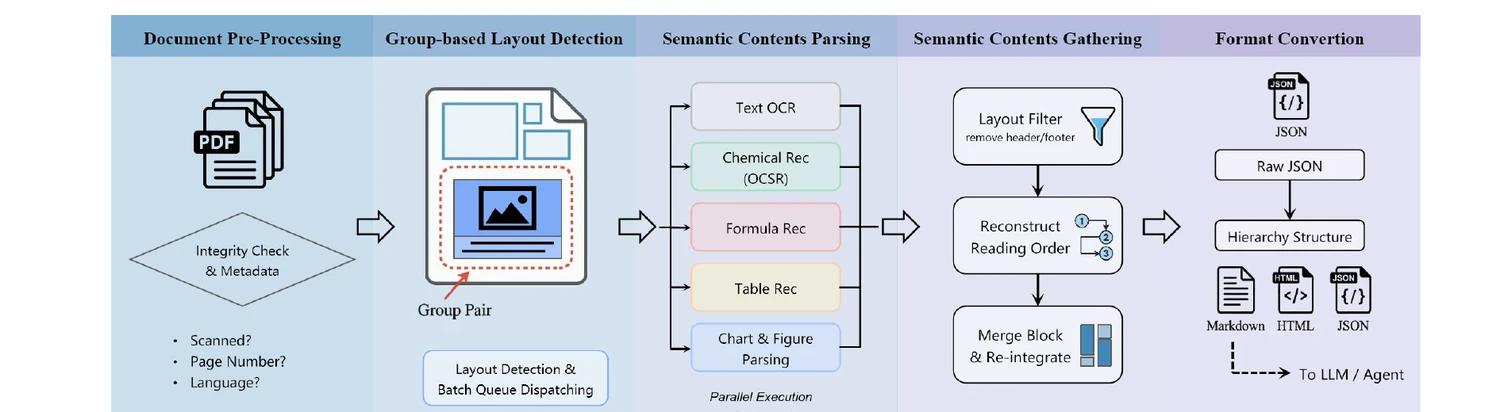

Optimizing Crawlability and Indexing for AI Agents

Even the best architecture is useless if AI agents can’t efficiently discover and process your content.

- What to do first:

  - Clean XML Sitemaps: Ensure your XML sitemaps are up-to-date, include only indexable pages, and are submitted via Google Search Console. This is your direct line to telling search engines what content you want them to find.

  - Strategic Robots.txt: Use your `robots.txt` file to keep crawlers out of truly irrelevant or duplicate URLs (e.g., internal search results, admin pages). Be cautious not to block valuable content. Keep in mind that `robots.txt` controls crawling, not indexing: a blocked URL can still be indexed if other pages link to it, and crawlers cannot read the `noindex` or canonical tag on a page they are not allowed to fetch.

  - Canonical Tags: Implement canonical tags correctly to prevent duplicate content issues, especially for e-commerce sites with product variations or filtered category pages. This tells AI which version of a page is the authoritative one.

  - Fast Page Load Times: Page speed is a ranking factor and a critical user experience element. Crawlers, like human users, fare better on fast sites: when a server responds slowly, search engines tend to crawl fewer pages. Optimize images, leverage caching, and minimize render-blocking resources.

- Why it works: These technical elements ensure that AI crawlers can efficiently navigate, understand, and index your site without wasting resources on irrelevant pages or getting confused by duplicate content. A fast site means more content can be processed in less time.

- What to delay or avoid: For most small to mid-sized businesses, obsessing over minute crawl budget optimizations is often a low-ROI activity. If your site is well-linked, fast, and free of major technical errors, Google’s crawlers will generally find and index your important content sufficiently. Focus on the foundational elements rather than advanced, granular crawl control unless you manage a site with hundreds of thousands of pages.
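The sitemap, `robots.txt`, and canonical recommendations work together: a sitemap limited to indexable pages should drop URLs marked `noindex`, URLs whose canonical points elsewhere, and URLs your own `robots.txt` disallows. The sketch below does that filtering with Python’s standard `urllib.robotparser` and `xml.etree.ElementTree`; the page records (with `url`, `canonical`, `noindex`, and `lastmod` fields) stand in for a hypothetical CMS export, and the function names are illustrative.

```python
import xml.etree.ElementTree as ET
from urllib import robotparser

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def indexable_pages(pages, robots_txt, user_agent="Googlebot"):
    """Keep only pages that are crawlable, self-canonical, and not noindex."""
    parser = robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    kept = []
    for page in pages:
        if page["noindex"]:
            continue  # a noindex page should not be advertised in the sitemap
        if page["canonical"] != page["url"]:
            continue  # list only the canonical version of duplicate URLs
        if not parser.can_fetch(user_agent, page["url"]):
            continue  # blocked by robots.txt, so crawlers cannot fetch it
        kept.append(page)
    return kept


def build_sitemap(pages):
    """Serialize pages as a sitemaps.org <urlset> document."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = page["url"]
        ET.SubElement(url_el, "lastmod").text = page["lastmod"]
    declaration = '<?xml version="1.0" encoding="UTF-8"?>\n'
    return declaration + ET.tostring(urlset, encoding="unicode")


robots_txt = "User-agent: *\nDisallow: /search\n"
site = "https://www.example.com"
inventory = [
    {"url": f"{site}/", "canonical": f"{site}/", "noindex": False, "lastmod": "2024-05-01"},
    {"url": f"{site}/shoes/", "canonical": f"{site}/shoes/", "noindex": False, "lastmod": "2024-04-20"},
    # Filtered variant that canonicalizes to the main category page.
    {"url": f"{site}/shoes/?color=red", "canonical": f"{site}/shoes/", "noindex": False, "lastmod": "2024-04-20"},
    {"url": f"{site}/thank-you/", "canonical": f"{site}/thank-you/", "noindex": True, "lastmod": "2024-01-15"},
    {"url": f"{site}/search?q=boots", "canonical": f"{site}/search?q=boots", "noindex": False, "lastmod": "2024-05-01"},
]

kept = indexable_pages(inventory, robots_txt)
sitemap_xml = build_sitemap(kept)
print(sitemap_xml)
```

The user agent passed to `can_fetch` only matters when your `robots.txt` has agent-specific groups; in this example a single `*` group applies to every crawler.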

Practical Trade-offs for SMBs

As an SMB, you’re operating with limited resources. Your architectural decisions must reflect this reality.

- Prioritize User Experience First: Always remember that a site architecture that is intuitive and easy for humans to navigate will almost always be beneficial for AI as well. Don’t build for crawlers at the expense of your users.

- Iterate, Don’t Overhaul: You don’t need to rebuild your entire site architecture overnight. Start with the most impactful changes, such as flattening your structure, improving internal linking, and implementing basic schema. Measure the impact, then iterate.

- Focus on Content Quality: The best site architecture cannot compensate for poor, unhelpful, or irrelevant content. Ensure your content is high-quality, addresses user intent, and provides real value.

- What to deprioritize today: For most SMBs, deep dives into highly advanced semantic web concepts, building custom knowledge graphs, or implementing every niche schema type can be safely deprioritized. The effort required for these often outweighs the practical benefits for businesses with limited content libraries and traffic. Instead, focus your energy on creating a clear, logical structure, ensuring robust internal linking, and applying foundational schema markup. These practical steps will yield the most significant returns in enhancing both crawlability and contextual understanding for AI, without overstretching your team.
