Introduction: Practical Indexing for Real-World Teams

For small and mid-sized businesses, getting your content indexed by Google isn’t just a technical task; it’s a strategic imperative. This article cuts through the noise, offering a practitioner’s view on how Google actually discovers, processes, and ranks your web pages. You’ll gain clear, actionable insights into the critical signals that influence indexing, helping you prioritize efforts, avoid common pitfalls, and ensure your valuable content earns the visibility it deserves, even with limited resources.

We’ll focus on practical decision-making, guiding you on what truly moves the needle for indexing success and what can safely be deprioritized. Our goal is to equip you with the judgment to make smart trade-offs, ensuring your team’s efforts translate directly into improved organic search presence.

Understanding Google’s Indexing Process: Beyond the Basics

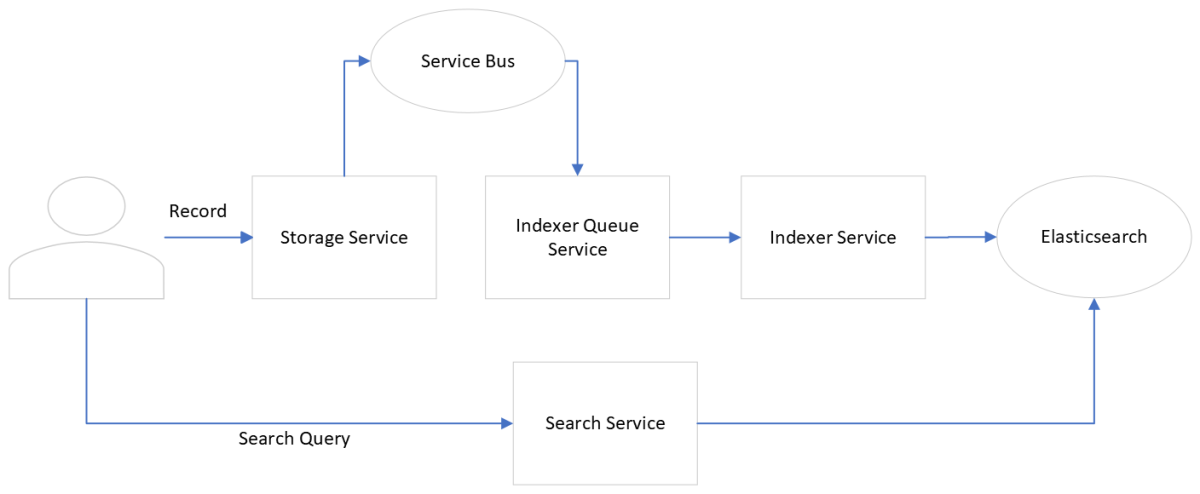

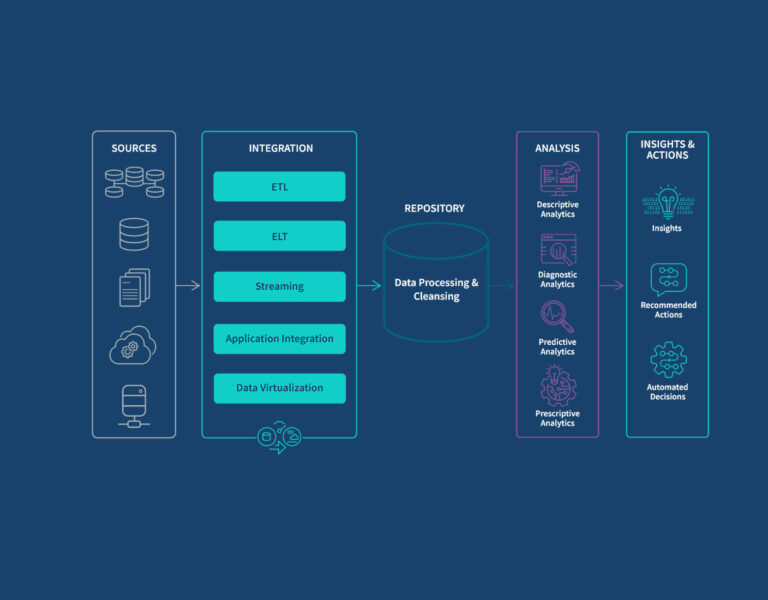

Google’s indexing isn’t a simple binary of ‘indexed’ or ‘not indexed.’ It’s a complex process involving crawling, rendering, and then evaluating content for inclusion in its massive index. For your content to be visible, Googlebot must first discover it (crawling), then understand it (rendering), and finally deem it valuable enough to store and retrieve (indexing). The ‘advanced’ part for SMBs isn’t about obscure tactics, but about understanding the signals that influence each stage and making informed decisions.

Crucially, Google doesn’t index everything it crawls. It prioritizes pages that offer unique value, are technically accessible, and demonstrate a good user experience. Your strategy must align with these priorities to maximize your indexing success.

What often gets overlooked in practice is that getting a page ‘indexed’ isn’t the finish line. Many teams celebrate when a page appears in Google’s index, only to find it doesn’t rank for any meaningful queries. That carries a hidden cost. Every URL Google has to crawl and re-crawl, regardless of its quality, takes a share of the attention Googlebot gives your site. If a large share of your indexed pages are thin, low-value, or technically flawed, you risk diluting the overall quality signal of your site. Google’s perception of your site’s authority or relevance may suffer as a result, making it harder for even your best content to gain traction.

The practical reality for small to mid-sized businesses is that resources are finite. You can’t fix every technical issue on every page, nor should you try. That forces a decision about what to prioritize. Chasing every minor indexing error across a vast, low-value content archive is a common trap. Instead, spend your limited time on making sure your most valuable, revenue-driving, or audience-attracting content is fully crawlable, renderable, and indexable.

Right now, you should explicitly deprioritize, or even ‘noindex,’ pages that offer minimal unique value, are outdated, or serve purely internal purposes. The goal isn’t to get everything indexed, but to get the right things indexed effectively. Trying to force Google to index every single page on your site, especially those with little strategic value, often leads to frustration and diverts resources from where they can make a real impact.

Prioritizing Technical Health for Efficient Indexing

Technical health forms the bedrock of effective indexing. Without a solid foundation, even the best content can struggle for visibility. For small teams, the focus should be on high-impact areas that directly affect Googlebot’s ability to crawl and render your pages efficiently.

- Site Speed and Core Web Vitals: These aren’t just ranking factors; they also affect how efficiently Googlebot can process your site. Slow or error-prone server responses can cause Google to crawl less, and heavy pages are harder to render. Prioritize optimizing server response times, image compression, and minimizing render-blocking resources.

- Mobile-First Indexing: This is no longer a future trend; it’s the current reality. Ensure your mobile version is fully functional, loads quickly, and contains all the content and internal links present on your desktop version. Google primarily uses your mobile site for indexing and ranking.

- Canonicalization: Duplicate content, even if unintentional (e.g., different URLs for the same page), can confuse Googlebot, waste crawl budget, and dilute ranking signals. Implement proper canonical tags to consolidate signals and guide Google to your preferred version.

- XML Sitemaps: While not a guarantee of indexing, a clean, up-to-date XML sitemap acts as a roadmap for Googlebot, helping it discover new and updated content. Ensure your sitemap only includes pages you want indexed and is free of broken links or redirects.
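The canonicalization and sitemap points above can be combined into one rule: only list a URL in the sitemap if it returns 200, is not marked noindex, and is its own canonical. The sketch below is one way to express that in Python. The `Page` record and its fields are hypothetical; in practice the values would come from whatever crawl data or CMS export you have.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


@dataclass
class Page:
    # Hypothetical record; populate it from your own crawl or CMS export.
    url: str        # URL as crawled
    canonical: str  # URL declared in the page's rel="canonical" tag
    status: int     # HTTP status code returned for `url`
    noindex: bool   # True if the page carries a noindex directive


def sitemap_worthy(page: Page) -> bool:
    """Keep only live, indexable pages that are their own canonical."""
    return page.status == 200 and not page.noindex and page.url == page.canonical


def build_sitemap(pages: list[Page]) -> str:
    """Return a sitemap XML string containing only sitemap-worthy URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if sitemap_worthy(page):
            url_el = ET.SubElement(urlset, "url")
            ET.SubElement(url_el, "loc").text = page.url
    return ET.tostring(urlset, encoding="unicode")
```

A parameterized duplicate such as `https://example.com/?ref=x` (canonical pointing at the clean URL), a redirected page, or a noindexed page would all be left out by this filter.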

What’s often overlooked is the compounding effect of technical debt. Neglecting these foundational elements doesn’t just mean missed opportunities; it means actively wasting resources. Every piece of content created, every link built, and every social share earned for a page that isn’t properly indexed is effort effectively nullified. For lean teams, this isn’t just inefficient; it’s a direct drain on an already stretched budget and limited headcount, forcing a reactive scramble later when issues become critical.

Another common pitfall lies in the seemingly minor details of implementation. It’s easy to assume that if a page exists, it will be indexed. However, a stray `noindex` tag, an overly broad `Disallow` directive in `robots.txt`, or even a misconfigured server response can silently block Googlebot. These aren’t theoretical concerns; they’re real-world scenarios that often arise from development cycles, staging environments pushed live without proper checks, or a simple oversight that can render entire sections of a site invisible to search engines for weeks or months.
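A pre-launch check for these silent blockers can be fairly small. The following is a minimal sketch, assuming you already have a page’s status code, response headers, and HTML in hand (fetching is left out). The function and class names are illustrative, and the check is deliberately simplified: it looks for a `noindex` in the `X-Robots-Tag` header and in `robots` or `googlebot` meta tags, and flags any non-200 status.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "").lower()
            self.directives += [d.strip() for d in content.split(",")]


def indexing_blockers(status, headers, html):
    """Return a list of reasons this response would likely be kept out of the index."""
    problems = []
    if status != 200:
        problems.append(f"HTTP status {status}")
    for name, value in headers.items():
        # Plain substring check, so agent-scoped values are matched too.
        if name.lower() == "x-robots-tag" and "noindex" in value.lower():
            problems.append("X-Robots-Tag noindex")
    parser = RobotsMetaParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow".
    if "noindex" in parser.directives or "none" in parser.directives:
        problems.append("meta robots noindex")
    return problems
```

Running something like this against a handful of key templates after each deploy is one way to catch a staging `noindex` that slipped into production.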

Finally, technical health isn’t a “set it and forget it” task. The digital landscape, platform updates, and even internal site changes mean ongoing monitoring and maintenance are critical. The pressure on small teams to constantly deliver new features or content often pushes these essential, but less visible, maintenance tasks to the back burner. This creates a cycle where minor issues fester, eventually demanding a disproportionate amount of time and effort to resolve, pulling resources away from growth initiatives and adding significant stress to already busy teams.

Content Quality and Uniqueness: The Ultimate Indexing Signal

No amount of technical optimization will compensate for low-quality or duplicate content. Google’s primary goal is to serve users the most relevant and valuable information. Your content strategy must reflect this.

- Originality and Value: Create content that genuinely answers user queries, provides unique insights, or solves a problem better than existing content. Avoid thin content, spun articles, or pages with minimal value. Google is increasingly sophisticated at identifying and deprioritizing such content.

- Freshness and Updates: Regularly review and update your existing content to ensure its accuracy and relevance. For evergreen topics, periodic updates signal to Google that your content remains a reliable resource. For timely topics, freshness can influence how quickly and prominently Google surfaces your content.

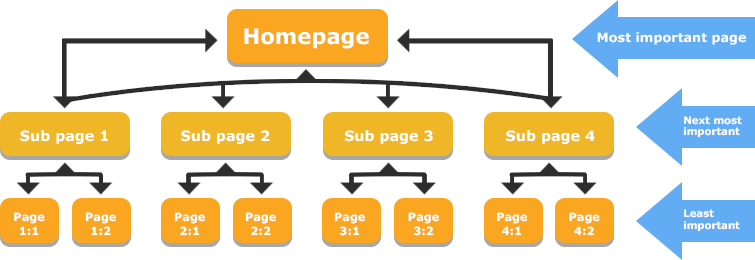

- Internal Linking Strategy: Beyond technical discovery, internal links distribute ‘PageRank’ and topical authority throughout your site. Strategically link from high-authority pages to new or important content you want indexed quickly. Use descriptive anchor text that provides context to both users and search engines.

Figure: Internal linking structure diagram.
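To make the internal linking advice concrete, it can help to count how many distinct pages link to each priority URL. The sketch below assumes a hypothetical `links` mapping (page path to the list of internal paths it links to), which you would need to build from your own crawl. The threshold of two inbound links is an arbitrary illustration, not a Google rule.

```python
from collections import Counter


def inbound_link_counts(links):
    """Count distinct internal pages linking to each page (self-links ignored)."""
    counts = Counter({page: 0 for page in links})
    for source, targets in links.items():
        for target in set(targets):
            if target != source:
                counts[target] += 1
    return counts


def weakly_linked(links, priority_pages, minimum=2):
    """Return priority pages with fewer than `minimum` inbound internal links."""
    counts = inbound_link_counts(links)
    return sorted(page for page in priority_pages if counts[page] < minimum)


# Hypothetical site structure for illustration.
site = {
    "/": ["/services", "/blog"],
    "/blog": ["/services", "/blog/new-guide"],
    "/services": ["/"],
    "/blog/new-guide": [],
    "/pricing": [],
}
print(weakly_linked(site, ["/services", "/blog/new-guide", "/pricing"]))
```

Pages that show up in the result are candidates for a link from a high-authority page such as the homepage or a popular article.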

Leveraging Google Search Console for Indexing Control

Google Search Console (GSC) is your direct line to Google regarding your site’s indexing status. It’s an indispensable tool for any practitioner.

- Coverage Report (labeled ‘Page indexing’ in current versions of GSC): This is your first stop for identifying indexing issues. Monitor pages reported as not indexed or in error (older versions of the report call these ‘Excluded’ and ‘Error’). Understand the reasons (e.g., ‘Crawled – currently not indexed,’ ‘Blocked by robots.txt,’ ‘Page with redirect’) and address them systematically.

- URL Inspection Tool: Use this to debug specific pages. You can see how Google last crawled and rendered a URL, request re-indexing, and identify any issues preventing indexing. This is invaluable for troubleshooting new content or pages that aren’t appearing in search results.

- Sitemaps Report: Monitor the status of your submitted sitemaps. Ensure Google is processing them correctly and that the number of submitted URLs aligns with the number of indexed URLs. Discrepancies here often point to underlying content or technical issues.

- Removals Tool: Use this strategically to temporarily block specific URLs from appearing in Google Search results if you need to quickly de-index sensitive or outdated content. Remember, this is a temporary measure; for permanent removal, use a ‘noindex’ tag or remove the content.
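The sitemap comparison described above boils down to a set difference. Here is a rough sketch, assuming you have two plain lists of URLs: the ones in your sitemap and the ones reported as indexed (for example, copied from a GSC export; the export format itself is not assumed here). The `normalize` helper is a simplification that only lowercases the host, drops fragments, and treats an empty path as `/`.

```python
from urllib.parse import urlsplit, urlunsplit


def normalize(url):
    """Lowercase scheme and host, drop the fragment, and default the path to '/'."""
    parts = urlsplit(url.strip())
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), parts.path or "/", parts.query, "")
    )


def sitemap_gap(submitted, indexed):
    """Compare submitted sitemap URLs against indexed URLs."""
    submitted_set = {normalize(u) for u in submitted}
    indexed_set = {normalize(u) for u in indexed}
    return {
        "submitted_not_indexed": sorted(submitted_set - indexed_set),
        "indexed_not_submitted": sorted(indexed_set - submitted_set),
    }
```

URLs under `submitted_not_indexed` are the ones to run through the URL Inspection Tool first; URLs under `indexed_not_submitted` are worth checking for duplicates or pages you meant to noindex.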

Strategic Decisions: What to Prioritize, Delay, and Avoid

Operating with limited resources means making tough choices. Here’s a practitioner’s take on where to focus your indexing efforts.

Prioritize Today:

- Core Technical Health: Ensure your site is fast, mobile-friendly, and free of critical crawl errors. This is foundational.

- High-Quality, Unique Content: Invest in creating truly valuable content that serves your audience. This is the strongest signal for indexing and ranking.

- Strategic Internal Linking: Guide Googlebot and users through your site, distributing authority and context effectively.

- Google Search Console Monitoring: Regularly check your GSC Coverage report and address critical indexing issues promptly.

Delay for Later:

- Crawl Budget Micro-Optimization: For most small to mid-sized businesses, obsessing over minute crawl budget optimizations is a low-priority task. Unless you have hundreds of thousands of pages or a highly dynamic site with frequent changes, Googlebot will likely crawl your important content sufficiently if your technical foundation is sound.

- Reacting to Every Algorithm Update: Chasing every minor algorithm update with drastic site-wide changes is often counterproductive. Focus on consistent, high-quality work rather than reacting to every tremor in the SEO landscape.

- Complex JavaScript SEO Fixes: Without clear, data-backed evidence of indexing issues, these can be a significant time sink with minimal immediate return.

Avoid Entirely:

- Duplicate Content: Actively work to eliminate or consolidate duplicate content issues through canonicalization or redirects. This wastes crawl budget and dilutes your ranking signals.

- Blocking Essential Resources: Never block CSS, JavaScript, or images that are critical for rendering your page. Google needs to see your page as a user would.

- Relying Solely on Sitemaps: Don’t assume submitting a sitemap guarantees indexing. It’s a discovery tool, not a magic bullet. Underlying quality and technical issues must still be addressed.

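- Ignoring User Experience: Pages that frustrate visitors are often the same pages Google judges to be low quality. Engagement metrics such as bounce rate or time on page are not confirmed indexing signals, but treat them as symptoms: a technically crawlable page that users abandon quickly may not be one Google considers worth indexing and ranking.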
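For the point about blocking essential resources, a quick check against your `robots.txt` can be sketched with Python’s standard `urllib.robotparser`. The function name and sample rules below are illustrative. Note that the standard-library parser does not replicate every matching rule Google applies (wildcards, for example), so treat the result as a rough first pass rather than a definitive verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt, resource_urls, agent="Googlebot"):
    """Return the resource URLs that the given robots.txt rules disallow for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in resource_urls if not parser.can_fetch(agent, url)]


# Hypothetical rules and assets for illustration.
rules = "User-agent: *\nDisallow: /assets/\nDisallow: /admin/\n"
assets = [
    "https://example.com/assets/site.css",
    "https://example.com/assets/app.js",
    "https://example.com/images/hero.png",
]
print(blocked_resources(rules, assets))
```

Any CSS or JavaScript file that appears in the output is a candidate for an explicit `Allow` rule or a narrower `Disallow`.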
