The rise of AI agents means your website content isn’t just for human eyes and traditional search algorithms anymore. These agents, whether powering search results, conversational interfaces, or specialized tools, need to understand, summarize, and act on your information with precision. This article outlines the critical technical SEO strategies that go beyond basic structured data to ensure your content is not just found, but truly comprehended and utilized by AI agents, helping you secure organic visibility and drive business growth.

By implementing these pragmatic approaches, you’ll gain a tangible advantage. You’ll learn where to focus your limited resources for maximum impact, ensuring your site’s technical foundation supports both current and future AI-driven content consumption patterns. This isn’t about chasing every new trend, but about solidifying the core technical signals that matter.

Prioritizing Foundational Clarity for AI Agents

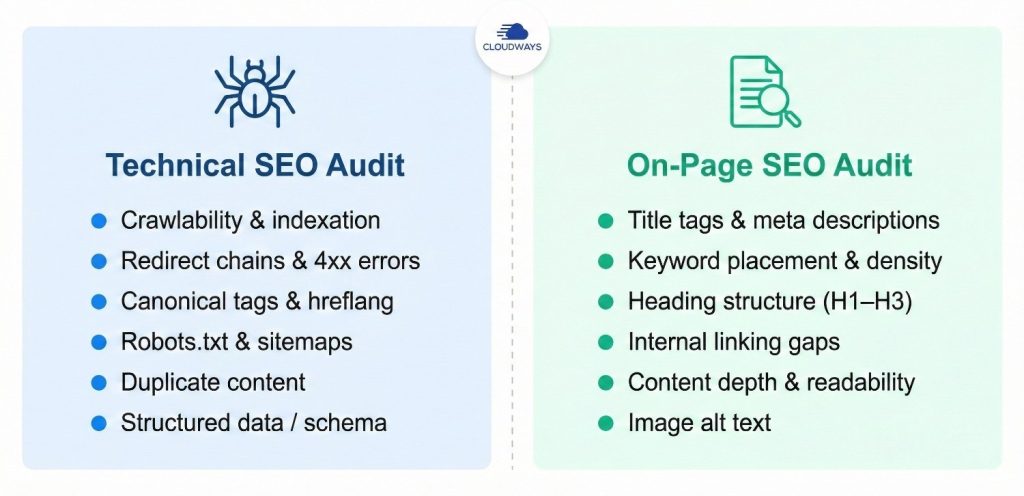

Before diving into advanced tactics, make sure your site’s fundamental technical health is sound. AI agents, much like traditional crawlers, need to discover, crawl, and index your content efficiently, and any roadblock here renders all other efforts moot. Start with a thorough audit of your crawlability and indexability: check your robots.txt file for unintended blocks, verify that your XML sitemaps are up to date and correctly submitted, and resolve any canonicalization issues that might confuse agents about which version of a page you prefer.

This foundational work is non-negotiable. If AI agents can’t access or correctly interpret your pages, your content effectively doesn’t exist to them. It’s a low-cost, high-impact area where even small teams can make significant progress quickly.

- Crawl Budget Optimization: Ensure important pages are prioritized for crawling.

- Canonical Tags: Use them consistently to prevent duplicate content issues.

- Sitemap Accuracy: Keep your XML sitemaps clean and updated.
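
As a rough illustration of the robots.txt check described above, here is a minimal Python sketch that uses the standard library's `urllib.robotparser` to report which of your important URLs a given robots.txt would block. The robots.txt contents, the `example.com` URLs, and the helper name `blocked_urls` are all hypothetical placeholders, and the standard-library parser only approximates how any particular crawler or AI agent interprets the file.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "*") -> list[str]:
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    # Parse the rules from a string so the check works without a network call.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt: the /blog/ rule is the kind of unintended block to look for.
ROBOTS = """\
User-agent: *
Disallow: /drafts/
Disallow: /blog/
"""

# Hypothetical list of pages you want agents to reach.
IMPORTANT = [
    "https://example.com/",
    "https://example.com/blog/ai-seo",
    "https://example.com/pricing",
]

for url in blocked_urls(ROBOTS, IMPORTANT):
    print("Blocked by robots.txt:", url)
```

In practice you would feed this your live robots.txt and the URLs from your XML sitemap, and repeat the check for each user agent you care about.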

Semantic HTML and Content Structure

While structured data provides explicit signals, well-formed semantic HTML offers implicit, yet powerful, cues to AI agents about your content’s meaning and hierarchy. Think of it as providing a clear outline for an intelligent reader. Use <h1> for your main page title, <h2> for major sections, and <h3> for sub-sections. This isn’t just for visual appeal; it tells AI agents the relative importance and relationship of different content blocks.

Beyond headings, leverage other semantic elements. Use <ul> and <ol> for lists, <p> for paragraphs, and <strong> or <em> for emphasis where appropriate. Avoid using generic <div> tags for structural elements that have more specific semantic counterparts. This clarity helps AI agents accurately extract key information, summarize content, and answer specific queries based on your page’s structure.

What often gets overlooked in practice is the subtle but significant difference between content that looks structured and content that is semantically structured. It’s easy to fall into the trap of using a <div> with custom styling to make text appear like a heading, or to skip heading levels (e.g., going straight from <h1> to <h3>) for visual layout reasons. While this might satisfy a designer’s immediate aesthetic, it actively misleads AI agents, breaking the logical flow and hierarchy your content is supposed to convey. The hidden cost here isn’t just a minor inconvenience; it’s a degradation of your content’s machine-readability, leading to less accurate AI interpretations and potentially poorer performance in AI-driven search or content summarization.
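
One way to catch skipped heading levels before they ship is a small automated check. The sketch below, which assumes nothing beyond Python's standard `html.parser`, records the heading levels in document order and reports any jump of more than one level, such as an <h1> followed directly by an <h3>. The class and function names and the sample markup are illustrative only, and the check cannot detect a styled <div> posing as a heading.

```python
from html.parser import HTMLParser


class HeadingAudit(HTMLParser):
    """Collects heading levels (1-6) in the order they appear in the markup."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "h1".."h6" is all we need to match.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_heading_levels(html: str) -> list[str]:
    """Report every place where a heading jumps down more than one level."""
    audit = HeadingAudit()
    audit.feed(html)
    issues = []
    for prev, cur in zip(audit.levels, audit.levels[1:]):
        if cur > prev + 1:
            issues.append(f"<h{prev}> is followed by <h{cur}>")
    return issues


# Hypothetical page fragment with one skipped level.
SAMPLE = "<h1>Guide</h1><p>Intro</p><h3>Details</h3><h2>Next steps</h2><h3>Checklist</h3>"

for issue in skipped_heading_levels(SAMPLE):
    print("Skipped heading level:", issue)
```

Moving back up the hierarchy (for example from <h3> to <h2>) is treated as valid, since that simply starts a new section.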

This discrepancy creates a downstream effect: as AI models become more sophisticated, content that lacks true semantic integrity will struggle to compete. Teams under pressure often prioritize immediate visual output over underlying structural correctness, viewing the latter as an optional nicety. However, the cumulative effect of these small compromises across many pages creates significant technical debt. For small to mid-sized teams, a full, retroactive audit and rewrite of every single legacy page for perfect semantic HTML is often an unrealistic endeavor. Instead, prioritize applying correct semantic structure to all new content being published and focus your cleanup efforts on your top 10-20% most critical, high-traffic existing pages. This pragmatic approach ensures future content is optimized while addressing the most impactful areas of your current library without overwhelming limited resources.

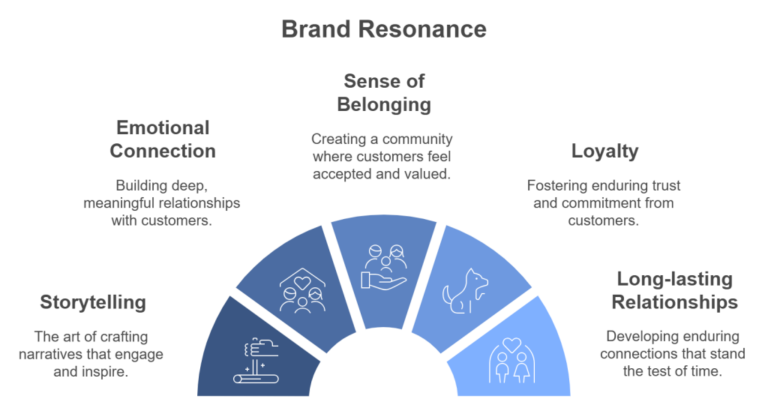

Internal Linking Architecture for Context and Authority

Your internal linking structure is a powerful signal for both human users and AI agents. It dictates how authority flows through your site and, crucially, how different pieces of content relate to each other. For AI agents, a logical internal linking strategy helps them understand the depth and breadth of your expertise on a given topic. When relevant pages link to each other with descriptive anchor text, it reinforces topical clusters and demonstrates your site’s authority.
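
To review how your pages actually describe each other, it can help to list the anchor text used for every internal link on a page. The following is a minimal sketch using only the Python standard library; the page URL, the sample markup, and the names `LinkCollector` and `internal_anchor_texts` are hypothetical. It treats a link as internal when its host matches the page's host, which is a simplifying assumption that ignores subdomains.

```python
from collections import defaultdict
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Groups the anchor text of same-host links by destination URL."""

    def __init__(self, page_url: str):
        super().__init__()
        self.page_url = page_url
        self.site = urlparse(page_url).netloc
        self.anchors = defaultdict(list)
        self._href = None  # destination of the internal link being read, if any
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                target = urljoin(self.page_url, href)  # resolve relative links
                if urlparse(target).netloc == self.site:
                    self._href = target
                    self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse whitespace; an image-only link yields an empty string.
            text = " ".join("".join(self._text).split())
            self.anchors[self._href].append(text)
            self._href = None


def internal_anchor_texts(page_url: str, html: str) -> dict[str, list[str]]:
    collector = LinkCollector(page_url)
    collector.feed(html)
    return dict(collector.anchors)


# Hypothetical page and markup.
PAGE = "https://example.com/blog/ai-seo"
HTML = (
    '<p>See our <a href="/guides/semantic-html">guide to semantic HTML</a> and '
    '<a href="https://other.example.org/x">an external source</a>. '
    '<a href="/guides/semantic-html"><img src="a.png"></a></p>'
)

for target, texts in internal_anchor_texts(PAGE, HTML).items():
    print(target, texts)
```

Links that come back with empty anchor text, or the same vague wording for many different destinations, are reasonable candidates for a manual rewrite.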

Focus on creating clear, descriptive anchor text that accurately reflects the destination page’s content. Avoid generic phrases like
