The landscape of search is rapidly evolving, driven by advanced AI models that understand context and intent far beyond simple keywords. For small to mid-sized businesses, this means traditional technical SEO, while still foundational, isn’t enough to secure discoverability. This article cuts through the noise to give you a pragmatic roadmap: what technical SEO efforts genuinely move the needle today, what you can afford to delay, and what to avoid entirely, all within the constraints of limited budgets and team capacity.

You’ll gain actionable insights to ensure your website is not just crawlable and indexable, but also semantically clear and trustworthy to the AI systems now powering search results and content generation. This isn’t about chasing every new trend, but about solidifying your site’s technical foundation to thrive in an AI-first world.

The AI Shift: From Keywords to Semantic Understanding

For years, technical SEO focused heavily on ensuring search engines could crawl, index, and understand content primarily through keywords and basic page structure. While these remain critical, AI-driven search, exemplified by generative AI features and evolving ranking algorithms, now prioritizes a deeper, semantic understanding of content. AI models don’t just match keywords; they interpret user intent, entity relationships, and the overall context of information. This means your technical SEO must now actively facilitate this deeper comprehension, making your content not just visible, but truly understandable to sophisticated AI systems.

Foundational Technical SEO: Still Non-Negotiable

Some technical SEO elements are timeless and remain the bedrock of any successful online presence. These are your absolute must-haves, regardless of AI advancements:

- Crawlability and Indexability: If search engines can’t find and process your pages, nothing else matters. Ensure your robots.txt isn’t blocking essential content, your sitemaps are up to date, and there are no stray noindex tags on pages you want discovered. Regularly check Google Search Console for crawl errors.

- Page Experience & Core Web Vitals: User experience is a direct signal. Fast loading times, mobile responsiveness, and visual stability (Core Web Vitals) are crucial. AI-driven systems are also likely to favor sites that offer a superior experience. Prioritize fixing major performance bottlenecks.

- HTTPS Security: A secure connection is a basic trust signal for both users and search engines. Ensure all pages are served over HTTPS.

While the foundational elements of crawlability and indexability are clear, the practical challenge often lies in their sustained maintenance. It’s easy to configure robots.txt and sitemaps once and then overlook them, especially after significant site changes like a platform migration or a major content restructuring. This oversight isn’t benign; an unoptimized or outdated setup leads to inefficient crawl budget allocation. Search engines spend less time discovering your valuable pages, delaying the indexing of new content or critical updates. The downstream effect is a persistent lag in how your site’s freshness and relevance are perceived, which can be a quiet source of frustration for content teams when their latest efforts don’t gain traction as expected.
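One way to keep this from slipping after a migration is a small automated check. The sketch below uses Python’s standard urllib.robotparser to confirm that a list of must-be-crawlable URLs is not disallowed. The robots.txt content, the example.com URLs, and the blocked_urls helper are all hypothetical, and Python’s parser follows the basic robots.txt rules only, so treat it as a rough guard rather than a replica of how any particular search engine reads the file.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt left over from a platform migration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /blog/
"""

# Hypothetical pages you expect search engines to reach.
MUST_BE_CRAWLABLE = [
    "https://example.com/",
    "https://example.com/blog/technical-seo-guide",
    "https://example.com/products/widget",
]

for url in blocked_urls(ROBOTS_TXT, MUST_BE_CRAWLABLE):
    print("Blocked by robots.txt:", url)
```

Running a check like this after every deployment or restructuring turns a one-time configuration into something that is actually maintained.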

Similarly, the emphasis on Page Experience and Core Web Vitals, while entirely justified, can introduce a non-obvious failure mode: over-optimization. Teams can get caught in a “whack-a-mole” scenario, spending disproportionate development resources chasing marginal gains in metrics that don’t always translate to a tangible improvement in user experience or business outcomes. The internal pressure to achieve perfect “green” scores can lead to technical debt, complex workarounds, or even compromise other site functionalities, creating friction between marketing, development, and product teams. It’s a practical trade-off where an isolated focus on a metric can sometimes overshadow broader strategic goals and resource allocation.
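A simple triage rule can help keep that effort proportionate. The sketch below rates field measurements against the published Core Web Vitals boundaries (LCP 2.5 s / 4 s, INP 200 ms / 500 ms, CLS 0.1 / 0.25) and lists only what is not yet "good", with "poor" results first. The page paths and measurements are made up for illustration, and the rate and triage helpers are hypothetical names, not part of any tool.

```python
# "Good" and "poor" boundaries for the three Core Web Vitals.
THRESHOLDS = {
    "LCP": (2500, 4000),  # Largest Contentful Paint, milliseconds
    "INP": (200, 500),    # Interaction to Next Paint, milliseconds
    "CLS": (0.1, 0.25),   # Cumulative Layout Shift, unitless
}


def rate(metric: str, value: float) -> str:
    """Classify a single measurement as good, needs improvement, or poor."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


def triage(pages: dict[str, dict[str, float]]) -> list[tuple[str, str, str]]:
    """Return (page, metric, rating) for everything not yet 'good', poor first."""
    findings = [
        (page, metric, rate(metric, value))
        for page, metrics in pages.items()
        for metric, value in metrics.items()
        if rate(metric, value) != "good"
    ]
    # sorted() is stable, so the original page order is kept within each group.
    return sorted(findings, key=lambda finding: finding[2] != "poor")


# Hypothetical field data for three pages.
FIELD_DATA = {
    "/": {"LCP": 2300, "INP": 180, "CLS": 0.05},
    "/products/widget": {"LCP": 4600, "INP": 240, "CLS": 0.02},
    "/blog/seo-guide": {"LCP": 2700, "INP": 150, "CLS": 0.31},
}

for page, metric, rating in triage(FIELD_DATA):
    print(f"{page}: {metric} is {rating}")
```

Anything already rated "good" drops off the list entirely, which is the point: once a metric clears the bar, further tuning competes with work that has a clearer payoff.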

New Priorities for AI-Driven Discoverability

To truly optimize for the AI era, your technical SEO strategy needs to evolve. These areas now demand increased attention:

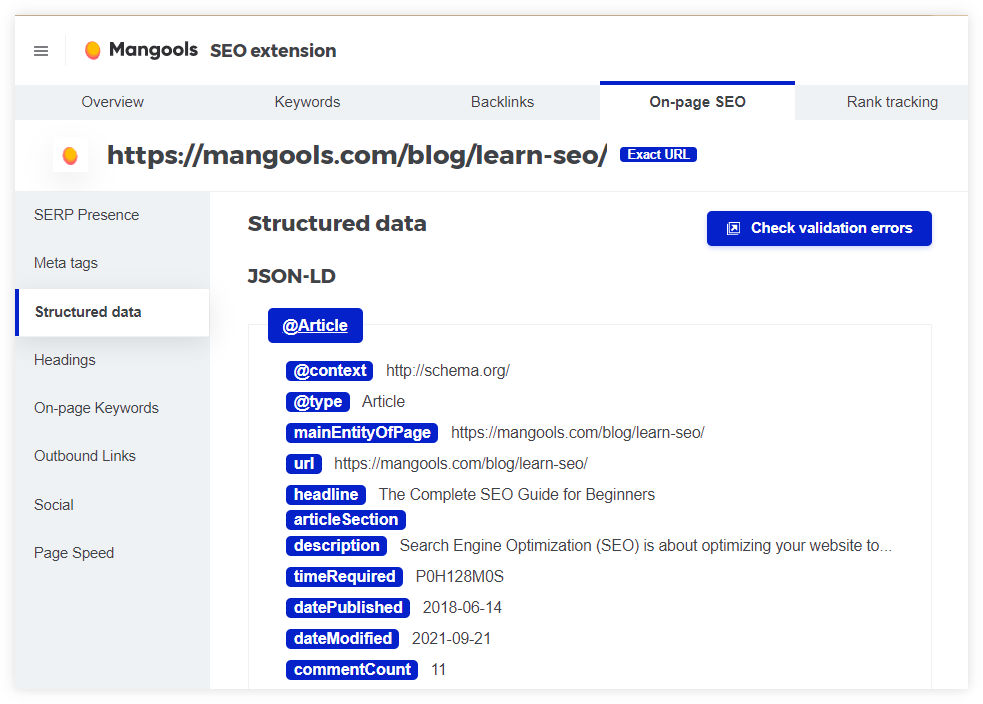

Structured Data for Semantic Clarity

This is arguably the most impactful technical lever for AI discoverability today. Structured data, using Schema.org vocabulary, provides explicit clues to search engines about the entities on your page (products, services, articles, people, organizations) and their relationships. AI models can leverage this data to better understand your content’s context, generate rich snippets, and even inform generative AI responses. For SMBs, prioritize:

- Product Schema: If you sell anything, this is essential for e-commerce.

- Article Schema: For blog posts and informational content.

- LocalBusiness Schema: Critical for local service businesses.

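- FAQPage and HowTo Schema: Mark up common questions and step-by-step instructions so they are easy for AI systems to parse. Google has sharply limited FAQ rich results and retired HowTo rich results, so treat these types as aids to machine understanding rather than a route to rich snippets.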

Implement this using JSON-LD. Focus on accuracy and completeness for the types most relevant to your business.
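As a rough illustration, here is a minimal sketch that builds a LocalBusiness JSON-LD snippet from plain values. The property names come from the Schema.org vocabulary; the local_business_jsonld function and the business details are hypothetical, and a real implementation would likely include more properties, such as opening hours.

```python
import json


def local_business_jsonld(name, url, telephone, street, city, region, postal_code, country):
    """Build a <script> tag containing LocalBusiness structured data."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
    }
    # Escape "</" so a value can never close the surrounding <script> tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical business details for illustration only.
print(local_business_jsonld(
    name="Example Plumbing Co.",
    url="https://example.com/",
    telephone="+1-555-0100",
    street="123 Main St",
    city="Springfield",
    region="IL",
    postal_code="62701",
    country="US",
))
```

Generating the markup from the same data that feeds the visible page, rather than hand-editing it, makes it easier to keep the two in agreement.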

Content E-E-A-T Signals (Technical Angle)

Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) matter a great deal to AI systems evaluating content. E-E-A-T is mostly a matter of the content itself, but technical elements play a supporting role:

- Author Bios & Profiles: Ensure clear, linked author profiles with credentials on your content pages.

- Publication Dates: Display accurate and updated publication dates.

- Internal Linking to Authoritative Sources: Link to your own established, high-quality content to build topical authority.

- Secure Hosting & Privacy Policies: Technically sound security and transparent privacy policies build trust.

Semantic HTML & Accessibility

Clean, well-structured HTML isn’t just for human readability or accessibility tools; it’s a direct input for AI models. Using semantic HTML tags (<header>, <nav>, <main>, <article>, <section>, <footer>, <h1>-<h6>, <p>, <ul>, <ol>) helps AI parse your content’s hierarchy and meaning more accurately. Ensure your site is accessible, as this often correlates with good semantic structure, which AI can interpret more effectively.
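A quick structural audit can be scripted with Python’s built-in html.parser. The sketch below looks for landmark elements, counts h1 headings, and flags skipped heading levels. The specific rules (four required landmarks, exactly one h1, no level jumps) are assumed house conventions rather than requirements of the HTML specification, and the OutlineChecker and audit names are hypothetical.

```python
from html.parser import HTMLParser


class OutlineChecker(HTMLParser):
    """Collect landmark elements and heading levels from an HTML document."""

    LANDMARKS = {"header", "nav", "main", "footer"}

    def __init__(self):
        super().__init__()
        self.headings = []      # heading levels in document order, e.g. [1, 2, 2, 3]
        self.landmarks = set()  # landmark tags seen at least once

    def handle_starttag(self, tag, attrs):
        if tag in self.LANDMARKS:
            self.landmarks.add(tag)
        elif len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.headings.append(int(tag[1]))


def audit(html: str) -> list[str]:
    """Return a list of structural problems found in the page."""
    checker = OutlineChecker()
    checker.feed(html)
    problems = []
    for missing in sorted(OutlineChecker.LANDMARKS - checker.landmarks):
        problems.append(f"missing <{missing}> element")
    h1_count = checker.headings.count(1)
    if h1_count != 1:
        problems.append(f"expected exactly one <h1>, found {h1_count}")
    for prev, cur in zip(checker.headings, checker.headings[1:]):
        if cur > prev + 1:
            problems.append(f"heading level jumps from h{prev} to h{cur}")
    return problems


# Hypothetical page fragment with no <nav>, no <footer>, and a skipped level.
SAMPLE = "<body><header><h1>Title</h1></header><main><h2>A</h2><h4>B</h4></main></body>"
for problem in audit(SAMPLE):
    print(problem)
```

A check like this suits templates better than individual pages: fix the template once and every page built from it inherits the cleaner structure.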

Internal Linking & Information Architecture

A robust internal linking structure helps AI understand the relationships between your content pieces and the overall depth of your topical coverage. Think of it as building a knowledge graph within your own site. Create clear topic clusters and ensure important pages are linked from relevant, authoritative internal sources. This signals to AI which content is most important and how different pieces relate, improving discoverability for complex queries.
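To make this concrete, the sketch below treats the site as a small link graph and counts how many distinct pages link to each page. Pages with no inbound links (orphans) are the ones AI systems and crawlers are least likely to reach. The site map is hypothetical and hand-written here; in practice you would build it from a crawl export, and the function names are illustrative only.

```python
from collections import Counter


def inbound_link_counts(links: dict[str, list[str]]) -> Counter:
    """Count distinct pages linking to each page, ignoring self-links."""
    counts = Counter({page: 0 for page in links})
    for source, targets in links.items():
        for target in set(targets):  # repeated links from one page count once
            if target != source:
                counts[target] += 1
    return counts


def orphan_pages(links: dict[str, list[str]], home: str = "/") -> list[str]:
    """Return pages that no other page links to, excluding the home page."""
    counts = inbound_link_counts(links)
    return sorted(page for page, n in counts.items() if n == 0 and page != home)


# Hypothetical site: each page maps to the internal pages it links to.
SITE = {
    "/": ["/services", "/blog/seo-guide"],
    "/services": ["/", "/contact"],
    "/blog/seo-guide": ["/services"],
    "/blog/old-post": ["/"],
    "/contact": [],
}

print("Inbound links:", dict(inbound_link_counts(SITE)))
print("Orphan pages:", orphan_pages(SITE))
```

The same counts also show whether the pages you consider most important are the ones receiving the most internal links.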

What often gets overlooked in the push for structured data is the ongoing maintenance. Implementing Schema.org is a significant upfront effort, but the real challenge lies in ensuring it remains accurate and complete as your content, products, or services evolve. Data drift – where your structured data no longer perfectly reflects the on-page content – is a common, non-obvious failure mode. This isn’t just a missed opportunity; it can send conflicting signals to AI, potentially undermining trust or leading to misinterpretations that are harder to diagnose than a simple missing tag. Teams often lack a clear process for auditing and updating this data, turning a strategic asset into a source of technical debt and frustration.
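One lightweight audit for drift is to compare what the structured data declares with what the page visibly says. The sketch below extracts JSON-LD blocks and the h1 text with Python’s built-in html.parser and reports any mismatch between the JSON-LD name and the heading. Comparing name against the h1 is an assumed heuristic, not a standard; the same pattern could be extended to prices or dates. The class and function names are hypothetical.

```python
import json
from html.parser import HTMLParser


class PageFacts(HTMLParser):
    """Collect the visible <h1> text and the raw JSON-LD blocks from a page."""

    def __init__(self):
        super().__init__()
        self._capture = None
        self.h1 = ""
        self.jsonld_raw = []

    def handle_starttag(self, tag, attrs):
        if tag == "h1":
            self._capture = "h1"
        elif tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._capture = "jsonld"
            self.jsonld_raw.append("")

    def handle_endtag(self, tag):
        if tag in ("h1", "script"):
            self._capture = None

    def handle_data(self, data):
        if self._capture == "h1":
            self.h1 += data
        elif self._capture == "jsonld":
            self.jsonld_raw[-1] += data


def name_drift(html: str) -> list[str]:
    """Report JSON-LD 'name' values that no longer match the visible heading."""
    facts = PageFacts()
    facts.feed(html)
    visible = facts.h1.strip()
    issues = []
    for raw in facts.jsonld_raw:
        item = json.loads(raw)
        declared = item.get("name", "") if isinstance(item, dict) else ""
        if declared and declared.strip().lower() != visible.lower():
            issues.append(f'JSON-LD name "{declared}" does not match <h1> "{visible}"')
    return issues


# Hypothetical page where the product was renamed but the markup was not updated.
PAGE = (
    '<html><head><script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "Product", "name": "Widget Pro"}'
    "</script></head><body><h1>Widget Pro 2</h1></body></html>"
)

for issue in name_drift(PAGE):
    print(issue)
```

Run on a schedule against your key templates, a check like this gives teams the auditing process they often lack, without requiring a full crawl.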

Similarly, the pursuit of E-E-A-T signals and semantic HTML can fall into the “good enough” trap. While basic implementation might pass initial checks, relying on merely adequate signals creates a ceiling for discoverability. AI models are constantly learning to discern nuance and depth. A site that is technically sound but not exceptional in its semantic structure or E-E-A-T signals will find it increasingly difficult to compete for complex, multi-faceted queries. This isn’t an immediate penalty, but a slow, downstream erosion of competitive advantage, as AI prioritizes content that offers clearer, more robust signals of quality and relevance.

Finally, while internal linking is critical for building an on-site knowledge graph, the practical execution often devolves into a “link farm” anti-pattern. The theory suggests strategic connections; the practice can become a scramble to link every keyword mention, diluting the authority and clarity of your information architecture. This over-linking makes it harder for AI to distinguish truly important relationships from noise, and it puts significant decision pressure on content teams to make judgment calls about link relevance under tight deadlines, often without a clear, scalable framework.

What to Deprioritize or Delay Today

With limited resources, not everything deserves immediate attention. For SMBs, you should deprioritize or delay:

- Obsessive Micro-Optimizations: Spending hours on minor technical fixes that have negligible impact on user experience or AI understanding (e.g., shaving milliseconds off a page load time that’s already excellent, or fixing obscure console warnings that don’t affect core functionality). Focus on high-impact issues first.

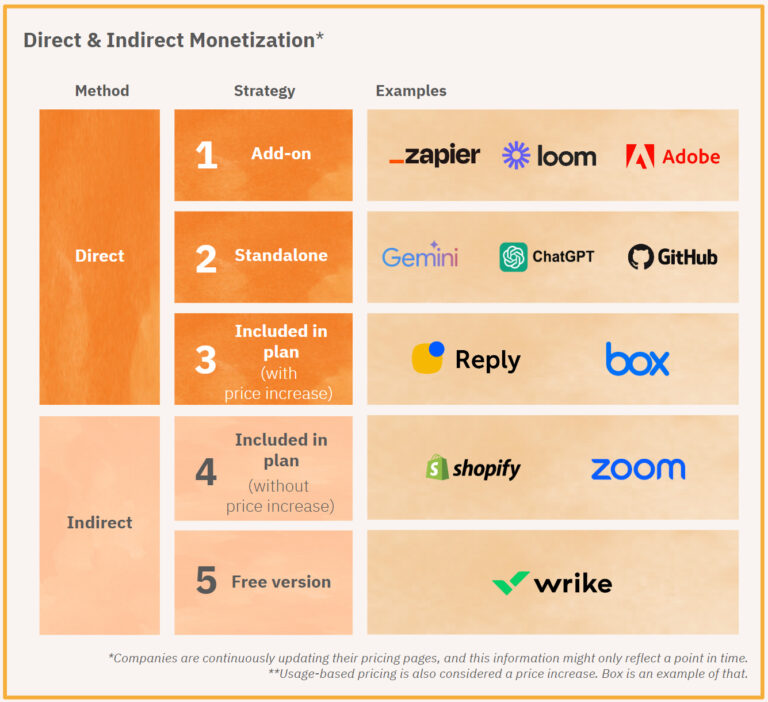

- Chasing Every New AI-Related Trend: Avoid implementing every new AI-driven feature or tool without a clear understanding of its practical benefit and ROI. Many are experimental or niche. Stick to established best practices that support AI understanding, like structured data, before diving into speculative tactics.

- Over-optimization for Specific Keywords: While keywords still matter, the era of stuffing exact-match keywords is over. AI understands synonyms, related concepts, and user intent. Focus on natural language and comprehensive topic coverage rather than rigid keyword density targets.

Actionable Steps for SMBs Now

Here’s a practical sequence for your technical SEO efforts in 2026:

- Audit Core Web Vitals: Use Google Search Console and Lighthouse to identify and fix major performance issues. Prioritize mobile.

- Implement Key Structured Data: Start with the most relevant Schema.org types for your business (Product, Article, LocalBusiness, FAQPage). Use Google’s Rich Results Test to validate.

- Review Internal Linking: Ensure your most important content is well-linked internally, creating clear topic clusters.

- Enhance Semantic HTML: Work with your developers to ensure new content and template updates use semantic HTML correctly.

- Monitor AI-Driven Search Changes: Keep an eye on official announcements from major search engines regarding how AI impacts discoverability, but filter for practical, actionable advice relevant to SMBs.

Navigating the Future of Discoverability

The shift towards AI-driven search is not a temporary trend but a fundamental evolution. For small to mid-sized businesses, success in this new environment hinges on a pragmatic, focused approach to technical SEO. By prioritizing semantic clarity through structured data, reinforcing E-E-A-T signals, and maintaining a robust technical foundation, you can ensure your content remains discoverable and valuable, even as the search landscape continues to transform.
