What Responsible AI Means for Your Business (and What It Doesn’t)

Deploying AI tools offers significant advantages, but without a responsible approach you risk unintended consequences that erode customer trust and create compliance headaches. This guide skips the theory and gives you actionable steps for implementing ethical AI practices in a small or mid-sized business. You’ll learn how to prioritize efforts, mitigate common risks, and build trust with limited resources, so that your AI initiatives genuinely support growth.

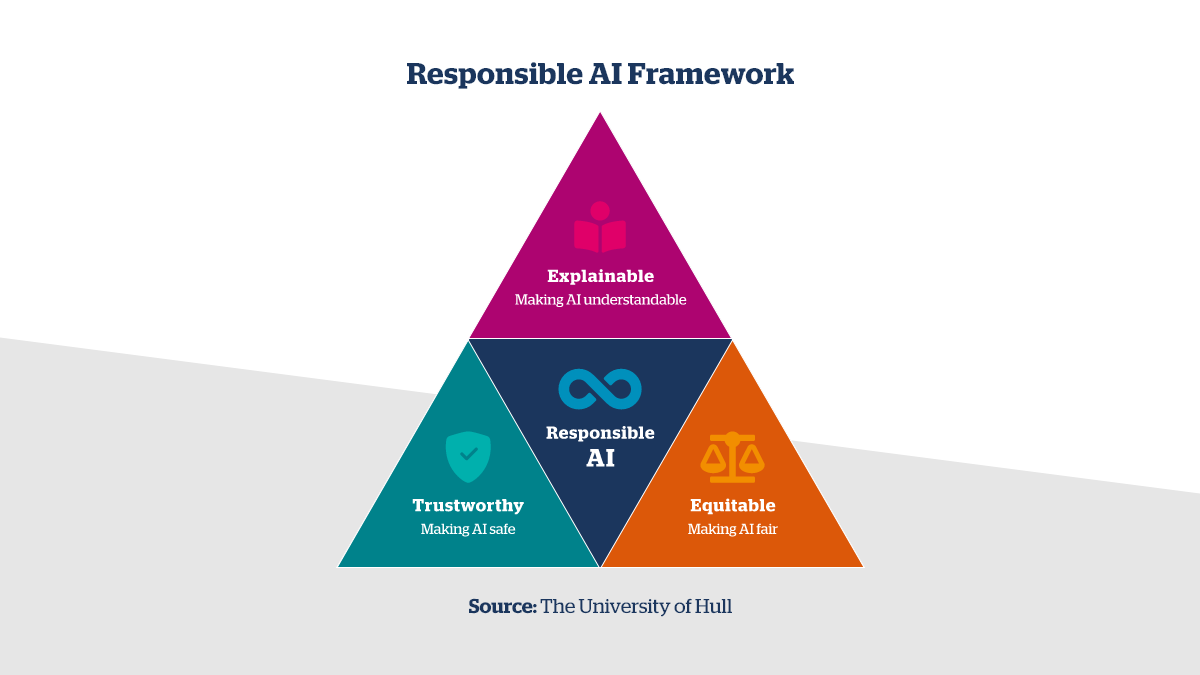

For small to mid-sized businesses, responsible AI isn’t about achieving theoretical perfection or establishing a dedicated ethics committee. It’s about a pragmatic approach to minimizing harm, ensuring fairness, and building trust in your AI-powered operations. This means understanding the core principles – fairness, transparency, accountability, and privacy – and applying them in a way that fits your operational realities. It doesn’t require a massive budget, but it does demand thoughtful integration and continuous vigilance.

Prioritizing Your Responsible AI Efforts: Where to Start

Given limited resources, prioritization is key. Start by focusing your responsible AI efforts on applications that directly interact with customers or influence critical business decisions. These are the areas with the highest potential for both positive impact and significant risk if mishandled. Think about your customer service chatbots, personalized marketing engines, or lead scoring systems.

- Conduct a Simple Risk Assessment: For each AI tool you use or plan to implement, ask fundamental questions: What data does it consume? How does it arrive at its decisions? Who are the primary stakeholders affected by its outputs? What are the potential negative outcomes or biases that could emerge? This initial assessment doesn’t need to be exhaustive; it just needs to highlight the most critical areas.

- Focus on High-Impact Areas: Prioritize AI systems that could lead to discriminatory outcomes, privacy breaches, or significant financial harm if they malfunction or operate unfairly.
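One way to make this assessment concrete is a small risk register that you can keep in a script or spreadsheet. The sketch below is illustrative only: the `AITool` fields and the scoring weights are assumptions chosen to mirror the questions above, not an established scoring standard, so adjust them to your own context.

```python
from dataclasses import dataclass


@dataclass
class AITool:
    """One entry in a lightweight AI risk register (hypothetical fields)."""
    name: str
    customer_facing: bool
    uses_personal_data: bool
    influences_critical_decisions: bool
    has_human_review: bool


def risk_score(tool: AITool) -> int:
    """Return a rough priority score; the weights are illustrative assumptions."""
    score = 0
    score += 2 if tool.customer_facing else 0
    score += 2 if tool.uses_personal_data else 0
    score += 3 if tool.influences_critical_decisions else 0
    score += 1 if not tool.has_human_review else 0
    return score


def prioritize(tools):
    """Sort tools so the highest-risk ones come first."""
    return sorted(tools, key=risk_score, reverse=True)
```

Scoring a lead scoring system, a support chatbot, and an internal summarizer this way gives you an ordered list of where to look first. The exact numbers matter less than forcing the same questions to be asked of every tool.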

What you should delay is over-engineering a comprehensive AI ethics policy before you even understand your specific, immediate risks. Start small, address the most pressing concerns, and allow your policies to evolve with your AI adoption.

The temptation to build a perfectly comprehensive AI ethics policy from the outset is understandable, but it often leads to a different kind of operational drag. In practice, small teams can get mired in theoretical debates and edge cases that haven’t materialized, delaying any real action. This isn’t just a time sink; it creates a policy that is often too generic or abstract to provide actionable guidance when specific, real-world dilemmas arise, leaving practitioners frustrated and without clear direction.

A more insidious, second-order consequence of this ‘policy-first’ approach is the creation of ‘shelfware’ – a well-intentioned document that sits unused because it doesn’t integrate with daily workflows or address the immediate, tangible risks. This can foster a false sense of security, where the organization believes it has ‘addressed’ responsible AI simply by having a policy, while the actual systems continue to operate without practical, embedded ethical guardrails. The real-world impact of AI is often felt long before a perfectly crafted policy can be implemented, making iterative, risk-focused action far more effective.

Finally, it’s easy to overlook that responsible AI isn’t a one-time setup; it’s an ongoing operational commitment. Even well-designed systems can drift over time as data inputs change, user behaviors evolve, or new societal norms emerge. Without continuous monitoring, auditing, and a clear process for adaptation, an AI system that was responsible yesterday can develop new biases or vulnerabilities tomorrow. This sustained effort is a significant and often underestimated cost, and it needs dedicated attention well beyond the initial deployment.

Practical Steps for Bias Mitigation

Bias is a pervasive challenge in AI, often stemming from the data used to train models or the design of the algorithms themselves. For SMBs, the focus should be on practical detection and mitigation strategies.

- Data Audits: Regularly review your training data for representational bias. Are certain demographics, regions, or customer segments underrepresented or overrepresented? Incomplete or skewed data is a primary source of AI bias.

- Diverse Teams: Involve diverse perspectives in the development, testing, and deployment of your AI tools. A team with varied backgrounds is more likely to spot potential biases that a homogeneous group might miss.

- Targeted Testing: Beyond general quality assurance, specifically test your AI models with diverse datasets to identify disparate impacts on different user groups. Look for performance gaps or unfair outcomes across various demographic slices.

- Establish Feedback Loops: Create clear, accessible mechanisms for users or customers to report perceived biased outcomes or unfair decisions made by your AI systems. This human oversight is crucial for catching issues that automated tests might miss.
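A data audit for representational bias can start very simply: compare how often each group appears in your training data with the share you would expect. The following is a minimal sketch, assuming your records are dictionaries and that you can supply `expected_shares` yourself (for example, from your customer base). The function name and the 5% tolerance are hypothetical choices.

```python
from collections import Counter


def representation_gaps(records, field, expected_shares, tolerance=0.05):
    """Return groups whose observed share differs from the expected share
    by more than `tolerance`, as {group: (observed, expected)}."""
    counts = Counter(r[field] for r in records)
    total = sum(counts.values())
    gaps = {}
    for group, expected in expected_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if abs(observed - expected) > tolerance:
            gaps[group] = (observed, expected)
    return gaps
```

An empty result means every listed group is within tolerance. Groups that appear in the data but not in `expected_shares` are ignored, so keep that mapping complete.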

Even with thorough data audits, the world doesn’t stand still. Customer behaviors shift, market demographics evolve, and new trends emerge. What was representative six months ago might now be subtly skewed. The hidden cost here is the assumption of static data. Without ongoing, periodic re-audits, or at least a robust monitoring system for data drift, your “mitigated” bias can slowly creep back in, leading to models that become less relevant and potentially unfair over time. This isn’t just about initial cleanup; it’s about continuous vigilance, which often gets deprioritized when immediate project deadlines loom.
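A lightweight way to watch for this kind of drift is to compare the distribution of a categorical input today against a baseline snapshot. The sketch below uses the population stability index (PSI), a common drift measure. Treat it as one possible approach rather than a prescribed method; the function names and the small `eps` floor for empty categories are assumptions.

```python
import math
from collections import Counter


def category_shares(values, categories, eps=1e-6):
    """Share of each category in `values`, floored at `eps` to avoid log(0)."""
    if not values:
        raise ValueError("values must not be empty")
    counts = Counter(values)
    total = len(values)
    return {c: max(counts.get(c, 0) / total, eps) for c in categories}


def population_stability_index(baseline, current):
    """PSI between two samples of a categorical feature.
    0.0 means identical distributions; larger values mean more drift."""
    categories = set(baseline) | set(current)
    base = category_shares(baseline, categories)
    curr = category_shares(current, categories)
    return sum(
        (curr[c] - base[c]) * math.log(curr[c] / base[c]) for c in categories
    )
```

As a rule of thumb, practitioners often treat a PSI above roughly 0.25 as a shift worth investigating, but that threshold is a convention, not a standard. Running a check like this monthly on a handful of key inputs is far cheaper than a full re-audit.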

The call for diverse teams and feedback loops is sound in theory, but execution often hits practical snags. A diverse team only mitigates bias if their varied perspectives are genuinely heard and acted upon. In practice, power dynamics, tight deadlines, or a lack of clear processes for integrating critical feedback can render this effort superficial. It’s easy to check the box on “diverse team” without truly empowering them to challenge assumptions or design choices. Similarly, establishing feedback loops is one thing; actually acting on that feedback, especially when it points to fundamental issues requiring significant re-work, is another. Teams often face immense pressure to ship, making it difficult to pause and address nuanced bias reports that don’t immediately break the system. This leads to human frustration and a slow erosion of trust in the feedback mechanism itself.

Targeted testing for disparate impact is critical, but the practical challenge lies in defining “diverse datasets” and the sheer volume of slices required. It’s tempting to focus on a few major demographic categories, but bias often manifests in the long tail – smaller, less obvious subgroups that are harder to isolate and test for. The effort required to gather sufficient, representative data for these granular tests, and then to interpret the results meaningfully, can be substantial. For teams with limited data science resources, this often means making a pragmatic trade-off: focusing on the most impactful, broad categories and accepting that some subtle biases might persist in niche segments simply due to resource constraints. While the ideal is comprehensive coverage, today’s reality for many SMBs means deprioritizing exhaustive testing for every conceivable subgroup. Prioritize the most significant and common user segments first; the long tail can wait until you have more robust data infrastructure and dedicated personnel.
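To illustrate what testing across slices can look like, the sketch below computes the rate of favorable outcomes per group and compares each group to the best-performing one. The 0.8 cut-off follows the widely cited "four-fifths" heuristic, and the `min_samples` flag marks slices too small to judge reliably, which is where the long-tail problem shows up. The names and defaults here are assumptions for illustration.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, favorable) pairs.
    Returns {group: (favorable_rate, sample_count)}."""
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, ok in outcomes:
        totals[group] += 1
        favorable[group] += int(ok)
    return {g: (favorable[g] / totals[g], totals[g]) for g in totals}


def disparate_impact_report(outcomes, threshold=0.8, min_samples=30):
    """Compare each group's favorable rate to the highest group's rate."""
    rates = selection_rates(outcomes)
    if not rates:
        return {}
    best = max(rate for rate, _ in rates.values())
    report = {}
    for group, (rate, n) in rates.items():
        ratio = rate / best if best > 0 else 1.0
        report[group] = {
            "rate": rate,
            "n": n,
            "ratio": ratio,
            "flagged": ratio < threshold,   # below the four-fifths heuristic
            "low_sample": n < min_samples,  # too few records to trust the rate
        }
    return report
```

A flagged group is a prompt for human investigation, not proof of unfairness. A `low_sample` group tells you where you would need more data before drawing any conclusion.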

Ensuring Transparency and Explainability

Transparency builds trust. For SMBs, this means being clear about when and how AI is being used, especially in customer-facing interactions or decision-making processes.

- Clear Disclosures: If a customer is interacting with a chatbot, make it explicit. A simple “You’re chatting with an AI assistant” can prevent frustration and build trust.

- Understand Inputs and Outputs: While deep technical explainability (XAI) might be beyond the scope for many SMBs, you must understand the primary inputs driving your AI’s decisions and the expected range of outputs. For critical applications, ensure you can trace back *why* a particular decision was made, even if it’s a high-level explanation.

- Human in the Loop: For high-stakes decisions (e.g., loan applications, critical customer support), ensure there’s always a human review or override capability. This provides a crucial safety net and maintains accountability.

Avoid deploying “black-box” AI solutions where you cannot understand or audit their decision-making process, especially in areas that could have significant ethical or legal implications for your business or customers.
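The human-in-the-loop idea can be expressed as a simple routing rule: anything high-stakes, or anything the model is unsure about, goes to a person. The sketch below assumes your AI tool exposes a confidence value and a list of the main inputs behind a decision. Many tools do not, and the `Decision` class, field names, and 0.9 threshold are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    """A single AI output plus the context needed to review it (hypothetical)."""
    outcome: str
    confidence: float
    high_stakes: bool
    top_factors: list = field(default_factory=list)


def route(decision: Decision, min_confidence: float = 0.9) -> str:
    """Send high-stakes or low-confidence decisions to a human reviewer."""
    if decision.high_stakes or decision.confidence < min_confidence:
        return "human_review"
    return "auto"


def explain(decision: Decision) -> str:
    """Produce a high-level, human-readable trace of the decision."""
    factors = ", ".join(decision.top_factors) or "no factors recorded"
    return (
        f"{decision.outcome} (confidence {decision.confidence:.2f}); "
        f"main inputs: {factors}"
    )
```

Storing the `explain` output alongside each routed decision gives reviewers and auditors a starting point, even when the underlying model offers no deeper explanation.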

Data Privacy and Security: Non-Negotiables

AI systems often consume vast amounts of data, amplifying existing data privacy and security risks. This area is non-negotiable for responsible AI deployment.

- Data Minimization: Adopt a strict policy of only collecting and using data that is absolutely essential for the AI’s intended purpose. Less data means less risk.

- Anonymization and Pseudonymization: Where feasible, anonymize or pseudonymize data used for AI training and processing. This reduces the risk of re-identification and protects individual privacy.

- Robust Security Measures: Ensure all data pipelines, storage, and AI models are protected with strong encryption, access controls, and regular security audits. Treat AI data with the same, if not greater, level of security as your most sensitive customer information.

- Compliance Awareness: Stay informed and adhere to relevant data protection regulations applicable to your business and customers, such as GDPR or CCPA. Ignorance is not a defense.
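Data minimization and pseudonymization can both be applied before a record ever reaches an AI tool. The following is a minimal sketch using Python's standard `hmac` and `hashlib` modules. The function names, the field names, and the idea of a single secret key are assumptions, and real key management (storage, rotation, access) is out of scope here.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest.
    The same input and key always produce the same token, so records can
    still be linked, but the original value is not stored."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, allowed_fields: set) -> dict:
    """Keep only the fields the AI system actually needs."""
    return {k: v for k, v in record.items() if k in allowed_fields}


def prepare_for_ai(record: dict, allowed_fields: set, id_field: str,
                   secret_key: bytes) -> dict:
    """Drop unneeded fields, then pseudonymize the identifier if present."""
    cleaned = minimize(record, allowed_fields)
    if id_field in cleaned:
        cleaned[id_field] = pseudonymize(str(cleaned[id_field]), secret_key)
    return cleaned
```

Note that this is pseudonymization, not anonymization: anyone holding the key can re-link tokens to known identifiers, so the key must be stored separately from the data, and the remaining fields can still identify someone in combination.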

What to Deprioritize (and Why)

For most small to mid-sized businesses today, investing heavily in complex, custom AI ethics frameworks or hiring a dedicated AI ethicist should be a low priority. While these have their place in larger enterprises, for an SMB with limited headcount and budget they are overhead that diverts resources from more impactful, immediate actions. The goal today is to *act* responsibly, not just to *document* responsibility. Your resources are better spent on integrating ethical thinking into your existing workflows, carefully selecting AI tools, and establishing practical safeguards. Avoid getting bogged down in theoretical debates or trying to build out a comprehensive governance structure that doesn’t directly affect your day-to-day operations or address your most pressing risks. Focus on practical implementation first; formal structures can evolve as your AI adoption matures.

Building a Culture of Responsible AI

Ultimately, responsible AI isn’t just about technology; it’s about the people who build, deploy, and interact with it. Fostering a culture that values ethical considerations is paramount.

- Team Training: Provide basic training for your team on the principles of responsible AI, potential risks, and their role in mitigating them. This doesn’t need to be extensive; even a short workshop can raise awareness.

- Leadership Buy-in: Ensure that management understands and actively champions responsible AI practices. Ethical considerations should be part of the strategic conversation, not an afterthought.

- Regular Review and Adaptation: Periodically review your AI tools and practices against your established ethical guidelines. The AI landscape is evolving rapidly, and your approach to responsibility should adapt accordingly.

- Encourage Open Dialogue: Create an environment where team members feel comfortable raising concerns or providing feedback about the ethical implications or potential biases of AI systems.
