For marketing practitioners, the rapid adoption of AI tools brings more than efficiency gains; it also brings new risks to manage. This article offers actionable strategies for governing your AI tools effectively. You’ll learn how to prioritize compliance, protect your brand’s trust, and make informed decisions about AI use, even with limited resources.

We’ll focus on what truly matters for small to mid-sized teams operating under real-world constraints, emphasizing practical steps over theoretical frameworks.

The Non-Negotiables: Why AI Governance Isn’t Optional

As of March 2026, AI use in marketing is well established, and ignoring AI governance is no longer a viable strategy, even for lean teams. The core risks are tangible: data breaches from feeding sensitive customer information into public models, reputational damage from biased or inaccurate AI outputs, and regulatory fines as AI-specific legislation matures. Your brand’s trust, built over years, can erode quickly if AI tools are used carelessly.

For small to mid-sized businesses, the impact of a single misstep can be disproportionately severe. Proactive governance isn’t about becoming a legal expert; it’s about common-sense risk management.

Prioritizing Your AI Tool Inventory and Usage Policies

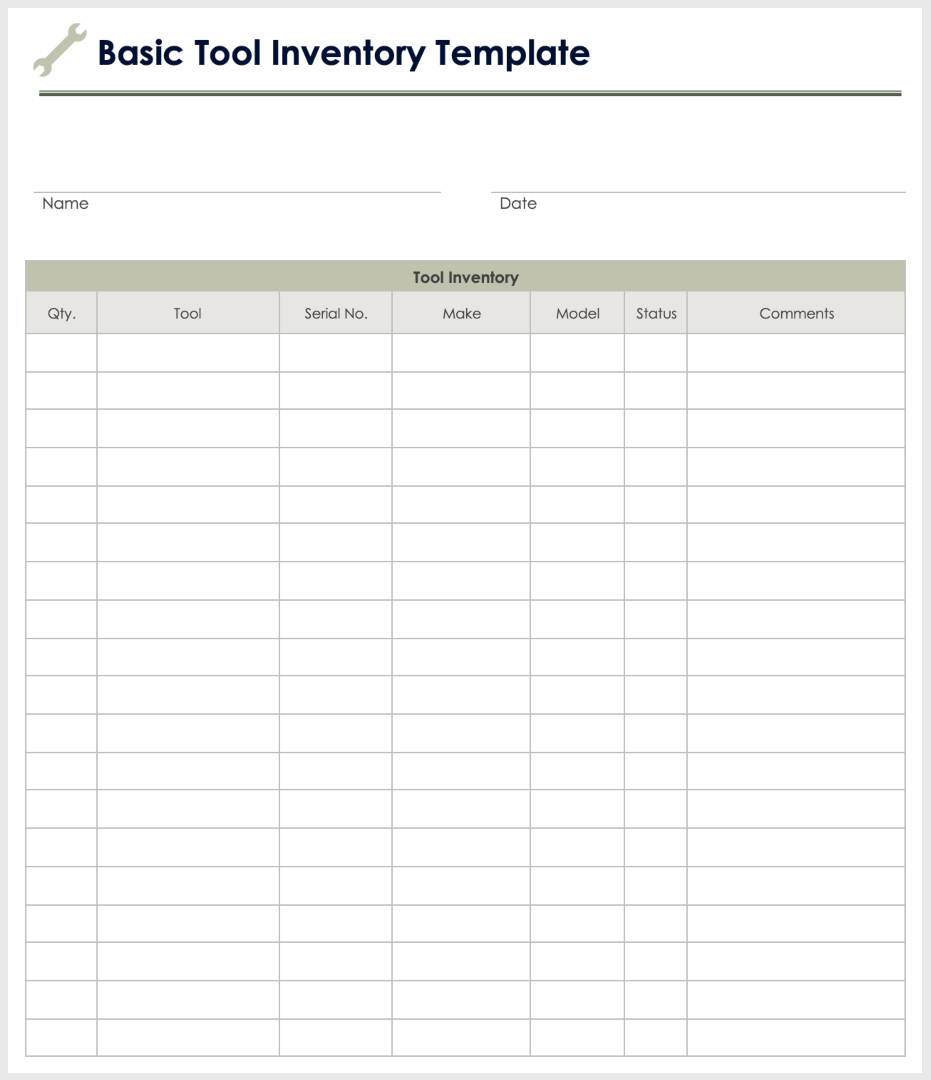

You cannot govern what you do not know you have. The first practical step is to create a simple, living inventory of every AI tool your marketing team uses. This isn’t just about paid subscriptions; it includes free tools, browser extensions, and any AI-powered features within existing platforms.

- What tool is it? (e.g., ChatGPT, Midjourney, Grammarly AI, HubSpot AI features)

- What type of data does it process? (e.g., customer data, internal strategy documents, public content)

- Who uses it? (Specific team members or departments)

- What is its primary purpose? (e.g., content generation, ad copy, image creation, data analysis)

Once inventoried, establish clear, concise usage policies. These don’t need to be legalistic documents. Focus on practical guidelines: never input personally identifiable customer data into public AI models, always review AI-generated content for accuracy and brand voice, and understand the terms of service for each tool. These policies should be easily accessible and understood by everyone on the team.

The initial inventory is a snapshot, not a static artifact. The real challenge lies in its ongoing maintenance. Teams often start with good intentions, but as new tools emerge and existing ones evolve, the inventory quickly becomes outdated without a dedicated process for updates. This creates a false sense of security, as the documented state no longer reflects actual usage, making policy enforcement a guessing game and increasing the risk of overlooked vulnerabilities.

This lack of a current, shared view inevitably leads to tool sprawl. Without a central record, different team members or sub-teams often acquire similar tools to solve the same problem, unaware of existing solutions or subscriptions elsewhere in the organization. This isn’t just a hidden cost in redundant licenses; it fragments workflows, complicates data integration, and dilutes collective expertise, making it harder to standardize processes or leverage volume discounts.

Crafting policies is the easier part; ensuring they are actually understood and followed is where most teams falter. It’s not enough to simply publish a document. The “why” behind each guideline – the specific risks it mitigates – must be clearly communicated and reinforced. Without this context, policies are often perceived as bureaucratic hurdles rather than essential safeguards, leading to low adoption and the very risks they were designed to prevent.

For teams with limited bandwidth, the temptation is to build a perfectly exhaustive inventory from day one. Resist this. Prioritize tools that handle sensitive customer data or are used for high-volume content generation. These carry the highest immediate risk. You can defer a comprehensive audit of every browser extension or minor AI feature until the core, high-impact tools are documented and governed. An imperfect but actionable inventory is far more valuable than an exhaustive one that never gets finished.
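As a minimal sketch of that risk-first approach, the inventory can be kept as simple records and sorted so the riskiest tools get documented first. The field names mirror the inventory questions above; the `SENSITIVE` set, the `high_volume_content` flag, the scoring weights, and the sample users are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass


@dataclass
class AITool:
    name: str                 # What tool is it?
    data_types: set[str]      # What type of data does it process?
    users: list[str]          # Who uses it?
    purpose: str              # What is its primary purpose?
    high_volume_content: bool = False  # assumption: flag for bulk content generation


# Assumption: data categories this team treats as sensitive.
SENSITIVE = {"customer data", "internal strategy documents"}


def risk_score(tool: AITool) -> int:
    """Hypothetical scoring: sensitive data weighs more than content volume."""
    score = 0
    if tool.data_types & SENSITIVE:
        score += 2
    if tool.high_volume_content:
        score += 1
    return score


def prioritize(tools: list[AITool]) -> list[AITool]:
    """Return tools ordered from highest to lowest risk score."""
    return sorted(tools, key=risk_score, reverse=True)


inventory = [
    AITool("Grammarly AI", {"public content"}, ["content team"], "copy editing"),
    AITool("ChatGPT", {"customer data", "public content"}, ["whole team"],
           "content generation", high_volume_content=True),
    AITool("Midjourney", {"public content"}, ["design"], "image creation",
           high_volume_content=True),
]

for tool in prioritize(inventory):
    print(risk_score(tool), tool.name)
```

A shared spreadsheet with the same columns works just as well; the point is that the ordering, not the tooling, decides what gets governed first.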

Data Privacy and Security: Your First Line of Defense

Data privacy comes first. The biggest immediate risk for many marketing teams is inadvertently compromising customer data or proprietary information by feeding it into AI tools without understanding their data handling practices. Assume that any data you input into a public AI model may be used to train that model, unless explicitly stated otherwise by the vendor.

Before adopting any new AI tool, conduct basic vendor due diligence. Ask direct questions about their data retention policies, encryption standards, and whether they use your data for model training. Prioritize tools that offer enterprise-grade data privacy features, even if they come at a slightly higher cost. Where possible, anonymize or use synthetic data for testing and development, especially when experimenting with new AI capabilities.

Many teams, facing tight deadlines and resource constraints, are tempted by the immediate productivity gains of readily available, often free, AI tools. This creates a significant blind spot: the proliferation of “shadow AI” where individual team members use unvetted tools with sensitive data, bypassing established privacy protocols. The immediate convenience masks a growing compliance liability and a fragmented data footprint that becomes nearly impossible to track or secure effectively.

Beyond direct exposure, there is a slower downstream effect: erosion of your competitive advantage. If proprietary customer insights or strategic campaign data end up in a public model’s training data, the result is not just a privacy breach; it can dilute the uniqueness of your own data. Worse, if the model later draws on your inputs when generating outputs for competitors, you have helped level the playing field against yourself. This is rarely an immediate, catastrophic failure; it is a gradual strategic disadvantage that is hard to pinpoint until it is too late.

What should be deprioritized? Don’t get bogged down in trying to implement a perfect, enterprise-grade data governance framework from day one if your resources are limited. Instead, prioritize a clear, non-negotiable “no sensitive data in public AI” policy and enforce it strictly. Focus on identifying and securing the most critical data streams first. Trying to audit every single tool and data point simultaneously often leads to paralysis; a focused, risk-based approach is more effective for lean teams.
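One lightweight way to back up a “no sensitive data in public AI” rule is a quick check on text before it is pasted into a tool. The sketch below is an assumption-heavy illustration: the two regular expressions only catch email addresses and phone-like numbers, the function names are hypothetical, and it will miss names, addresses, and account details, so it supplements human judgment rather than replacing it.

```python
import re

# Assumption: two rough patterns for obvious identifiers; real PII is broader.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "phone": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def find_sensitive(text: str) -> dict[str, list[str]]:
    """Return every pattern match found in the text, grouped by label."""
    hits: dict[str, list[str]] = {}
    for label, pattern in PATTERNS.items():
        found = pattern.findall(text)
        if found:
            hits[label] = found
    return hits


def safe_to_send(text: str) -> bool:
    """True only if none of the patterns matched."""
    return not find_sensitive(text)


prompt = "Write a follow-up to jane@example.com, phone +1 (555) 010-9999"
print(find_sensitive(prompt))
print(safe_to_send("Draft three taglines for our spring sale"))
```

A passing check means only that nothing obvious was found, not that the text is free of sensitive data.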

Addressing Bias and Ensuring Ethical Outputs

AI models are trained on vast datasets, which often reflect existing societal biases. This means AI-generated content, ad targeting suggestions, or personalization algorithms can inadvertently perpetuate or amplify these biases. For marketers, this can lead to alienating segments of your audience, damaging your brand’s reputation, or even facing accusations of discrimination.

Practical steps: always subject AI-generated outputs to human review for fairness, inclusivity, and accuracy, and bring a range of perspectives from your team into that review. No AI tool is perfectly unbiased, and your role is to mitigate its potential for harm. This isn’t about achieving theoretical perfection, but about preventing real-world negative impacts on your audience and brand.

What to Deprioritize (and Why) for Lean Teams

For small to mid-sized marketing teams, the temptation can be to try to implement every best practice from larger enterprises. Resist this. You should explicitly deprioritize building a dedicated AI ethics committee or investing in complex, custom AI model explainability tools. These initiatives are resource-intensive, require specialized expertise, and often yield diminishing returns for teams focused on practical marketing outcomes. Your budget and headcount are better spent elsewhere.

Instead, channel your efforts into the foundational elements: clear internal usage policies, robust data privacy checks for every tool, and a consistent human review process for AI-generated outputs. Focus on mitigating the most immediate and impactful risks rather than chasing academic perfection in AI governance.

Building a Culture of Responsible AI Use

Effective AI governance isn’t just about policies and checklists; it’s about fostering a culture of responsible use within your team. This requires ongoing education, not just a one-time training session. Conduct regular, short discussions about new AI tools, potential pitfalls, and successful applications. Encourage team members to critically evaluate AI suggestions rather than accepting them blindly.

Promote open communication where team members feel comfortable raising concerns about AI outputs or usage. This collective vigilance is often more effective than any top-down mandate, especially in dynamic marketing environments.

Staying Agile: Adapting to Evolving AI and Regulations

The AI landscape is in constant flux, with new tools emerging weekly and regulatory frameworks evolving. Your AI governance strategy cannot be a static document. Schedule quarterly or twice-yearly reviews of your AI tool inventory and usage policies. This ensures they remain relevant and address new risks.
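A review cadence is easier to keep when overdue items surface automatically. As a rough sketch, assuming each inventory entry records the date it was last reviewed and a 90-day interval stands in for “quarterly” (the function name, dates, and interval are illustrative):

```python
from datetime import date, timedelta

# Assumption: 90 days approximates a quarterly review cycle.
REVIEW_INTERVAL = timedelta(days=90)


def overdue(last_reviewed: dict[str, date], today: date) -> list[str]:
    """Return the names of tools whose last review is older than the interval."""
    return sorted(
        name
        for name, reviewed_on in last_reviewed.items()
        if today - reviewed_on > REVIEW_INTERVAL
    )


# Hypothetical review dates for two inventoried tools.
last_reviewed = {
    "ChatGPT": date(2025, 11, 1),
    "Midjourney": date(2026, 2, 10),
}

print(overdue(last_reviewed, date(2026, 3, 15)))  # ['ChatGPT']
```

The same check could live in a spreadsheet formula or a calendar reminder; what matters is that someone is prompted when a review date passes.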

Keep an eye on emerging data privacy and AI-specific regulations, such as the EU AI Act, even if they don’t directly apply to your region or business size immediately. These frameworks often set precedents and indicate the direction of future compliance requirements. Staying informed allows you to anticipate changes and adapt your practices proactively, minimizing disruption down the line.
